Using JMP Easy DOE in JMP 17

For anyone who needs a starting point for designing an experiment, Easy DOE offers a step-by-step guide to the DOE workflow, from...

WesleySantos

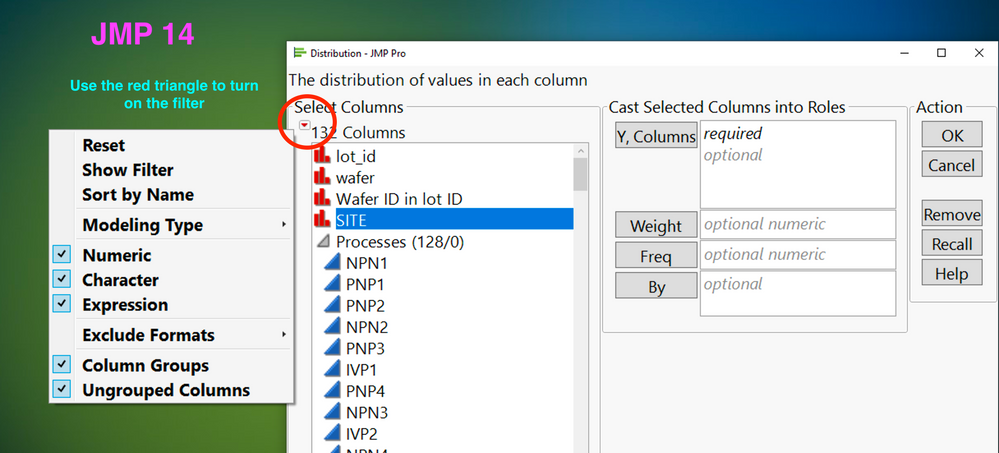

Do you have lots and lots of columns, and struggle to find the right one when you launch an analysis? Find out how to turn on the filter in JMP 14 and...

Laura_Higgins

Keep reading to learn how to create a customized decision tree model that allows you to choose which feature to split the branch over. By doing so, yo...

yasmine_hajar

Jed Campbell led a 45-minute session sharing essential tips and tricks for implementing robust quality management systems - including building a cultu...

Katie-Beth_V

In this video, learn how to customize the Workflow Builder option in JMP 17. Find out how to improve your analyses through automation.

WesleySantos

In this video, learn what the Workflow Builder option in JMP 17 is. Find out how to build and manage reports in an automated way.

WesleySantos

In this video, learn how to access graphs in JMP and use Graph Builder to build interactive graphs.

WesleySantos

In this video, learn how to import data into JMP. Find out how to create filters, organize your data, and share your results.

WesleySantos

Once you build your models in Python, you can bring them into JMP to visualize, understand, and communicate them. Below are two examples: Building two...

wendytseng

In this video, learn how the analysis workflow works in JMP. Find out how the JMP menu works and how to access Help.

WesleySantos

This six-part web series was designed to help you unlock the power of design of experiments (DOE). For those new to DOE, the series will help you unde...

scott_allen

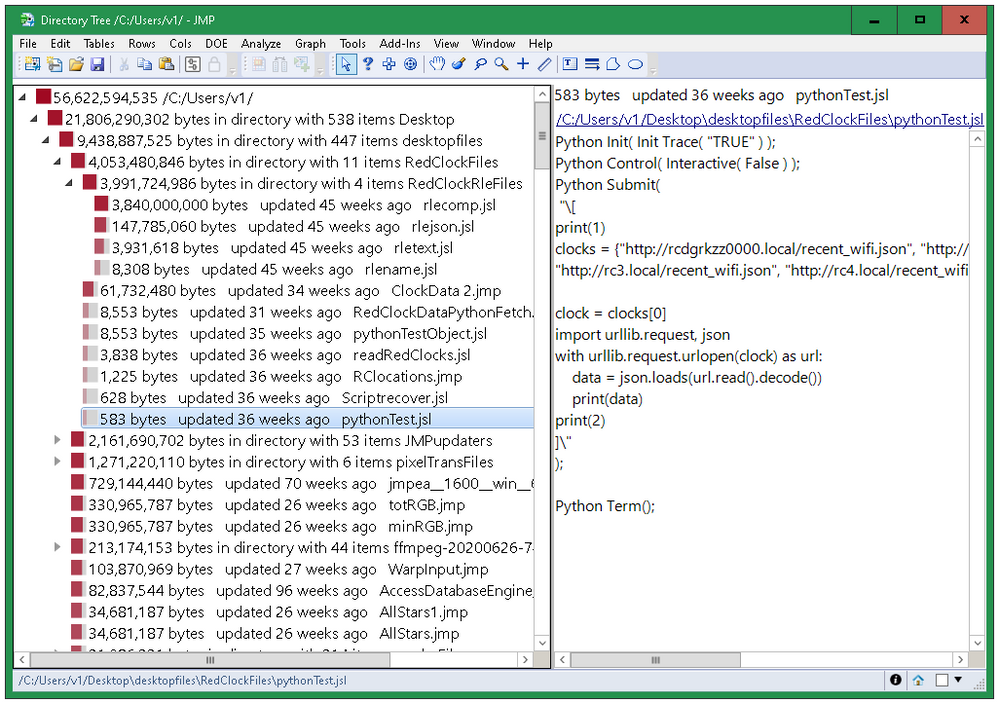

See which folders are using the most space.

Craige_Hales

Do you use JMP and Python? Are you using them independently? Python for certain things…JMP for others? Did you know that there are many ways they work...

wendytseng

A group of JMP System Engineers demonstrates how easily JMP and Python can be integrated to handle everyday tasks like cleaning data, and more advanced...

Bill_Worley

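Recently, we held a web seminar for customers in Japan titled "Introduction to Statistical Hypothesis Testing Using JMP." Given the nature of the seminar, I expected most participants to work in medical or pharmaceutical fields, but in fact many attendees came from manufacturing, where they develop semiconductors, electrical and electronic components, materials, and the like. In this seminar, JM...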

Masukawa_Nao

Is your data obscuring your data labels?

Byron_JMP

If you've wondered how to import your database using JMP's embedded Python environment, here are some examples that may help you get started. In the f...

Dahlia_Watkins

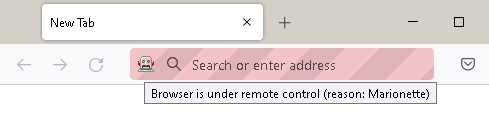

Use JSL to control a web browser.

Craige_Hales

In our first of a series relevant to anyone creating or testing software applications, we begin with "What is Software Testing?"

Ryan_Lekivetz
