Retrospective Power Analysis

Hi,

I've just performed a t-test that was inconclusive, so I was using the retrospective power option to get a feel for whether it's worth performing additional tests.

So, I select Power from the Oneway Analysis red triangle menu and get the retrospective power options.

The table takes alpha, sigma and N directly from the existing data. But it shows a delta of half the difference in means observed in the test.

The LSN and power values it gives make more sense using this 'half number' than if I put in the observed difference in means.

Can anyone explain why it defaults to half the observed difference? Is there something to this, or is it just a quirk of JMP?

I wondered if it was a number calculated from the observed difference and some confidence interval or something like that, but it would be good to have comments from a proper statistician. I can't find anything in the documentation.

Re: Retrospective Power Analysis

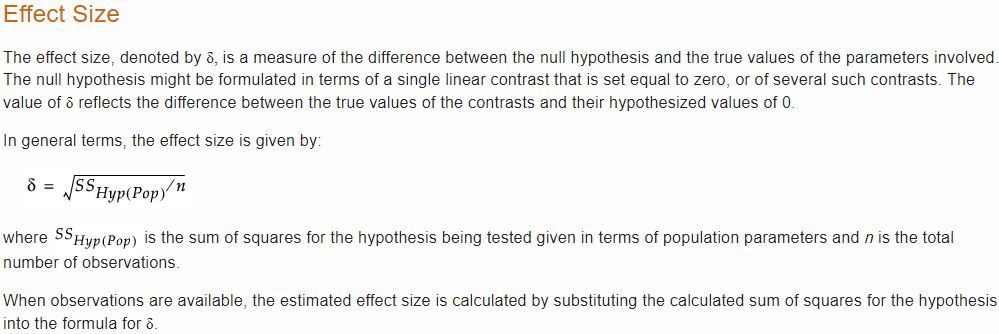

From JMP Help:
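The Help excerpt is only an image here, so as a rough sketch of where the factor of one half can come from: as far as I know, JMP's Power Details set the default delta to the square root of the hypothesis sum of squares divided by N. Treat that definition as an assumption to check against your version of the documentation. The symbols below ($n_i$, $\bar{y}_i$, $\bar{y}$, $d$) are my own notation, not JMP's.

$$
\delta = \sqrt{\frac{SS_{\text{hyp}}}{N}}, \qquad SS_{\text{hyp}} = \sum_{i} n_i \left(\bar{y}_i - \bar{y}\right)^2
$$

For two groups of equal size, $n_1 = n_2 = N/2$, with observed difference $d = \bar{y}_1 - \bar{y}_2$, each group mean sits $d/2$ away from the grand mean $\bar{y}$, so

$$
SS_{\text{hyp}} = \frac{N}{2}\left(\frac{d}{2}\right)^2 + \frac{N}{2}\left(\frac{d}{2}\right)^2 = \frac{N d^2}{4}
\quad\Longrightarrow\quad
\delta = \sqrt{\frac{N d^2 / 4}{N}} = \frac{|d|}{2}
$$

With unequal group sizes the same definition gives $\delta = \frac{\sqrt{n_1 n_2}}{N}\,|d|$, which is at most $|d|/2$. Under this reading, delta is a root-mean-square distance of the group means from the grand mean rather than the distance between the two means, so a difference of about 10 shows up as a delta of about 5.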

Re: Retrospective Power Analysis

Can you help me understand the difference between the effect size delta and the difference in means from my two-sample t-test? Surely the difference in means is the best estimate of the true effect size? The difference in means is ~10 and the effect size is ~5. What am I missing?!

Re: Retrospective Power Analysis

The retrospective power analysis is correct, but it is also cumbersome. I suggest a different approach that is closer to your way of thinking. Keep the original analysis open for reference.

Select DOE > Design Diagnostics > Sample Size and Power. Click Two Sample Means. The entries in this calculator match what you have in mind. You can, if you choose, use the RMSE from your analysis (assuming the model and its assumptions are correct) for the Std Dev value. Leave the Extra Parameters value equal to 0. The Difference is the effect as you see it, that is, the difference between the two means. The rest should be clear enough.
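If it helps to see that the two ways of entering the effect describe the same thing, here is a small Python sketch. It assumes the retrospective delta is sqrt(SS_hyp / N), which is my reading of the JMP definition and should be checked against the documentation. The function names and the numbers (two groups of 10, means 50 and 60, sigma 12) are made up for illustration. The power function uses a normal approximation, not the exact noncentral calculation a statistics package would use, so expect its output to differ somewhat from JMP's.

```python
from math import sqrt
from statistics import NormalDist


def delta_from_groups(n1, mean1, n2, mean2):
    """Effect size as sqrt(SS_hyp / N) (assumed JMP-style definition)."""
    n = n1 + n2
    grand_mean = (n1 * mean1 + n2 * mean2) / n
    ss_hyp = n1 * (mean1 - grand_mean) ** 2 + n2 * (mean2 - grand_mean) ** 2
    return sqrt(ss_hyp / n)


def noncentrality_from_delta(delta, sigma, n):
    """Noncentrality when the effect is entered as delta: N * delta^2 / sigma^2."""
    return n * delta ** 2 / sigma ** 2


def noncentrality_from_difference(diff, sigma, n1, n2):
    """Noncentrality when the effect is entered as the difference in means."""
    return diff ** 2 / (sigma ** 2 * (1 / n1 + 1 / n2))


def approx_power(noncentrality, alpha=0.05):
    """Two-sided power via a normal approximation (not an exact calculation)."""
    z = NormalDist()
    crit = z.inv_cdf(1 - alpha / 2)
    shift = sqrt(noncentrality)
    return z.cdf(shift - crit) + z.cdf(-shift - crit)


# Hypothetical example values, not taken from the thread's data.
n1 = n2 = 10
mean1, mean2 = 50.0, 60.0
sigma = 12.0

delta = delta_from_groups(n1, mean1, n2, mean2)
lam_delta = noncentrality_from_delta(delta, sigma, n1 + n2)
lam_diff = noncentrality_from_difference(mean2 - mean1, sigma, n1, n2)

print("difference in means:", mean2 - mean1)
print("delta:", delta)
print("noncentrality via delta:", lam_delta)
print("noncentrality via difference:", lam_diff)
print("approximate power:", approx_power(lam_delta))
```

Both routes give the same noncentrality, so they imply the same power. The only thing that changes is whether the effect is entered as the difference between the means or as delta, which for equal group sizes is half that difference.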
