JMP User Community, Discussions: solve problems, and share tips and tricks with other JMP users.

Something Like Solver with Nonlinear?

Hello,

Love this stuff, but I'm having trouble using JMP to solve a problem that I currently handle in Excel.

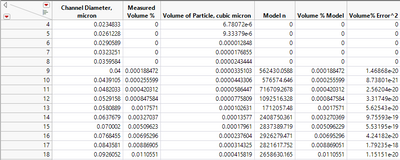

Picture is attached. I have an analytical measurement of volume % of a given particle size on each row. I need to convert each row into a particle count for some other calculations, so I made a model that assigns a particle count to each particle size, and then let Solver make the model predicted volume % match the analytically measured volume %.

In Excel, I assign a "Model n" count of 100000 units in each row, and calculate the volume of those particles in the row. Then I calculate the total volume at the bottom of the spreadsheet, so I can calculate a model volume % in each row.

Next, I calculate the squared error of the (model - analytical) volume percentage in that row, and sum the squares of the error for the entire model. I have Solver minimize this SSE by changing the number of units in each row. Additionally, I constrain the number of units to be greater than or equal to zero.

It fits relatively quickly, and I save the unit counts in each row for further calculation.
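The spreadsheet calculation described above can be sketched in Python roughly as follows. This is a minimal sketch, not the actual workbook: it assumes spherical particles (per-particle volume π·d³/6), and the function names and the three-row example data are hypothetical.

```python
import math

def particle_volume(diameter):
    """Volume of one sphere of the given diameter (assumed particle shape)."""
    return math.pi * diameter ** 3 / 6.0

def model_volume_pct(counts, diameters):
    """Model volume % per row: row volume divided by total volume, times 100."""
    row_volumes = [n * particle_volume(d) for n, d in zip(counts, diameters)]
    total = sum(row_volumes)
    return [100.0 * v / total for v in row_volumes]

def sse(counts, diameters, measured_pct):
    """Sum of squared (model - measured) volume % over all rows."""
    model = model_volume_pct(counts, diameters)
    return sum((m - y) ** 2 for m, y in zip(model, measured_pct))

# Hypothetical three-row example, starting from 100000 units in each row
diameters = [1.0, 2.0, 3.0]
measured_pct = [20.0, 30.0, 50.0]
counts = [100000.0] * 3

print(model_volume_pct(counts, diameters))
print(sse(counts, diameters, measured_pct))
```

The `sse` value is the quantity that Solver minimizes by changing `counts`, subject to every count being greater than or equal to zero.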

So, I've done non-linear fits with different models before, but never one that required a fit at both the row level and the overall model level. You can't just fit a row individually, as the volume % changes as the other rows change. I don't quite understand how to feed it a parameter for each row, then solve all the parameters to fit the model, and finally dump the parameters for each row.

Would love to have help on this one. I've banged my head against the wall for some time, but haven't seen the right solution. I will then be applying this to about 1000 samples afterwards....

Thanks in advance! Fred

Re: Something Like Solver with Nonlinear?

Can you express the relationship analytically? You could use the expression in a column formula as a custom model in the Nonlinear platform. The default loss function is least squares.

Can you show a plot of the data?

Re: Something Like Solver with Nonlinear?

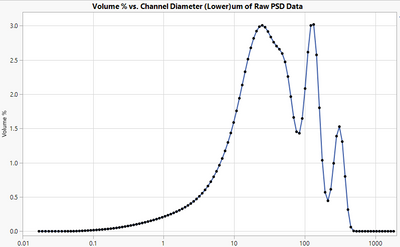

I don't really know if I can turn this into something analytical. The data is not evenly spaced (due to the machine) and logarithmic, and the distributions vary widely. I originally tried to make an analytical function, but came up with strange fits, likely indicating my model wasn't (or couldn't be) well defined. I also found some articles on this instrument indicating that direct translation wasn't possible, but I don't know that they ever tried this method.

This "count simulation" route worked well for me in Excel, but I think JMP should be able to do the same in a much more efficient fashion, especially if I have to process thousands of runs. I could code it in VBA with Excel, but I would rather use the right tool.

I've attached one example of a distribution that I would try to match, but like I said, they vary widely.

Re: Something Like Solver with Nonlinear?

You might try a different approach with JMP. Please follow these steps:

- Select Analyze > Reliability and Survival > Life Distribution.

- Select the Volume % column and click Y, Time to Event.

- Click OK.

- Click the red triangle at the top and select Fit Mixture.

- Type 4 in the Quantity box next to Normal.

- Select Separable Clusters for the Starting Value Method.

- Click Go.

Does this approach look promising?

Please see this content in JMP Help.
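For context, a mixture of normals, which is what the Fit Mixture step above fits, models the density as a weighted sum of normal components. The Python sketch below only evaluates such a density for given parameters. It does not reproduce JMP's fitting algorithm, and the four sets of weights, means, and standard deviations are made-up illustrative values.

```python
import math

def normal_pdf(x, mean, sd):
    """Density of a normal distribution at x."""
    z = (x - mean) / sd
    return math.exp(-0.5 * z * z) / (sd * math.sqrt(2.0 * math.pi))

def mixture_pdf(x, weights, means, sds):
    """Density of a normal mixture; the weights are assumed to sum to 1."""
    return sum(w * normal_pdf(x, m, s) for w, m, s in zip(weights, means, sds))

# Four hypothetical components (illustrative values only)
weights = [0.1, 0.3, 0.4, 0.2]
means = [1.0, 3.0, 4.0, 7.0]
sds = [0.3, 0.5, 0.6, 0.8]

# Crude Riemann-sum check over [-5, 15] that the density integrates to about 1
dx = 0.001
area = sum(mixture_pdf(-5.0 + i * dx, weights, means, sds) * dx for i in range(20000))
print(round(area, 3))
```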

Re: Something Like Solver with Nonlinear?

I tried the Life Distribution analysis, but I really don't understand quite how it helps me. Can you help me on the approach?

Ultimately, I need to get a count for each bucket of particle size. My best approach is to simulate the particle count (on each row) to make my model's volume %'s match the measured volume %'s. So, basically I'll have 124 parameters, limited to being greater than or equal to zero, that will have to be varied to fit the curve. The use of volume % instead of volume forces the objective solver to have to vary all of the parameters at once, not just one at a time (I think), since it needs the total volume of the set to calculate the volume %.
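One observation may simplify this, under the assumption that each row's model volume is simply count times a per-particle volume (spheres are assumed below). In that case the model volume % depends only on the relative counts, so the counts are determined only up to a common scale factor, and counts proportional to measured volume % divided by per-particle volume reproduce the measured percentages directly. The Python sketch below illustrates this; the function names, the example data, and the `total_count` scale are hypothetical.

```python
import math

def counts_from_volume_pct(diameters, measured_pct, total_count=1_000_000):
    """Counts proportional to volume % / per-particle volume, scaled to total_count."""
    per_particle = [math.pi * d ** 3 / 6.0 for d in diameters]  # assumed spheres
    raw = [p / v for p, v in zip(measured_pct, per_particle)]
    scale = total_count / sum(raw)
    return [r * scale for r in raw]

def volume_pct(counts, diameters):
    """Volume % per row implied by the given counts."""
    vols = [n * math.pi * d ** 3 / 6.0 for n, d in zip(counts, diameters)]
    total = sum(vols)
    return [100.0 * v / total for v in vols]

# Hypothetical three-row example
diameters = [1.0, 2.0, 3.0]
measured_pct = [20.0, 30.0, 50.0]

counts = counts_from_volume_pct(diameters, measured_pct)
print(counts)
print(volume_pct(counts, diameters))  # compare with measured_pct
```

If the real model volume is not a simple count-times-volume product, this shortcut does not apply and an iterative fit is still needed.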

Not sure if this clarifies the question, but it seems like something JMP should be capable of.

Re: Something Like Solver with Nonlinear?

Your example looked like some kind of spectrum with four peaks (two overlapping), so I thought that you wanted to fit them.

You might look at the Minimize function in Help > Scripting Index > Functions. You would have to use it in a column formula or in a script, with the arguments set up accordingly.
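To illustrate what a Solver-style minimization with non-negativity bounds does, here is a minimal Python sketch. It is not JMP's Minimize function or its algorithm: it uses a simple compass (coordinate) search as a stand-in, assumes spherical particles, and all names and the three-row data are hypothetical.

```python
import math

def minimize_nonneg(objective, start, step, tol=1e-3, max_sweeps=50_000):
    """Compass (coordinate) search that keeps every parameter >= 0."""
    x = list(start)
    best = objective(x)
    for _ in range(max_sweeps):
        if step < tol:
            break
        improved = False
        for i in range(len(x)):
            for delta in (step, -step):
                trial = list(x)
                trial[i] = max(0.0, x[i] + delta)  # enforce the lower bound
                value = objective(trial)
                if value < best:
                    x, best, improved = trial, value, True
                    break
        if not improved:
            step /= 2.0  # no move helped, so refine the step size
    return x, best

def make_sse(diameters, measured_pct):
    """Build the SSE objective between model and measured volume %."""
    volumes = [math.pi * d ** 3 / 6.0 for d in diameters]  # assumed spheres
    def sse(counts):
        row = [n * v for n, v in zip(counts, volumes)]
        total = sum(row)
        if total <= 0.0:
            return math.inf  # all counts zero: volume % is undefined
        return sum((100.0 * r / total - y) ** 2 for r, y in zip(row, measured_pct))
    return sse

# Hypothetical three-row example, starting from 100000 units per row
diameters = [1.0, 2.0, 3.0]
measured_pct = [20.0, 30.0, 50.0]
objective = make_sse(diameters, measured_pct)
counts, final_sse = minimize_nonneg(objective, [100000.0] * 3, step=50000.0)
print(counts, final_sse)
```

A derivative-free search is used here only because it is short and easy to follow; a dedicated optimizer would normally be faster for 124 parameters.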

Re: Something Like Solver with Nonlinear?

Hi Mark,

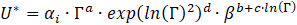

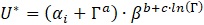

I'm also interested in the question about an iterative solver in JMP. The context is a rheology model that follows a power law in the high-flow regime, with an additive term bounding the low-flow limit. The best I can do in JMP is to use a quadratic model in shear rate -- looks about right graphically, but is non-physical.

Here is the best I can do in JMP using log transforms in "Fit Model" (quadratic in gamma, with a cross term interaction between gamma and beta):

Here is the preferred form derived using Solver in Excel -- gets rid of the quadratic dependence, and captures the regime shift in gamma:

Do you know how I can set up the mixed model in JMP to solve for alpha(i), a, b, and c? Note that U*, alpha, gamma, and beta are all dimensionless parameters. Thanks!

Re: Something Like Solver with Nonlinear?

Please see this section of JMP Help about custom non-linear models.

- © 2026 JMP Statistical Discovery LLC. All Rights Reserved.
