
How to get correct and Predictive model

Hi, I need your help again.

I've been working on an analysis recently, and the setup is simple.

The data has 376 rows, 223 columns, and a single Y value.

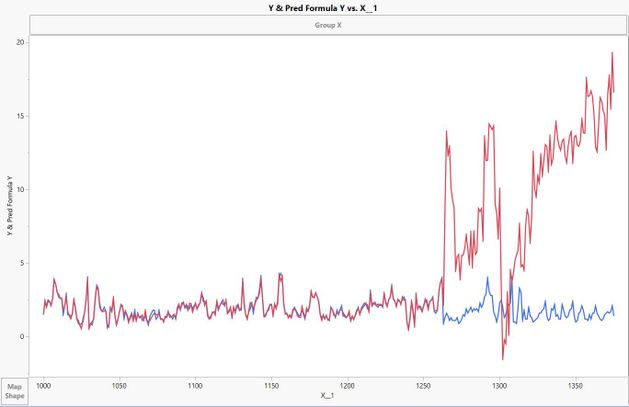

To get a prediction model for Y, I used Fit Model (Standard Least Squares personality, Effect Screening emphasis), and I first got an RSquare of 0.95 using rows 1 to 263.

I saved the prediction formula to a new column and compared the predictions for the remaining rows, 264 to 376, with the actual measurements.

As you can see, the error is much larger on the new data.

I wonder what additional work I can do, or whether other features would help.

I have attached my data. Thank you.

(FYI, X_1 is just a row index.)

Accepted Solutions


Re: How to get correct and Predictive model

You need to provide more context about these variables. That is a lot of potential explanatory variables, and they appear to be highly correlated with each other (which makes me think you might want to try something like a principal components analysis to reduce the features). Also, it looks as if it is time series data, in which case you should analyze it as such.

Finally, the first 263 rows certainly behave differently from what comes after, so no matter how good your model is on those rows, it is not likely to predict the trend that suddenly appears after that row. I would want to focus on whether any of the predictors can pick up on that change in the pattern. You are not likely to find that by modeling only the 263 rows that don't exhibit the trend.

So, you have sort of broken your data into training and test sets, but your training set looks very different from your test set, and that is not a good way to produce a model for the test data. Randomly selecting the training and test data (use a validation column) would be a better approach. But, first and foremost, is this time series data (it looks like it to me)?
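To illustrate the point about the training rows looking different from the test rows, here is a minimal Python sketch on synthetic data (not the attached file). It assumes a hypothetical predictor `x2` that stays flat for the first 263 rows and only starts trending afterwards; the helper names `fit_ols` and `rmse` are made up for this example. It is not a JMP script, only a rough picture of why a first-block split can miss a later trend while a random hold-out does not.

```python
import numpy as np

def fit_ols(X, y):
    """Least-squares coefficients, with an intercept column prepended."""
    A = np.column_stack([np.ones(len(X)), X])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    return coef

def rmse(coef, X, y):
    """Root-mean-square prediction error of a fitted model on (X, y)."""
    A = np.column_stack([np.ones(len(X)), X])
    return float(np.sqrt(np.mean((A @ coef - y) ** 2)))

rng = np.random.default_rng(0)
n_rows, n_train = 376, 263          # same sizes as in the question
rows = np.arange(n_rows)

# Synthetic stand-in data (an assumption, not the poster's table):
# x2 is constant for the first 263 rows and ramps up afterwards.
x1 = rng.normal(size=n_rows)
x2 = np.zeros(n_rows)
x2[n_train:] = np.linspace(0.0, 3.0, n_rows - n_train)
X = np.column_stack([x1, x2])
y = x1 + 2.0 * x2 + rng.normal(scale=0.1, size=n_rows)

# Split 1: train on the first block, test on the rest (as in the question).
first_train, first_test = rows[:n_train], rows[n_train:]
chrono_rmse = rmse(fit_ols(X[first_train], y[first_train]),
                   X[first_test], y[first_test])

# Split 2: random hold-out of the same size, which is the role a
# validation column plays.
shuffled = rng.permutation(n_rows)
rand_train, rand_test = shuffled[:n_train], shuffled[n_train:]
random_rmse = rmse(fit_ols(X[rand_train], y[rand_train]),
                   X[rand_test], y[rand_test])

print(f"first-block split RMSE: {chrono_rmse:.2f}")
print(f"random split RMSE:      {random_rmse:.2f}")
```

In this toy setup the first-block model never sees `x2` vary, so it cannot learn its effect and the hold-out error is far larger than with the random split.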


Re: How to get correct and Predictive model

On the question of where to start in getting the "correct" predictive model, there are two schools of thought:

1. Develop mathematical models based solely on data analysis (e.g., neural networks, PCA).

2. Understand, on a scientific basis, the relationships between the input variables and the output variable (what Deming called the analytic problem).

Being deterministic, the 2nd approach is what I prefer. This begins with statements of hypotheses about the relationships between inputs and outputs. It requires an understanding of inference (over what conditions do you want the model to be effective?). Then comes the appropriate "sampling plan" to acquire the data (directed sampling or experimentation). Certainly you can examine historical data, but only to help develop hypotheses, which then need to be tested.

Your data set lacks any context (as Dale points out). There is no "meaning" to the columns, just columns of numbers. I'm not sure why Dale thinks this is a time series, as I see no times or dates in the data. So, creating the correct predictive model is left to option 1 above. If you include all of the columns (224) and run Fit Model, you get an Rsquare Adj of .59 and an Rsquare of .83. These values are too far apart, which suggests you have over-specified the model (unimportant terms are in the model). If you look at the VIFs (Parameter Estimates table), many exceed the usual threshold of 5 (or 10), which indicates multicollinearity. So the model needs to be reduced. However, there is no informed way to do this, as there is no context.
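For readers who want to see where those two diagnostics come from, here is a small Python sketch. It uses the standard adjusted R-square formula and the usual VIF definition (regress each predictor on all the others). The function names `adjusted_r2` and `vif` are made up for this example, the count of 223 model terms is an assumption taken from the column count in the question, and the VIF demonstration runs on synthetic columns rather than the attached data.

```python
import numpy as np

def adjusted_r2(r2, n_rows, n_terms):
    """Adjusted R-square: penalizes R-square for the number of model terms."""
    return 1.0 - (1.0 - r2) * (n_rows - 1) / (n_rows - n_terms - 1)

def vif(X):
    """Variance inflation factor of each column of X.

    VIF_j = 1 / (1 - R_j^2), where R_j^2 comes from regressing
    column j on all other columns plus an intercept.
    """
    X = np.asarray(X, dtype=float)
    n, p = X.shape
    out = np.empty(p)
    for j in range(p):
        others = np.column_stack([np.ones(n), np.delete(X, j, axis=1)])
        coef, *_ = np.linalg.lstsq(others, X[:, j], rcond=None)
        resid = X[:, j] - others @ coef
        ss_res = resid @ resid
        ss_tot = np.sum((X[:, j] - X[:, j].mean()) ** 2)
        out[j] = ss_tot / ss_res      # same as 1 / (1 - R_j^2)
    return out

# With Rsquare = .83, 376 rows and (assumed) 223 terms, the penalty is large.
print(round(adjusted_r2(0.83, 376, 223), 2))

# Synthetic illustration: b is almost a copy of a, c is unrelated.
rng = np.random.default_rng(1)
a = rng.normal(size=200)
b = a + 0.1 * rng.normal(size=200)
c = rng.normal(size=200)
vifs = vif(np.column_stack([a, b, c]))
print(np.round(vifs, 1))
```

Plugging in the reported Rsquare gives an adjusted value near .58, close to the .59 quoted above, so the gap is mostly explained by the sheer number of terms relative to rows. In the synthetic example the two near-duplicate columns get very large VIFs while the unrelated column stays near 1.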


Re: How to get correct and Predictive model

Also, the Effect Summary report ranks all 223 effects by p-value, from smallest to largest.

I wonder what criterion I should use to decide which variables to exclude from the predictive model.

Should I keep the top 30%, or those with a p-value under 0.05?


Re: How to get correct and Predictive model

Thank you for your feedback.

First of all, as you said, this is time series data.

As you suggested, I checked the correlations and reduced the list of variables to 40, and I think I got satisfactory results.

Since the Y value was not measured in real time, there is less data than I thought, but I think I was able to determine the cause by using a Parallel Plot, etc.
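For anyone wondering what a correlation-based reduction could look like in practice, here is one possible sketch in Python. The post does not say which procedure was actually used, so this is only an assumption: a greedy filter that keeps a column only if its absolute correlation with every column already kept is at or below a threshold. The function name `drop_correlated` and the 0.9 cutoff are hypothetical choices for illustration.

```python
import numpy as np

def drop_correlated(X, threshold=0.9):
    """Return indices of columns to keep after a greedy correlation filter.

    Columns are visited left to right; a column is kept only if its
    absolute Pearson correlation with every already-kept column is
    at or below `threshold`.
    """
    corr = np.abs(np.corrcoef(X, rowvar=False))
    keep = []
    for j in range(corr.shape[0]):
        if all(corr[j, k] <= threshold for k in keep):
            keep.append(j)
    return keep

# Synthetic illustration: column 1 is almost a copy of column 0.
rng = np.random.default_rng(2)
a = rng.normal(size=200)
b = a + 0.05 * rng.normal(size=200)
c = rng.normal(size=200)
kept = drop_correlated(np.column_stack([a, b, c]))
print(kept)
```

The result depends on column order, since the first member of each correlated group is the one that survives; ordering the columns by subject-matter importance before filtering is one way to make that choice deliberate.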
