CROSS VALIDATION - VALIDATION COLUMN METHOD

When using the validation column method for cross validation, we split the data set into training, validation, and test sets. The split ratio is specified by the user. Is there any guideline or reference for deciding on the split ratio (such as 60:20:20, 70:15:15, 50:25:25, or 80:10:10)? Is it also chosen based on the total number of observations, N?

Accepted Solutions

Re: CROSS VALIDATION - VALIDATION COLUMN METHOD

To your original question, no, there are not specific rules about how much data to leave out. In the JMP Education analytics courses, we advise you to hold out as much data as you are comfortable with, with at least 20% held out. If you feel the training set is too small to hold back that many rows, consider k-fold cross validation. How many rows are you willing to sacrifice to validation? Use k = n / that many rows. If k < 5 using that formula, consider leave-one-out cross validation.
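The rule of thumb above can be written out as a small helper. This is only a sketch of the arithmetic as stated in the post; the function name `suggest_strategy` and the choice to round k down to a whole number are assumptions made for illustration, not something JMP provides.

```python
def suggest_strategy(n_rows, holdout_rows):
    """Apply the rule of thumb: k = n / rows you are willing to hold out.

    Returns ("k-fold", k) when k >= 5, otherwise ("leave-one-out", n_rows).
    Rounding k down to an integer is an assumption of this sketch.
    """
    if not 0 < holdout_rows <= n_rows:
        raise ValueError("holdout_rows must be between 1 and n_rows")
    k = n_rows // holdout_rows
    if k < 5:
        return ("leave-one-out", n_rows)
    return ("k-fold", k)


print(suggest_strategy(72, 12))  # 72 // 12 gives 6 folds
print(suggest_strategy(72, 18))  # 72 // 18 gives 4, which is below 5
```

With 72 rows, being willing to hold out 12 rows at a time points to 6-fold cross validation, while holding out 18 rows gives k = 4 and so falls into the leave-one-out branch of the rule.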

Re: CROSS VALIDATION - VALIDATION COLUMN METHOD

Hi @sreekumarp,

Interesting question. I'm afraid I can't give a definitive answer, as it depends on the dataset, the types of models under consideration, and the practices and habits of the analyst.

First, it's important to understand the purpose of each set:

- Training set: used for the actual training of the model(s).

- Validation set: used for model optimization (for example hyperparameter tuning or feature/threshold selection) and for model selection.

- Test set: used to assess the generalization and predictive performance of the selected model on new, unseen data.

There are several ways to split your data, depending on your objectives and the size of your dataset:

- Train/validation/test sets: fixed sets used to train, optimize, and assess model performance. Recommended for larger datasets.

- K-fold cross-validation: the dataset is split into K folds and the model is trained K times. Each fold is used K-1 times for training and once for validation. This lets you assess model robustness, as performance should be similar across all folds.

- Leave-one-out cross-validation: the extreme case of K-fold cross-validation, where K = N (the number of observations). It is used when you have a small dataset and want to assess whether your model is robust.

- Autovalidation/Self Validating Ensemble Model: instead of assigning observations to different sets, you give each observation a weight for training and a weight for validation (a larger training weight implies a smaller validation weight, so the observation is used mainly for training and less for validation), and then repeat the procedure with varying weights. It is used for very small datasets, and/or datasets where observations can't be split independently between sets. For example, in Design of Experiments the set of runs can be based on a model; in that case you can't split runs independently between training and validation without biasing the model, because the runs needed to estimate the parameters won't all be available, which dramatically reduces the performance of the model.
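To make the K-fold and leave-one-out descriptions above concrete, here is a minimal sketch in plain Python. It is not how JMP builds its validation column; the function name `kfold_indices` and the round-robin way of dealing rows into folds are assumptions chosen to keep the example short.

```python
import random


def kfold_indices(n_rows, k, seed=0):
    """Yield (train_idx, valid_idx) pairs for k-fold cross-validation."""
    if not 2 <= k <= n_rows:
        raise ValueError("k must be between 2 and n_rows")
    rows = list(range(n_rows))
    random.Random(seed).shuffle(rows)
    # Deal rows round-robin so fold sizes differ by at most one.
    folds = [rows[i::k] for i in range(k)]
    for i, valid in enumerate(folds):
        train = [r for j, fold in enumerate(folds) if j != i for r in fold]
        yield sorted(train), sorted(valid)


# 4 folds over 8 rows: count how often each row is validated and trained on.
splits = list(kfold_indices(8, 4))
valid_counts = [sum(r in valid for _, valid in splits) for r in range(8)]
train_counts = [sum(r in train for train, _ in splits) for r in range(8)]
print(valid_counts)
print(train_counts)

# Leave-one-out is the special case k = n_rows.
loo = list(kfold_indices(8, 8))
print(len(loo), [len(valid) for _, valid in loo])
```

Each row ends up in the validation fold exactly once and in the training folds K-1 times, and setting K to the number of rows leaves a single row out per fit.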

All of these approaches are supported in JMP: Launch the Make Validation Column Platform (jmp.com)

As a rule of thumb, a 70/20/10 ratio is often used. The paper "Optimal Ratio for Data Splitting" has more details. Generally, the more parameters the model has, the larger your training set needs to be, since you need more data to estimate each parameter precisely. The complexity and type of model are therefore also something to consider when creating training, validation, and test sets.
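As an illustration of what a 70/20/10 split amounts to, here is a small sketch that builds a random validation column in plain Python. The function name `make_validation_column`, the label strings, and the rule that any rounding remainder goes to the test set are all assumptions of this example, not a description of the JMP platform.

```python
import random


def make_validation_column(n_rows, ratios=(0.7, 0.2, 0.1), seed=0):
    """Return one label per row, assigned at random in the given ratios."""
    if abs(sum(ratios) - 1.0) > 1e-9:
        raise ValueError("ratios must sum to 1")
    n_train = round(n_rows * ratios[0])
    # Guard against rounding pushing the total past n_rows.
    n_valid = min(round(n_rows * ratios[1]), n_rows - n_train)
    n_test = n_rows - n_train - n_valid  # remainder goes to the test set
    labels = ["Training"] * n_train + ["Validation"] * n_valid + ["Test"] * n_test
    random.Random(seed).shuffle(labels)
    return labels


column = make_validation_column(100)
print({name: column.count(name) for name in ("Training", "Validation", "Test")})
```

Changing `ratios` to, say, `(0.6, 0.2, 0.2)` gives the other splits mentioned in the original question.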

If you have a more specific use case, sharing it would let us give less general, more helpful guidance.

I also highly recommend the playlist "Making Friends with Machine Learning" by Cassie Kozyrkov to learn more about model training, validation, and testing: Making Friends with Machine Learning - YouTube

I hope this first answer helps,

"It is not unusual for a well-designed experiment to analyze itself" (Box, Hunter and Hunter)

Re: CROSS VALIDATION - VALIDATION COLUMN METHOD

Thank you for providing such detailed input on splitting data sets in machine learning. I am sure this will help in my research.

Sreekumar Punnappilly

Re: CROSS VALIDATION - VALIDATION COLUMN METHOD

You're welcome @sreekumarp.

If one or more of these answers solved your question, don't hesitate to mark them as solutions, to help visitors to the JMP Community find the answers they are looking for more easily.

If you have more questions, or a concrete case on which you would like some advice, don't hesitate to reply in this topic or create a new one.

"It is not unusual for a well-designed experiment to analyze itself" (Box, Hunter and Hunter)

Re: CROSS VALIDATION - VALIDATION COLUMN METHOD

Thanks.

There are actually two points to be clarified for K-fold cross-validation (CV) and leave-one-out (LOO) validation using routine regression in JMP Pro.

Both methods are directly available in the PLSR (partial least-squares regression) part, but it's unclear what to do, and how to visualize the metrics, when CV and/or LOO are applied to a simple regression. For example, I'm analyzing spectra, and PCR and PLSR are routinely involved in this chemometric process.

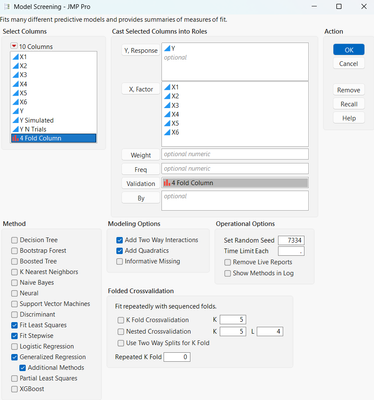

I've had a somewhat strange experience, though. After I create a 4-fold validation column (above, 4 folds across 72 observations) and run PCR (numeric response, with principal components as predictors), I receive the following error response:

Hence, no real CV is performed, right?

The second point: how do I build a LOO validation (a column?) for PCR?

On the whole, please advise me on what has to be done to properly perform cross-validation and leave-one-out validation for a typical regression and get proper fit metrics. It's really important and not yet obvious to me.

Thanks a lot, colleagues!

Re: CROSS VALIDATION - VALIDATION COLUMN METHOD

Hi @Nazarkovsky,

It would probably have been easier to start a new post instead of replying to this old one, as that would have given your question more visibility and let other JMP users join the discussion.

Yes, JMP modeling platforms sometimes raise errors when given a fixed K-fold cross-validation column, as JMP mostly recognizes 2 levels (training and validation or test) or 3 levels (training, validation, and test) in a validation column. In the case shown in your error message, no CV is done; a linear model is fit on all your data.

Since you're using a very simple linear model, you could try the Model Screening platform (which also includes PLS, if you want to compare the results of the different models). Specify your X's, your response Y, your 4-fold validation column (or do the cross-validation directly from the platform), and the type of model you want to fit (linear regression model options plus modeling options):

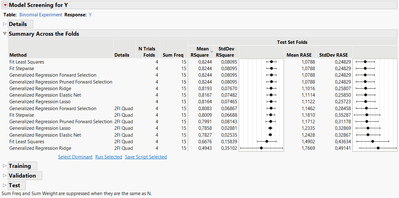

Or, using the K-fold cross-validation option directly from the platform, you can obtain these results:

If you want to run leave-one-out cross-validation, simply specify K = n (n being the number of runs in your table). If you want access to the results of individual folds, you can adapt the solution I provided here to your modeling platform: Accessing out of fold metrics for K-Fold CV

Finally, I see at least one major problem in your modeling workflow that could lead to data leakage: you need to apply the cross-validation, or whatever splitting/validation strategy you use, to your principal component analysis too. If you don't, the PCA sees the entire dataset and learns the correlations between the factors from all of it, so the linear model you fit afterwards (and evaluate through cross-validation) benefits from information from the entire dataset, not just from 3 of the 4 folds. JMP hasn't implemented a validation role for PCA (yet!), which is why I added this Wishlist item, to prevent data leakage and possible errors in modeling workflows like yours: Add validation role option in Principal Component Analysis platform

To summarize, you should:

- Define and apply a validation strategy (CV, LOO, or a standard train/validation or train/validation/test split).

- Preprocess the data using the training set only (or preprocess the data several times, once per set of training folds).

- Fit a model using the same validation strategy on the preprocessed data from the training set (or from the corresponding training folds).
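The three steps above can be sketched outside JMP in a few lines of plain Python. To keep the example short, the preprocessing step is a simple standardization standing in for PCA, and the model is a one-predictor least-squares line; both substitutions, the function names, and the synthetic data are assumptions made for illustration. The point is only the ordering: the preprocessing parameters are learned from the training folds and then reused unchanged on the held-out fold.

```python
import random
import statistics


def fit_line(xs, ys):
    """Ordinary least squares for y = a + b * x with one predictor."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return my - slope * mx, slope


def cross_validated_predictions(xs, ys, k=4, seed=0):
    """Return one out-of-fold prediction per row."""
    # Step 1: define the validation strategy (here, k random folds).
    rows = list(range(len(xs)))
    random.Random(seed).shuffle(rows)
    folds = [rows[i::k] for i in range(k)]
    preds = [None] * len(xs)
    for i, valid in enumerate(folds):
        train = [r for j, fold in enumerate(folds) if j != i for r in fold]
        # Step 2: learn the preprocessing from the training folds only.
        mean = statistics.fmean(xs[r] for r in train)
        sd = statistics.stdev(xs[r] for r in train)
        x_train = [(xs[r] - mean) / sd for r in train]
        # Step 3: fit on the preprocessed training folds.
        intercept, slope = fit_line(x_train, [ys[r] for r in train])
        # Held-out rows reuse the training-fold mean and sd unchanged.
        for r in valid:
            preds[r] = intercept + slope * (xs[r] - mean) / sd
    return preds


# Synthetic data, invented for this example: y = 2x + 1 plus noise.
rng = random.Random(1)
xs = [float(i) for i in range(72)]
ys = [2.0 * x + 1.0 + rng.gauss(0, 1) for x in xs]
preds = cross_validated_predictions(xs, ys, k=4)
rmse = (sum((p - y) ** 2 for p, y in zip(preds, ys)) / len(ys)) ** 0.5
print(round(rmse, 3))
```

The `preds` list is effectively a column of out-of-fold predictions, one per row, from which a cross-validated RMSE (or any other metric) can be computed.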

Hope this answer will help you,

"It is not unusual for a well-designed experiment to analyze itself" (Box, Hunter and Hunter)

Re: CROSS VALIDATION - VALIDATION COLUMN METHOD

Anyway, the k-fold cross-validation section of JMP is still weak. I'm curious why this doesn't concern the developers, as this validation method is a primary tool for a preliminary assessment of any model, especially with few data points. Moreover, in the option for building a K-fold CV column, it's impossible to build a 3-fold cross-validation; the minimum value is 4.

I also don't like JMP's report of the CV metrics, since there is no final option/button to build a 'Predicted' column after all the runs, as an average result, to compare with the predicted values delivered by Generalized Regression or PLSR.

As for validation of PCA, this option exists in Unscrambler, a traditional software package for chemometrics (multivariate analysis of chemical data, such as spectra). Yes, the validation is scanned across the pre-set numbers of components vs. explained variance. For this leakage test I use Unscrambler, aha.

There's a series of JMP posts here in the Community dedicated to the analysis of spectra, but they are still short on comprehensive discussion of CV and PCR.

However, in chemometrics, SVR (support vector regression), PLSR, and PCR are always applied and their metrics are compared.

As for LOO, thanks; it really works properly.

Re: CROSS VALIDATION - VALIDATION COLUMN METHOD

Hi @sreekumarp,

In addition to what @Victor_G wrote, I would also highly recommend that you split off your Test data set and make a new data table with it. That way when you train and validate your models, you can compare them on the test holdout data table to see which model performs the best. This reduces any chance that the test data set could accidentally be used in the training or validation sets.

There are also other ways that you can use simulated data to train your models and then test the models on the real data. I sometimes use this approach when the original data set is small and I need to keep the correlation structure of the inputs.

Good luck!

DS

Re: CROSS VALIDATION - VALIDATION COLUMN METHOD

Hi @SDF1,

One minor correction to your great addition: the validation set, not the test set, is the one used for model comparison and selection (although the names "test" and "validation" are sometimes used interchangeably or confusingly).

These two sets have very different purposes:

- The test set is the holdout part of the data, not used until a model has been selected, in order to provide an unbiased estimate of the model's generalization and predictive performance.

- The validation set is the portion of the data used for model fine-tuning and model comparison, in order to select the best candidate model.

An explanation is given here: machine learning - Can I use the test dataset to select a model? - Data Science Stack Exchange

There are two explanations for this difference in use:

- Practical: in data science competitions you don't have access to the test dataset, so to create and select the best-performing algorithm you have to split your data into training and validation sets, and "hope" for good performance (and generalization) on the unseen test set.

- Theoretical: if you use the test set to compare models, you are actually leaking information, since you can improve your results on the test set over time by selecting the best-performing algorithm on it (and then perhaps continuing to fine-tune it based on test performance, or trying other models...). Your test set is then no longer unbiased, because the choice of algorithm (and perhaps other decisions made after the selection) is based on it.

The test set is often the last step between model creation/development and deployment in production or publication, so the final assessment needs to be as fair and unbiased as possible.
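Here is a minimal sketch of that division of labor in plain Python: candidate models are fit on the training set, the choice between them is made on the validation set, and the test set is evaluated once at the very end. The two candidate models, the helper names, the 70/20/10 slicing, and the synthetic data are all assumptions invented for this example.

```python
import random
import statistics


def rmse(model, rows):
    """Root mean squared error of a model over (x, y) rows."""
    return (sum((model(x) - y) ** 2 for x, y in rows) / len(rows)) ** 0.5


def fit_mean(rows):
    """Candidate 1: always predict the training mean."""
    mean_y = statistics.fmean(y for _, y in rows)
    return lambda x: mean_y


def fit_line(rows):
    """Candidate 2: least-squares straight line."""
    mx = statistics.fmean(x for x, _ in rows)
    my = statistics.fmean(y for _, y in rows)
    slope = (sum((x - mx) * (y - my) for x, y in rows)
             / sum((x - mx) ** 2 for x, _ in rows))
    return lambda x: my + slope * (x - mx)


# Synthetic data, invented for this example: y = 3x plus noise.
rng = random.Random(0)
data = []
for _ in range(100):
    x = rng.uniform(0, 10)
    data.append((x, 3.0 * x + rng.gauss(0, 1)))
train, valid, test = data[:70], data[70:90], data[90:]

# Every candidate is fit on the training set only.
candidates = {"mean only": fit_mean(train), "straight line": fit_line(train)}
# Model selection uses the validation set only.
best_name = min(candidates, key=lambda name: rmse(candidates[name], valid))
# The test set is touched once, after the choice is final.
test_rmse = rmse(candidates[best_name], test)
print(best_name, round(test_rmse, 3))
```

If the test RMSE were used to pick between the candidates instead, it would stop being an unbiased estimate of performance on new data, which is the leakage described above.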

Some resources on the sets: Train, Test, and Validation Sets (mlu-explain.github.io)

MFML 071 - What's the difference between testing and validation? - YouTube

I hope this avoids any confusion in the naming and use of the sets,

"It is not unusual for a well-designed experiment to analyze itself" (Box, Hunter and Hunter)

- © 2026 JMP Statistical Discovery LLC. All Rights Reserved.
