- New to JMP? Let the Data Analysis Director guide you through selecting an analysis task, an analysis goal, and a data type. Available now in the JMP Marketplace!

- See how to install JMP Marketplace extensions to customize and enhance JMP.

Martin Demel's Blog

- JMP User Community

- :

- Blogs

- :

- Martin Demel

- :

- Updating a JMP data table from a spreadsheet made easy - Part 2

- Subscribe to RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Report Inappropriate Content

On a regular base I hear from JMP users, that they want to update the data table with the new data rather than creating a completely new data table. This can have a variety of reasons: you may have done some data cleaning or recoding already, want to keep your work and previous analysis saved to the data table, or you just simply need to add new data to the existing data table.

In Part 1 of this two-article series I talked about the first scenario - a vertical structured data in a spreadsheet. This is not too complicated, import the new data and concatenate it to the original one (considering the rows already imported).

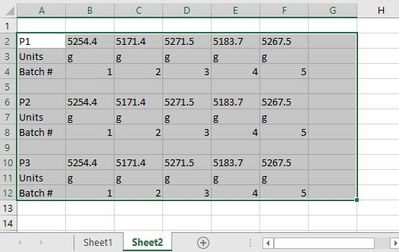

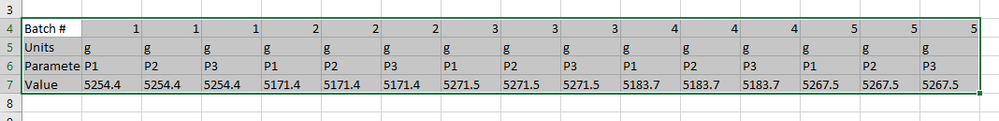

Today I want to talk about a second scenario, which is a bit more complicated. I see this case quite often when looking at customer’s data coming from e.g. labs. The horizontal structured data table:

That is first row/first column is the parameter name, in the following columns is the data. In the second row are the units, in the third row the batch number. Then an empty row and after that the same thing again for another parameter. This is easier to read in a spreadsheet but eventually not very easy to analyze. I often see these kinds of data, although it would be much better to train people to enter data in the vertical way than trying to work around with the horizontal structure.

How can you import and update a table like this in JMP? What’s so difficult with that data?

We have to understand the challenges.

- First, the data is arranged in rows, but more a challenge is that there are multiple rows showing similar information. Parameters (P1, P2, P3), Units and same Batch number repeated for each parameter

- Second, we have empty rows to divide the data for easier interpretation what belongs to what

For a data analysis this kind of structure is nothing a machine would love, you would need to specifically tell where what data is located and do the appropriate analysis. If new data is added to the right, you need to adjust the analysis’ data ranges appropriately.

Due to these challenges we need to process the data before we come to where we want to be.

Let's start to create the template table we want to update

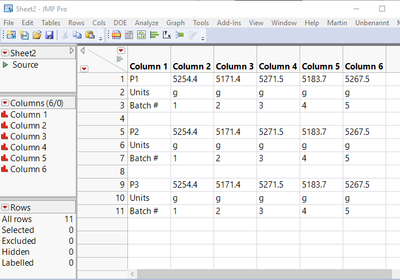

1. Access the current data from the spreadsheet as it is:

2. This is actually not the table we want to have, so we rename it to “help” and need to remove the empty rows, as preparation for transposing the data, in coding this can look like this:

dt_h1 << Set Name( name_worksheet || "help" );

// Remove rows with only missing values (break lines between measurements)

n = Loc( Column( 1 ) << Get Values, "" );

dt_h1 << Select Rows( n );

dt_h1 << Delete Rows;

- Note: I used the very useful LOC command to locate the empty rows to be deleted

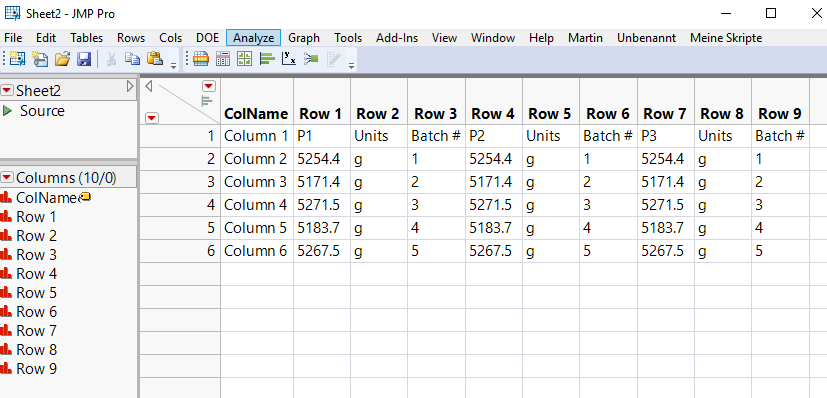

3. The first column has the actual column names we want to have in the final data. We want to save them for later usage. Then we can transpose the table, provide it the final table name (as the worksheet – as I will refer to the table name to automatically find the corresponding worksheet in the spreadsheet):

// Get names used for column names after transposing the data table

NewNames = dt_h1:Column 1 << Get Values;

// Transpose Column

dt_final = Data Table( name_worksheet || "help" ) << Transpose(

columns( 1 :: N Cols( dt_h1 ) ),

Label column name( "ColName" ),

Output Table( name_worksheet )

);

4. We now need to make some changes to the data table:

- Remove the first column

- Remove the first row

- Change the column names

- And finally set the correct data and modeling types (due to the first row all has been set to character), and save the file

- For this example also remove the last two rows so we can test the update script without adding columns to the spreadsheet

- Note: based on your system’s localization settings as well the Office language you might need to take care of the preference settings in JMP or the decimals/commas first (e.g. with recode) before changing the data and modeling type

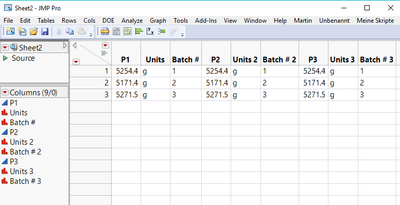

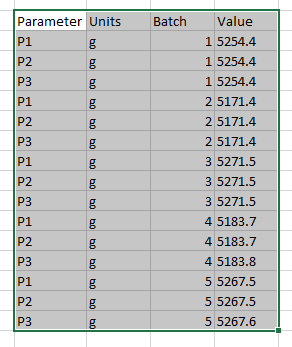

The final table should look like this:

Now let’s take care of the update script

Most of what you need has been done already and saved into scripts automatically:

- The import source script

- The transpose source script

- Some of the manipulations mentioned above with script examples

Let’s take a look at the final update script we will put into this template table as a script:

1. Import worksheet with name of the current data table

// Read in Sheet2 of the Excel workbook

excelFile = "TransposedExcelWorksheet.xlsx";

// Get the name of the data table and number of existing rows

cdt = Current Data Table();

cdtName = cdt << get name();

n_Rows = N Rows();

// Import the data invisible

dt_h1 = Open(

excelFile,

Worksheets( cdtName ),

Use for all sheets( 1 ),

Concatenate Worksheets( 0 ),

Create Concatenation Column( 0 ),

Worksheet Settings(

1,

Has Column Headers( 0 ),

Number of Rows in Headers( 1 ),

Headers Start on Row( 1 ),

Data Starts on Row( 1 ),

Data Starts on Column( 1 ),

Data Ends on Row( 0 ),

Data Ends on Column( 0 ),

Replicated Spanned Rows( 1 ),

Replicated Spanned Headers( 0 ),

Suppress Hidden Rows( 1 ),

Suppress Hidden Columns( 1 ),

Suppress Empty Columns( 1 ),

Treat as Hierarchy( 0 ),

Multiple Series Stack( 0 ),

Import Cell Colors( 0 ),

Limit Column Detect( 0 ),

Column Separator String( "-" )

),Invisible

);

2. Make modifications as described above for the template table, transpose and clean up the data table

dt_h1 << Set Name( cdtName || "help" );

// Remove rows with only missing values (break lines between measurements)

n = Loc( Column( 1 ) << Get Values, "" );

dt_h1 << Select Rows( n );

dt_h1 << Delete Rows;

// Get names used for column names after transposing the data table

NewNames = dt_h1:Column 1 << Get Values;

// Transpose Column

dt_final = Data Table( cdtName || "help" ) << Transpose(

columns( 1 :: N Cols( dt_h1 ) ),

Label column name( "ColName" ),

Output Table( cdtName )

);

// Clean up data table - delete first row of column names and first column (labels)

dt_final << Select Rows( 1 );

dt_final << Delete Rows();

Column( dt_final, "ColName" ) << Set Selected;

dt_final << Delete Columns();

// Set the Column Names appropriately based on deleted first row in this table

For( i = 1, i <= N Cols( dt_final ), i++,

col = Column( i );

col << Set Name( NewNames[i] );

);

3. Remove rows already imported and append rest to the original data table with concatenate:

// remove rows already imported

dt_final << Select Rows( 1 :: n_Rows );

dt_final << Delete Rows();

// Append new data to original data table

cdt << Concatenate( dt_final, Append to first table );

// Close help data table

Close( dt_final );

Close( dt_h1 );

// this last bracket is to close the whole script!

);

- Note: You do not have to take care of the data and modeling type, as JMP already knows how to treat the data due to the existing column properties.

Adding this script to the template data table will allow you to simply press one button to get the new data from a horizontal structured data in a spreadsheet.

Conclusion

This involved quite a bunch of steps, some easy to do, some more advanced. The nice thing is that you can do it in JMP. However, always remember:

Data preparation is what requires most time in extracting insights from your data!

If you want to work with the data, use a structure tailored for data analysis.

That is most of the time a vertical approach, where each column stands for one parameter. But also a row based approach can be done, but then please in a correct one.

To provide two examples which would ease the analysis:

- Note: adding a new column for the Parameter name removes the burden of rearranging the imported data. And you have a grouping variable you could use as an overlay or by group in the analysis platforms. This avoids running the same analysis for each column, but does it at once.

Of course you can use this and extend it by adding analysis to the data, like updating a control chart or other trend analysis. Using Windows Scheduler you might want to then update your report and send it to our new product JMP Live, so other people (not necessarily licensed JMP users) can access the reports in a web browser with the interactivity of JMP like data filter, column switcher, and so forth.

Please feel free to add your use cases and or approaches to do something similar. I’m happy to take a look at.

All the best,

Martin

PS: Three comments to the files:

- In Sheet2.jmp you will find a script for updating the data table with the attached Excel File. Both files have to be saved in the same folder (this was for simplicity, you can easily change the hard coded File Name with e.g. Pick File(), and also extract the name.

- The second script is "Remove last two rows" which allows to remove rows in the data table to update again from the Excel Sheet, without having to manually delete the rows. So all for your convenience

- The ScriptUpdateHorizontal.jsl will create the initial source table with the update script and remove last 2 rows scripts added to the data table. This script also refers to the first part of the blog, so you can follow along.

- © 2026 JMP Statistical Discovery LLC. All Rights Reserved.

- Terms of Use

- Privacy Statement

- Contact Us

You must be a registered user to add a comment. If you've already registered, sign in. Otherwise, register and sign in.