- Learn how to build custom Python data connectors and further customize JMP’s Data Connector Framework with the Python Data Connector Demo, available now in the JMP Marketplace!

- See how to use Accelerated Life Testing (ALT) to evaluate reliability. Register for June 5 webinar, 2pm US Eastern Time.

Martin Demel's Blog

- JMP User Community

- :

- Blogs

- :

- Martin Demel

- :

- JMP and Excel - A never ending story? - Part 1 - cont.

- Subscribe to RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Report Inappropriate Content

In my previous blog post JMP and Excel - A Never ending story? - Part 1 I ended with an example importing spreadsheets with hierarchical headers and merged cells. This time I focus on importing a spreadsheet file with many worksheets as well reading multiple spreadsheets (laying in a specific folder) into JMP. I do not want to make it overcomplicated, therefore I assume the structure and parameter names in the spreadsheets as well in the different worksheets are the same!

(Note: If not the same you would have to specify the settings for each workbook and import them as separate tables. Combining them afterwards could be done by using the join/concatenate features or the JMP Query Builder for JMP data tables. If you want to automate these kind of steps usually further scripting is required to check for the role for every variable, worksheet and/or file you want to read in).

MULTIPLE WORKSHEETS - IMPORT AND AUTOMATE

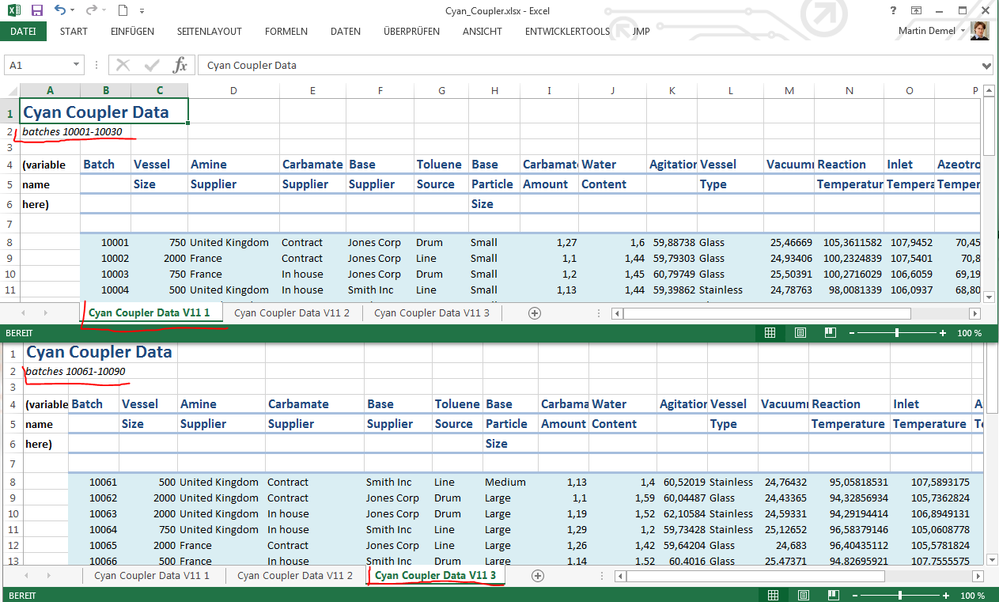

So let’s look at the data in Excel first:

One can see the different workbooks, all having the same shape and non-trivial structure. Underlined in red one can see the different batch numbers on each workbook. I want to read in the data but not in separate tables. Rather I would like to have one single table and using JMP’s Excel Import Wizard this is quite easy to achieve:

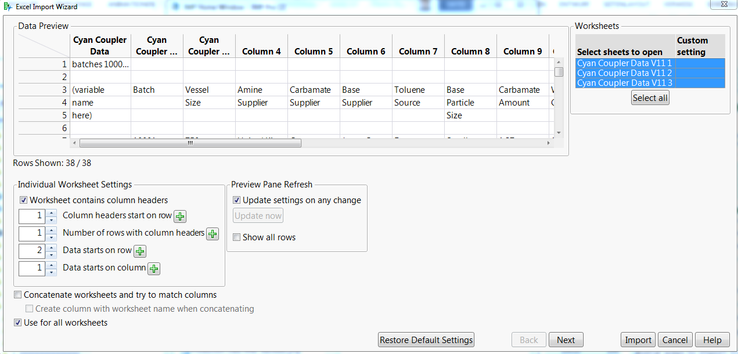

- Drag the Excel Sheet into any JMP Window will open the Excel Import Wizard:

- Make changes to the settings for the header and data fields where appropriate. We have a header we don’t want to include in the data, and the actual header starts in line 4 and spans over three rows. Then there is a row with empty fields. Therefore we start the data one line later (8 instead of 7). The first column does not have data for the analysis, that’s why we skip it.

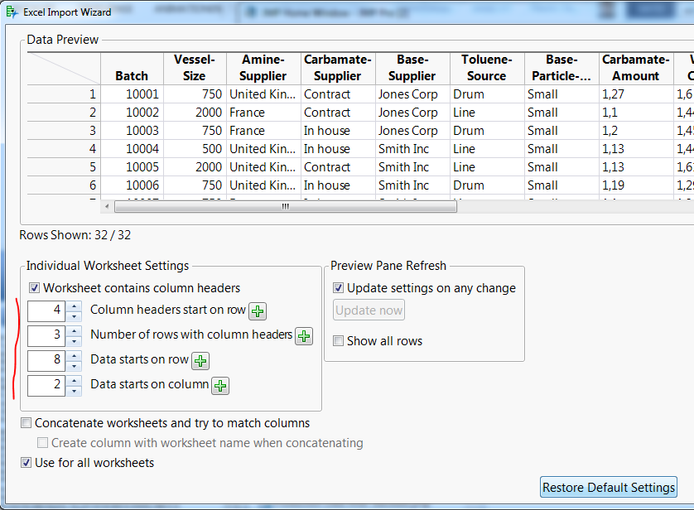

- Clicking "Next" button we can make use of Last row setting, telling that we do not want to include the time stamp information. Selecting the row where the data ends and pressing the plus (+) sign you do not have to care about what line number is the correct one.

- The remaining thing is to make use of the same structure within all the worksheets. We can check the “Use for all worksheets” as well the “Concatenate worksheets and try to match columns” marker. This will change the preview from 30/30 rows to 90/90 rows. Considering that each of the three worksheets provide 30 rows this seems to work as expected. When you concatenate, it is strongly recommended to check also the “Create column with worksheet name when concatenating” flag. Otherwise it will be hard to reflect later where the data came from.

- Last step is to import and of course always check the imported data

If you want to see this in action I can recommend this short video (using JMP 12). A note in case you just started using the Import Wizard: It will save the last setting, which may lead to surprising views on the data, when importin new, but different data. Using the button “Restore Default Settings” you can reset your settings in the wizard.

In each imported data table you’ll find a source script to redo the import with the settings you previously have chosen. This allows for reading an updated data table, in case you got new batch data in the spreadsheet. Well, when collecting data frequently I probably would consider using databases rather than spreadsheets, but that’s a different story.

AUTOMATE IMPORTING MULTIPLE SPREADSHEETS - DIFFERENT CHALLENGE

Now you know how to read one spreadsheet with multiple worksheets with the same structure into JMP. However, you may have also faced the challenge “How do I read multiple spreadsheets with the same structure into JMP without manually do this for each file, aka doing it automatically. The short answer, using JMP Scripting Language. Using the Source Script as base you can either rerun this script for each spreadsheet, or you use a for loop looping through all files in a folder. There is a script shared in the community File Exchange you could use (maybe with some adjustments), which exactly does this: Combine-multiple-txt-or-csv-files

In short you do the following steps (just parts of the script shown):

- Pick the directory and filter the files that aren’s Excel files

prefilepath = munger(Pick Directory( "Browse to the Directory of the Target Excel Files " ),1,"/",""); filepath = Convert File Path( prefilepath, Windows ); prefilelist = Files In Directory( filepath ); n2=nitems(prefilelist); //filter out any non-Excel files For( i2 = 1, n2 >= i2, i2++, file=(prefilelist[i2]); If( Item( 2, prefilelist[i2], "." ) == "xls" | Item( 2, prefilelist[i2], "." ) == "xlsx", Insert Into( filelist,file), //show(file) ) ); - Loop through the files and import them. To save memory open just two and update the first imported table with the new one, close the latter and open the next. Otherwise you may have issues on Windows because of the number of window handles used as well as of memory used (in case of many or very large spreadsheets.

-

n=nitems(filelist); //number of items in the working list cctable= New Table( "Combined data table");//make an empty table ExcelSheetName = "Sheet1"; // change this if you have a special sheet name you want to read the data from //Iterate through building the SQL string to query the Excel files For( i = 1, i < n+1, i++, filenow = ( filelist[i] ); fileopen=(filepath||filenow); dt=open(fileopen, Worksheets( ExcelSheetName ) ); dt << New Column( "File Name", formula( filenow ) ); cctable << Concatenate( Data Table( dt ), Append to first table ); Close( dt, NoSave ); );//end of for loop

So far you have read about several ways to import trivial structured data, as well how to handle hierarchical and/or merged data sets using the Excel Import Wizard in JMP. Finally I showed you ways to import multiple worksheets at once and how to automatically read multiple Excel sheets with a JSL Script. In my next post I will talk about potential challenges based on the format of the data and others.

I’m looking forward to your comments and shared experiences.

- © 2026 JMP Statistical Discovery LLC. All Rights Reserved.

- Terms of Use

- Privacy Statement

- Contact Us

You must be a registered user to add a comment. If you've already registered, sign in. Otherwise, register and sign in.