Real-life data sets usually have all kinds of traps, pitfalls, and gotchas, much like western Canadian weather, waves, and bears. How and where exactly do we start to handle these data cleaning and data preparation tasks?

Real-life data sets usually have all kinds of traps, pitfalls, and gotchas, much like western Canadian weather, waves, and bears. How and where exactly do we start to handle these data cleaning and data preparation tasks?

Several years ago, I went on a Canadian wilderness adventure with two of my good friends, Steve and Aaron, father and son, both experienced Canuck woodsman. We loaded all of our gear into Aaron’s well-worn pickup and drove straight north through the amazing fiords of British Columbia, disembarking in a remote and pristine alpine gorge with walls of aromatic evergreens surrounding a long, deep, crystal-clear lake.

As we set out on the water, a deep feeling of serenity and escape was overtaken by an adrenaline surge as the weather turned suddenly from warm and sunny to nasty, cold, hard wind and rain. Our kayak and canoe nearly capsized in the whitecaps, and we had to paddle furiously to shore. After drying out and regrouping, the next four days were spectacular; we did not see another soul the entire time, but witnessed an amazing menagerie of wild fowl and moose, savored delectable fresh kokanee pan-cooked on an open flame, cliff jumped into the icy water, and rewarmed for hours in natural hot springs. We attracted no grizzlies thanks to Aaron’s deft sealing and hanging of our thoroughly cleaned provisions well away from camp each night. The weather did catch us a few more times, and I recall shivering to the bone until finding safe shelter.

Due to a few unexpected delays while returning, Steve and Aaron hurriedly and kindly dropped me off at my Vancouver hotel downtown since my Joint Statistical Meetings talk was in the first session. Several of my colleagues were checking in at the same time, and had to do triple takes before saying "hi" since I was completely roughed out and unshaven with all of my dirty gear in tow. I don’t recall a warm shower in the room ever feeling as good as that one, and it also felt great to reengage with stat friends at the conference buoyed by a natural high from the great outdoors.

I find myself now returning from a long journey of a different sort, having made an all-in effort to explore the choppy waters between applied statistics and data science. My two-year excursion has entailed a deep dive into data science competitions at Kaggle and DREAM, and I am grateful to these organizations for setting up in such engaging formats. I would also like to extend a huge appreciation to my family, friends and co-workers during countless hours of “absence.” Quite a few folks have been encouraging me to share success stories and lessons learned, and this long-winded blog is a first attempt at this.

A natural place to begin is data cleaning and preparation. Building something worthwhile requires getting dirty and making a big mess, right kids? We then need to clean up. This is true of good data sets for sure, and even well-prepared competitions have numerous problems. Real-life data sets are usually worse, with all kinds of traps, pitfalls and gotchas, much like western Canadian weather, waves and bears. How and where exactly do we start to handle these?

Divide and conquer, exploring one step at a time. One nice new tutorial along these lines is available from the recent 5-Day Data Cleaning Challenge from Rachael Tatman at Kaggle. It’s a friendly, in-depth treatment of several the main cleaning topics using several interesting data sets (real estate, kickstarters, natural disasters, suicide bombings) as hands-on detailed examples in Python notebooks. Kudos to Rachael and colleagues for taking the time to put these together in an order and flow that makes sense.

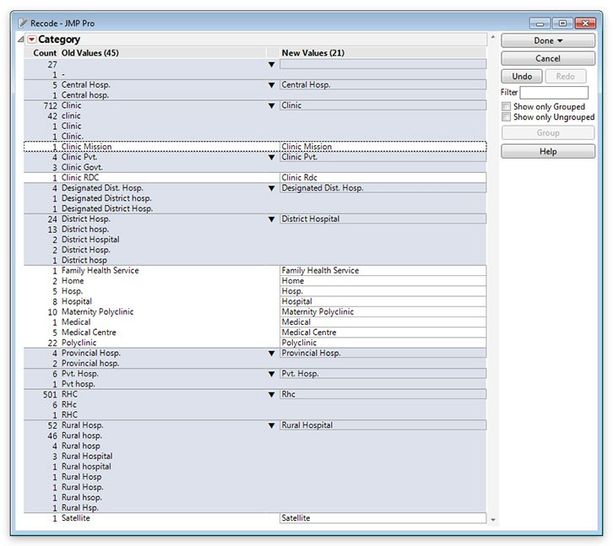

Pushing toward the destination of truly deep, effective and efficient data cleaning, I have found visual interactive exploration and functional point-and-click with a mouse to be extremely helpful. My tool of choice here is JMP. It has been my secret weapon for success in Kaggle and DREAM challenges with tabular data. It has a unique and intricate integration of graphics and statistics not found in either pure-play visual packages or data analysis languages like Python or R. With mouse-driven visual interaction and an immediately handy and rich library of analyses, I can very quickly uncover hidden problems and clean dirty data, often much more efficiently than directly writing code. Furthermore, being a thick client, JMP is instantaneously responsive to mouse actions across multiple linked graphs and built-on-the-fly dashboards, essential for uninterruptedly immersing yourself in your data and discovering sometimes nefariously embedded grime. JMP is my go-to first-look tool for any new tabular data set, even when I intend to write custom analytics code.

JMP is my go-to first-look tool for any new tabular data set, even when I intend to write custom analytics code.

JMP is my go-to first-look tool for any new tabular data set, even when I intend to write custom analytics code.

Hang on though, one big problem: Who really likes to clean anything? One glance at my desk, computer desktop, and dozens of open browser tabs reveals that it’s usually not my top priority. Can’t someone else or some AI-bot do this for us so we can get to the fun stuff of ensembling gradient boosted tree models or tuning a deep learning architecture? Alas, every data set brings its own different set of messy problems requiring thoughtful human attention. I’ve found a secret here to be strong curiosity about really understanding your data and then tidying up along the way as you discover things, all with an adventurous spirit and confidence that deep cleaning nearly always leads to better deep learning. Here again, the mouse can be mighty and even make this fun compared to taking the time and energy to always type and debug one-off code with your keyboard.

I have put together a detailed cleaning checklist that I use whenever looking at a new data set specifically for predictive modeling and hope to share that relatively soon along with examples. In addition, here are some links to some great JMP data cleaning materials from my colleagues Don McCormack, Olivia Lippincott, Rob Carver, and Trevor Bihl: