- New to JMP? Let the Data Analysis Director guide you through selecting an analysis task, an analysis goal, and a data type. Available now in the JMP Marketplace!

- See how to install JMP Marketplace extensions to customize and enhance JMP.

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

Discussions

Solve problems, and share tips and tricks with other JMP users.- JMP User Community

- :

- Discussions

- :

- Why do I get difference betwen excel and jmp when doing least square regression?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Get Direct Link

- Report Inappropriate Content

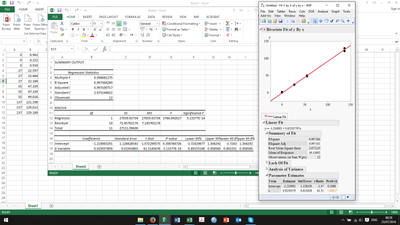

Why do I get a difference between Excel and JMP when doing least squares regression?

Hi,

I'm doing a basic least squares regression on 12 points (4 points in triplicate), but I get different results from Excel and JMP. Can anyone offer an explanation? Thanks

| Result | Excel | JMP |
|---|---|---|
| Intercept | -1.30885 | -1.210003 |
| Slope | 0.926018 | 0.9250579 |
| UCL (99.7%) at intercept | 3.110484 | 10.0827... |
| R² | 0.9973367 | 0.997364 |

for the data below:

| X | Y |
|---|---|
| 0 | 0.942 |
| 0 | 0.152 |
| 0 | 0.918 |
| 27 | 22.597 |
| 27 | 23.464 |
| 27 | 22.184 |
| 55 | 47.329 |
| 55 | 47.329 |
| 55 | 49.018 |
| 137 | 121.108 |
| 137 | 129.013 |
| 137 | 129.189 |
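
As a cross-check that depends on neither tool, the fit can be recomputed from the twelve points exactly as posted. The sketch below uses the textbook closed-form least squares formulas in plain Python; the function and variable names are illustrative and are not taken from Excel or JMP.

```python
# Ordinary least squares fit of y = intercept + slope * x,
# using the twelve (X, Y) points exactly as posted above.
xs = [0, 0, 0, 27, 27, 27, 55, 55, 55, 137, 137, 137]
ys = [0.942, 0.152, 0.918, 22.597, 23.464, 22.184,
      47.329, 47.329, 49.018, 121.108, 129.013, 129.189]


def fit_line(xs, ys):
    """Return (intercept, slope, r_squared) for a simple linear fit."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    # Centered sums of squares and cross-products.
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    syy = sum((y - y_bar) ** 2 for y in ys)
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    r_squared = sxy ** 2 / (sxx * syy)
    return intercept, slope, r_squared


intercept, slope, r_squared = fit_line(xs, ys)
print(f"intercept = {intercept:.6f}")
print(f"slope     = {slope:.6f}")
print(f"R^2       = {r_squared:.6f}")
```

On the values as typed here, this gives an intercept of about -1.2100, a slope of about 0.92506 and an R² of about 0.99736, which is the JMP column of the results table. That suggests the Excel sheet held slightly different numbers, rather than the two tools using different least squares methods.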

Accepted Solutions

Re: Why do I get a difference between Excel and JMP when doing least squares regression?

hi johnhart1,

I would guess you made a typo or missed an observation. I get the same results.

best,

ron

Re: Why do I get a difference between Excel and JMP when doing least squares regression?

Quick addendum: I get the same result as Excel when using the JMP Excel plug-in, but a different one when entering the numbers directly into JMP!

Re: Why do I get a difference between Excel and JMP when doing least squares regression?

Friends don't let friends use Excel for statistics! There are many scholarly articles available about the statistical problems with Excel. Microsoft knows about them, yet won't fix them. Let the consumer beware.

Re: Why do I get a difference between Excel and JMP when doing least squares regression?

In this case, my guess is that the data in Excel has greater precision than the 3 decimal places shown in the original post.
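
One way to gauge this guess: in a least squares fit the intercept is a fixed weighted sum of the Y values, so hidden digits beyond the third decimal can move it only by a bounded amount. The sketch below computes that bound, assuming the X values are exact and each Y in Excel differs from the posted value by at most 0.0005. Both are assumptions, as is every name in the code.

```python
# X values as posted in the original question.
xs = [0, 0, 0, 27, 27, 27, 55, 55, 55, 137, 137, 137]

n = len(xs)
x_bar = sum(xs) / n
sxx = sum((x - x_bar) ** 2 for x in xs)

# intercept = sum(w_i * y_i), with weights that depend only on the X values.
weights = [1 / n - x_bar * (x - x_bar) / sxx for x in xs]

# Assumption: rounding to 3 decimals changes each Y by at most 0.0005.
max_rounding_error = 0.0005
max_intercept_shift = max_rounding_error * sum(abs(w) for w in weights)

# Gap between the posted Excel and JMP intercepts.
observed_gap = abs(-1.30885 - (-1.210003))

print(f"largest possible shift from rounding: {max_intercept_shift:.6f}")
print(f"observed intercept gap:               {observed_gap:.6f}")
```

Under those assumptions the bound comes out below 0.001, while the posted intercepts differ by roughly 0.1, so extra decimal places alone would probably not account for the gap. A mistyped or missing observation looks like the more plausible explanation.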

Re: Why do I get a difference between Excel and JMP when doing least squares regression?

Hi,

I checked that and changed all the data to have exactly the same number of decimal places.

Re: Why do I get a difference between Excel and JMP when doing least squares regression?

Hmm, I get the same answer as well now... something strange is going on somewhere.

Anyway! Thanks for the help!
