- Learn how to build custom Python data connectors and further customize JMP’s Data Connector Framework with the Python Data Connector Demo, available now in the JMP Marketplace!

- See how to use Accelerated Life Testing (ALT) to evaluate reliability. Register for June 5 webinar, 2pm US Eastern Time.

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

Discussions

Solve problems, and share tips and tricks with other JMP users.- JMP User Community

- :

- Discussions

- :

- Scripting to interpolate missing time series weather data?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Get Direct Link

- Report Inappropriate Content

Scripting to interpolate missing time series weather data?

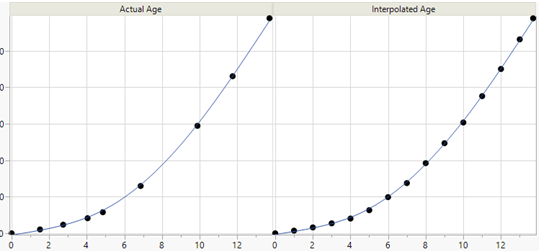

With hourly interval weather data there are occasional missing data points (missed reads). I’d like to interpolate missing data point(s) – temperature, humidity, barometric pressure typically change gradually during a day. I’d like to interpolate between the last and next time-series value in a column to get the missing value(s) to clean the data. Imputation using the “Explore Missing Values” provides meaningless results looking across rows, I need a simple way to fill in the missing points in columns for use with other calculations like heating degree days, cooling degree days, etc.

Conceptually the logic seems straightforward: when encountering a missing value in the next row of a column, then count how many missing data points until the next value, then interpolate based on the number of missing data points. The logic seems to be: check a column for missing data, with missing data then count the missing intervals, then fill in the missing intervals with (next value - last value / number of missing intervals) added to the last value.

Anyone with better scripting expertise than I have a solution?

Has this already been solved? Or is there functions already built into JMP?

Accepted Solutions

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Get Direct Link

- Report Inappropriate Content

Re: Scripting to interpolate missing time series weather data?

hi jthornton1,

in the past i did something similar to what you mention. it is not the fastest or simplest but it could do the job.

once you have a duration column and a difference column (and the time you had from the source) you can complicate the imputation formula as much as you like.

Names Default To Here( 1 );

// open original data set

dt = Open( "$SAMPLE_DATA/Time Series/CO2.jmp" );

// delete about 25 random rows

For( i = 1, i <= 25, i++,

x = Random Integer( 161 );

dt:co2[x] = .;

);

// at this point you just have a data with missing values.

// add a column for time - if you have one you do not need it.

dt << New Column( "t" );

For Each Row( :t = Row() );

// here is where you start

// subset the original table and delete the rows with missing values.

dtSub = dt << Subset( All rows, columns( :CO2, :t ) );

dtSub << delete rows( dtSub << get rows where( Is Missing( :co2 ) ) );

// introduce the duration column

dtSub << New Column( "Duration" );

For Each Row( :Duration = Lag( :t, -1 ) - :t );

// introduce the change in co2 from row to row.

dtSub << New Column( "co2 change" );

For Each Row( :Name( "co2 change" ) = Lag( :Name( "co2" ), -1 ) - :Name( "co2" ) );

// update the duration and difference to the original table;

dt << update( with( dtSub ), Match Columns( :t = :t ) );

// close the subset table

Close( dtSub, no save );

// fill the missing rows - need for the next step

For Each Row( :Duration = If( Is Missing( :Duration ), Lag( :Duration, 1 ), :Duration ) );

For Each Row( :Name( "co2 change" ) = If( Is Missing( :Name( "co2 change" ) ), Lag( :Name( "co2 change" ), 1 ), :Name( "co2 change" ) ) );

// now you can fill in the missing values with a formula into a new column or in to the existing one

dt << New Column( "co2 full" );

For Each Row(

:Name( "co2 full" ) = If(

Row() == 1, :CO2,

:Duration == 1, :CO2,

:Duration > 1, Lag( :Name( "co2 full" ), 1 ) + (:Name( "co2 change" ) / :Duration)

)

);

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Get Direct Link

- Report Inappropriate Content

Re: Scripting to interpolate missing time series weather data?

Regarding Ron's suggestion above, I just wanted to pint out that 'RandomIndex()' will remove the uncertainty about how many values have been decimated. So the first few lines become:

dt = Open( "$SAMPLE_DATA/Time Series/CO2.jmp" );

// Set n CO2 values to missing

n = 25;

dt:CO2[RandomIndex(NRows(dt), n)] = .;

Regarding the method in general, then please see also: https://community.jmp.com/message/217292#217292

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Get Direct Link

- Report Inappropriate Content

Re: Scripting to interpolate missing time series weather data?

A lot of the comments seem to have ignored that you have time series data. Presuming your data exhibits autocorrelation you can use the JSL function INTERPOLATE. Or SPLINE SMOOTH if you need nonlinear interpolation.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Get Direct Link

- Report Inappropriate Content

Re: Scripting to interpolate missing time series weather data?

Hi, jthornton1!

I usually start to worry when my logic seems straighforward!

Your interpolation idea would seem to suffer some extreme bias if 24 straight hours were missing in a day. I assume your interpolation of temperature from midnight to midnight, for example, would miss the warming effects of the Sun during the day. Even with only a few points missing, the method you propose (called Single Imputation) doesn't properly reflect the uncertainty about the predictions of the unknown missing values, and the variances of the parameter estimates from any analysis of those data will be incorrectly biased toward zero. This is a problem even if you use some statistic (mean, median, etc.) from each time period of the data that are complete.

I think the current state-of-the-art for an approach to deal with your issue is Multiple Imputation. Instead of filling in a single value for each missing datum, multiple imputation replaces each missing value with a set of plausible values that represent the uncertainty about the correct value to impute. The imputed data sets are each analyzed using standard procedures for complete data and the results from these analyses are subsequently combined. Multiple imputation doesn't attempt to estimate each missing value in and of itself, but instead attempts to represent a random sample of plausible missing values. This process results in statistical inferences that more properly reflect the uncertainty due to missing values. For example, you can get justifiably valid confidence intervals for parameters.

SAS has some procedures that implement Multiple Imputation very effectively. JMP integrates well with SAS. Good luck!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Get Direct Link

- Report Inappropriate Content

Re: Scripting to interpolate missing time series weather data?

hi jthornton1,

in the past i did something similar to what you mention. it is not the fastest or simplest but it could do the job.

once you have a duration column and a difference column (and the time you had from the source) you can complicate the imputation formula as much as you like.

Names Default To Here( 1 );

// open original data set

dt = Open( "$SAMPLE_DATA/Time Series/CO2.jmp" );

// delete about 25 random rows

For( i = 1, i <= 25, i++,

x = Random Integer( 161 );

dt:co2[x] = .;

);

// at this point you just have a data with missing values.

// add a column for time - if you have one you do not need it.

dt << New Column( "t" );

For Each Row( :t = Row() );

// here is where you start

// subset the original table and delete the rows with missing values.

dtSub = dt << Subset( All rows, columns( :CO2, :t ) );

dtSub << delete rows( dtSub << get rows where( Is Missing( :co2 ) ) );

// introduce the duration column

dtSub << New Column( "Duration" );

For Each Row( :Duration = Lag( :t, -1 ) - :t );

// introduce the change in co2 from row to row.

dtSub << New Column( "co2 change" );

For Each Row( :Name( "co2 change" ) = Lag( :Name( "co2" ), -1 ) - :Name( "co2" ) );

// update the duration and difference to the original table;

dt << update( with( dtSub ), Match Columns( :t = :t ) );

// close the subset table

Close( dtSub, no save );

// fill the missing rows - need for the next step

For Each Row( :Duration = If( Is Missing( :Duration ), Lag( :Duration, 1 ), :Duration ) );

For Each Row( :Name( "co2 change" ) = If( Is Missing( :Name( "co2 change" ) ), Lag( :Name( "co2 change" ), 1 ), :Name( "co2 change" ) ) );

// now you can fill in the missing values with a formula into a new column or in to the existing one

dt << New Column( "co2 full" );

For Each Row(

:Name( "co2 full" ) = If(

Row() == 1, :CO2,

:Duration == 1, :CO2,

:Duration > 1, Lag( :Name( "co2 full" ), 1 ) + (:Name( "co2 change" ) / :Duration)

)

);

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Get Direct Link

- Report Inappropriate Content

Re: Scripting to interpolate missing time series weather data?

Regarding Ron's suggestion above, I just wanted to pint out that 'RandomIndex()' will remove the uncertainty about how many values have been decimated. So the first few lines become:

dt = Open( "$SAMPLE_DATA/Time Series/CO2.jmp" );

// Set n CO2 values to missing

n = 25;

dt:CO2[RandomIndex(NRows(dt), n)] = .;

Regarding the method in general, then please see also: https://community.jmp.com/message/217292#217292

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Get Direct Link

- Report Inappropriate Content

Re: Scripting to interpolate missing time series weather data?

The simplest solution might be to use the Fit-Y-By-X platform if the missing data is occasional. If you put you time column as X (needs to be numeric continuous) and the weather variables as Y. You can then fit a spline from the hotspot menu (you will need to experiment with the value of Change Lambda to use).

From the spline hotspot pick save predicted. These new columns will have the missing values filled in which you can do you calculations on (or you could do a bit of scripting to use the new value only where the originals are missing). Since weather data is often a gradual change this should give reasonable imputation.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Get Direct Link

- Report Inappropriate Content

Re: Scripting to interpolate missing time series weather data?

....how about scripting the spline fit?

G

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Get Direct Link

- Report Inappropriate Content

Re: Scripting to interpolate missing time series weather data?

Just because you can do something doesn't mean you should!

It's still unclear exactly what information you wish to make from your data, but if you perform any inference (hypothesis testing, confidence intervals, etc.) on the data with the single imputations, you should be aware that you have biased all the variances of the parameter estimates towards zero, affecting the quality of any inferences subsequently performed on the data.

Perhaps no inference will ever be done on these data and it's not an issue for you. In that case, impute however you wish.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Get Direct Link

- Report Inappropriate Content

Re: Scripting to interpolate missing time series weather data?

A lot of the comments seem to have ignored that you have time series data. Presuming your data exhibits autocorrelation you can use the JSL function INTERPOLATE. Or SPLINE SMOOTH if you need nonlinear interpolation.

Recommended Articles

- © 2026 JMP Statistical Discovery LLC. All Rights Reserved.

- Terms of Use

- Privacy Statement

- Contact Us