Prediction profiller and simulation

Re: Prediction profiller and simulation

The desirability functions and optimization are explained here.
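For readers without the linked page, here is a minimal Python sketch of the general idea, not JMP's implementation. It assumes a simple linear "larger is better" ramp (JMP's own desirability curves are smooth piecewise functions) and combines the individual desirabilities with a geometric mean, which is how overall desirability is commonly defined. The response names, limits, and values are hypothetical.

```python
import math

def desirability_maximize(y, low, high):
    """Larger-is-better desirability: 0 at or below `low`, 1 at or above `high`."""
    if y <= low:
        return 0.0
    if y >= high:
        return 1.0
    return (y - low) / (high - low)

def overall_desirability(values):
    """Geometric mean of the individual desirabilities.

    A single zero makes the overall desirability zero.
    """
    if any(d == 0.0 for d in values):
        return 0.0
    return math.exp(sum(math.log(d) for d in values) / len(values))

# Hypothetical responses and limits, for illustration only.
d_yield = desirability_maximize(85.0, low=70.0, high=90.0)
d_purity = desirability_maximize(96.0, low=90.0, high=98.0)
overall = overall_desirability([d_yield, d_purity])
print(d_yield, d_purity, overall)
```

The optimizer then searches for the factor settings that maximize this single overall number.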

Re: Prediction profiller and simulation

The general description is:

Optimization Algorithm

Optimization of the overall desirability function, or of the single desirability function if there is only one response, is conducted as follows.

- For categorical factors, a coordinate exchange algorithm is used.

- For continuous factors, a gradient descent algorithm is used.

- In the presence of constraints or mixture factors, a Wolfe reduced-gradient approach is used.

A detailed description is omitted because JMP software is proprietary.
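Since the details are not published, the following is only a generic Python sketch of the continuous-factor case: a plain gradient search that climbs a desirability surface within factor bounds. Everything here is an assumption for illustration, including the toy surface, the step size, the stopping rule, and the function names. It is not JMP's algorithm.

```python
def numerical_gradient(f, x, h=1e-6):
    """Central-difference estimate of the gradient of f at x."""
    grad = []
    for i in range(len(x)):
        up = list(x)
        down = list(x)
        up[i] += h
        down[i] -= h
        grad.append((f(up) - f(down)) / (2 * h))
    return grad

def gradient_ascent(f, start, bounds, step=0.1, tol=1e-10, max_iter=10000):
    """Climb f from `start`, clipping each factor to its (low, high) bounds."""
    x = list(start)
    for _ in range(max_iter):
        g = numerical_gradient(f, x)
        new_x = [
            min(max(xi + step * gi, lo), hi)
            for xi, gi, (lo, hi) in zip(x, g, bounds)
        ]
        # Stop when the objective no longer improves by more than `tol`.
        if abs(f(new_x) - f(x)) < tol:
            return new_x
        x = new_x
    return x

def toy_desirability(x):
    """Hypothetical smooth surface with a single peak at (0.3, -0.2)."""
    return 1.0 - (x[0] - 0.3) ** 2 - (x[1] + 0.2) ** 2

best = gradient_ascent(
    toy_desirability, start=[0.0, 0.0], bounds=[(-1.0, 1.0), (-1.0, 1.0)]
)
print(best, toy_desirability(best))
```

On a surface with one peak, any starting point leads to the same answer. The behavior discussed later in this thread arises when the surface has more than one peak or is very flat near the optimum.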

Re: Prediction profiller and simulation

I see. I am trying to understand the black box behind the simulation because I ran into a strange situation when I ran the simulation experiment many times, run after run. Each time, JMP suggests different factor levels with a smaller defect rate. The more simulation runs I make, the better the defect rate it suggests. I don't understand these unstable optimization solutions. Why doesn't it suggest the globally optimal factor levels, and how many times do I have to rerun the simulation to get the global optimum?

Re: Prediction profiller and simulation

There are two primary reasons for such behavior. The first reason is that there is, in fact, more than one optimum solution. This often happens in simple systems but can happen in complex systems, too. The optimizer returns only one of the solutions in this case. It might find another if you change the starting position of all the factors.

The second reason is that the optimization by any numerical method depends on convergence criteria. The criteria determine when the current values are 'good enough.' Change the criteria and the solution will likely change.
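To make the first reason concrete, here is a small Python sketch, assuming a hypothetical one-factor surface with two peaks of different height. The same hill-climbing routine ends at a different peak depending on where it starts, and only a comparison across several starts reveals the higher one. The surface, step size, and starting points are all made up for illustration.

```python
import math

def surface(x):
    """Hypothetical objective with a lower peak near x = -1 and a higher one near x = +1."""
    return 0.8 * math.exp(-4 * (x + 1) ** 2) + 1.0 * math.exp(-4 * (x - 1) ** 2)

def climb(f, x, step=0.05, tol=1e-9, max_iter=10000, h=1e-6):
    """Simple gradient ascent in one dimension using a numerical slope."""
    for _ in range(max_iter):
        slope = (f(x + h) - f(x - h)) / (2 * h)
        move = step * slope
        if abs(move) < tol:
            break
        x += move
    return x

starts = [-1.5, -0.5, 0.5, 1.5]
results = [climb(surface, s) for s in starts]
for s, r in zip(starts, results):
    print(f"start {s:+.1f} -> x = {r:+.3f}, value = {surface(r):.3f}")

# Keeping the best result across all starts is the usual multi-start remedy.
best = max(results, key=surface)
print("best over all starts:", round(best, 3))
```

Starts on the left settle on the lower peak and starts on the right settle on the higher one, so a single run can report a solution that is only locally best.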

Re: Prediction profiller and simulation

There are several ways to help achieve the optimum settings that you want or need.

Optimization Options: These are the criteria that I mentioned. You can access them from the red triangle menu when the desirability functions have been added to the profiler. Select Optimization and Desirability > Maximization Options. You will obtain a dialog in which you can change the criteria.

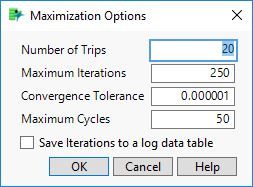

I unfortunately cannot find documentation for these options. Generally, though, increasing the Number of Trips, Maximum Iterations, or Maximum Cycles will make convergence more strict. Decreasing the Convergence Tolerance will also make the convergence more strict. I would recommend making small changes in the right direction.
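As a generic illustration of why such criteria matter, the Python sketch below runs the same gradient search on a hypothetical objective that is very flat near its optimum at x = 2, once with a loose tolerance and once with a strict one. The `tol` and `max_iter` arguments are generic stand-ins and are not claimed to correspond exactly to the JMP options named above.

```python
def objective(x):
    """Hypothetical objective, very flat around its maximum at x = 2."""
    return -((x - 2.0) ** 4)

def slope(x):
    """Exact derivative of the objective."""
    return -4.0 * (x - 2.0) ** 3

def climb(x, step=0.01, tol=1e-3, max_iter=100000):
    """Gradient ascent that stops when the proposed move is smaller than `tol`."""
    iterations = 0
    for _ in range(max_iter):
        move = step * slope(x)
        if abs(move) < tol:
            break
        x += move
        iterations += 1
    return x, iterations

loose_x, loose_iters = climb(0.0, tol=1e-3)
tight_x, tight_iters = climb(0.0, tol=1e-6)
capped_x, capped_iters = climb(0.0, tol=1e-6, max_iter=100)

print("loose tolerance :", loose_x, loose_iters)
print("strict tolerance:", tight_x, tight_iters)
print("strict, capped  :", capped_x, capped_iters)
```

The loose run stops well short of the optimum, the strict run gets much closer at the cost of many more iterations, and the capped run shows that a strict tolerance has no effect if the iteration limit is reached first.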

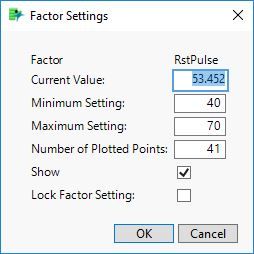

Lock Factor Settings: Sometimes it helps to select factor settings based on outside criteria. You can set and lock the setting. Control-click or Alt-click on the profile for the factor and then use this dialog:

Linear Constraints: You can define space to be excluded from the optimization using linear constraints. These represent inequalities and combinations of factor settings. See this help for more information.
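A linear constraint of this kind has the form c1*x1 + c2*x2 + ... <= bound. The short Python sketch below, with hypothetical coefficients and candidate settings, shows how such inequalities carve out the region the optimizer is allowed to search. It only checks feasibility and does not reproduce how JMP handles constraints internally.

```python
def satisfies(constraints, settings):
    """Return True if every constraint sum(c_i * x_i) <= bound holds.

    `constraints` is a list of (coefficients, bound) pairs.
    """
    return all(
        sum(c * x for c, x in zip(coeffs, settings)) <= bound
        for coeffs, bound in constraints
    )

# Hypothetical constraints on two factors:
#   x1 + x2 <= 1.5
#   x1 - x2 <= 0.5
constraints = [([1.0, 1.0], 1.5), ([1.0, -1.0], 0.5)]

candidates = [[0.5, 0.5], [1.0, 1.0], [1.0, 0.2]]
feasible = [c for c in candidates if satisfies(constraints, c)]
print(feasible)
```

Only the first candidate satisfies both inequalities; the second violates the sum constraint and the third violates the difference constraint.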

Note that you can save the fitted model as a new column prediction formula. Then you can launch the Prediction Profiler on its own from Graph > Profiler without fitting the model again.

Re: Prediction profiller and simulation

I set Number of Trips = 40, Maximum Iterations = 250, Maximum Cycles = 100, and Convergence Tolerance = 0.00000001, but it still does not find the best optimization solution.

Worse, even when I rerun the simulations, I can no longer reach the best defect rate of 8% that JMP suggested earlier, after three reruns. Luckily, I had saved that solution.

I think that JMP runs the simulations at the initial factor levels. After I maximize desirability, JMP takes the settings it suggested in the first step as the new starting point for the optimization. If JMP does not start from the right starting point, it never finds the best solution.

(By the way, I also ran the simulation experiment after changing the portion of the factor space to experiment with to 1, but again I could not reach the best solution.)
