Prediction formula null values

Hello,

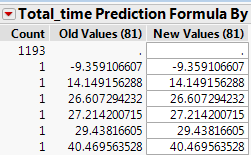

After saving my prediction formula from GenReg, I'm seeing null values (.) in the predicted values column for about 80 percent of the rows in my dataset. I suspect it has something to do with the several "levels removed" in some of my significant predictors. Any advice on how to handle this, or how to pivot from it to get a higher yield of predicted values, would be appreciated. Thank you :)

Re: Prediction formula null values

@samalar,

Can you share a reproducible example, so people can try to step through your workflow?

You don't have to share any confidential data - you can either anonymize your data or use the sample data sets in JMP if possible.

Uday

Re: Prediction formula null values

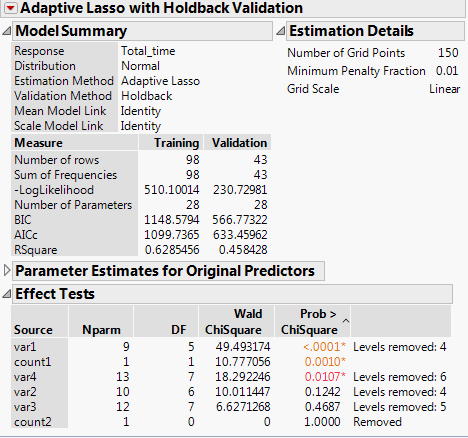

Thanks for taking a look. GenReg is modeling 6 predictors to estimate Total_time. Under Effect Tests (see screenshot), var1 is highly significant but has 4 levels removed; count1 is fine because it is a continuous variable. Var2, Var3, and Var4 each have several levels removed. The screenshot of the prediction formula for Total_time shows that about 90% of the calculated values are null. I understand that I will have to reconfigure the categorical variables. Can you help explain how to handle "levels removed"? Is this why the model applies to only 10% of the data?

Re: Prediction formula null values

A categorical factor with k levels requires k-1 parameter estimates. You have many levels for each of your categorical variables, which translates into a model with many parameters to estimate. You don't have enough data to estimate your model: there are only 98 observations in the training set and 43 in the validation set. There is no way to validate your model, since you don't have observations with each level of all of those categories. JMP does its best to provide a fit to the data, but you need more data. You should rethink what model you wish to fit and the format of your data.
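To make the k-1 arithmetic concrete, here is a rough sketch in Python (not JSL, and not anything JMP runs internally). The level counts are made up for illustration, since the thread does not report the real ones, and the sixth predictor is left out because it is not named. Only the 98 training rows come from the post above. The helper `parameter_count` is a hypothetical name.

```python
def parameter_count(levels_per_factor, n_continuous=0, intercept=True):
    """Main-effect parameter count: (k - 1) per categorical factor,
    one per continuous predictor, plus an optional intercept."""
    categorical = sum(k - 1 for k in levels_per_factor.values())
    return categorical + n_continuous + (1 if intercept else 0)


# Hypothetical level counts (assumption: the real counts are not given in the thread).
levels = {"var1": 12, "Var2": 25, "Var3": 30, "Var4": 18}

n_train = 98  # training rows, as stated in the reply above

# count1 is the one continuous predictor mentioned in the thread.
p = parameter_count(levels, n_continuous=1)

print(f"parameters to estimate: {p}")
print(f"training rows:          {n_train}")
print(f"rows per parameter:     {n_train / p:.2f}")
```

With counts like these, the model would have barely more than one training row per parameter, which is the kind of situation the reply describes. Plugging in your actual level counts should show how far the model is from being estimable, and how much collapsing sparse levels would help.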
