
Can I check F 1 score in JMP

Hi! Can I check the F1 score in JMP Pro when I'm comparing different models?

Also, does JMP provide precision or recall values for the models?

Many thanks!!

Accepted Solutions


Re: Can I check F 1 score in JMP

This type of question can be directed to JMP Technical Support so we can get involved.

As for the question, JMP reports Sensitivity and 1-Specificity in the ROC Table when the "ROC Curve" menu item is selected, along with the counts of True Positives, True Negatives, False Positives, and False Negatives. F1 is not listed explicitly but, going by its description, it can be calculated from these measures.
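As a minimal sketch of that calculation (plain Python, not a JMP feature; the function name and the example counts are hypothetical), precision, recall, and F1 can be derived from the True Positive, False Positive, and False Negative counts. Recall is the same quantity as Sensitivity. Precision needs the counts themselves, because it cannot be recovered from Sensitivity and 1-Specificity without also knowing the class totals.

```python
def precision_recall_f1(tp: int, fp: int, fn: int) -> tuple[float, float, float]:
    """Return (precision, recall, F1) from confusion-matrix counts.

    Any ratio with a zero denominator is reported as 0.0 (a convention
    chosen for this sketch, not something JMP is known to do).
    """
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0  # recall == sensitivity
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


# Hypothetical counts, standing in for values read off a ROC Table row.
precision, recall, f1 = precision_recall_f1(tp=40, fp=10, fn=20)
print(f"precision={precision:.3f} recall={recall:.3f} F1={f1:.3f}")
```

True Negatives do not appear anywhere in the calculation, which is why F1 behaves differently from overall accuracy.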


Re: Can I check F 1 score in JMP

The F1 score (and other popular measures of accuracy) has been deprecated in some corners of the Machine Learning community because of how it performs under bias. Please refer to the attached file, which suggests the utility of Receiver Operating Characteristic (ROC) curves and other measures.

JMP does include ROC curves (and Lift Curves) in some platforms.


Re: Can I check F 1 score in JMP

Hello @Oleg,

@Jenny wrote:

...didn't find precision and recall under model comparison. I'm wondering if JMP has these values.

As @Duane_Hayes answered in 2017, JMP does not report these values natively in most platforms (as of the release of JMP 16.2), but they can be calculated from the output currently provided.

@Jenny wrote:

From my understanding, we sometimes may care more about precision or recall than misclassification rate in different situations, right?

Yes, this is my understanding.

To this end,

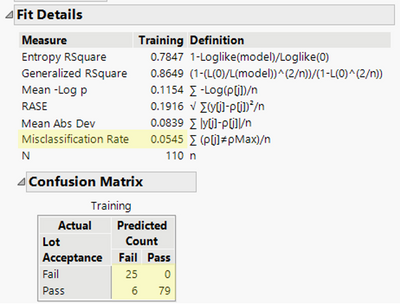

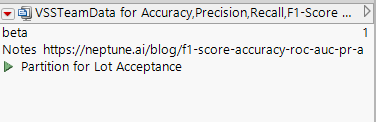

I am attaching an example with calculations in column formulas in JMP where Accuracy, F1-Score, Precision and Recall are calculated, using the formulas that I was readily able to find. Those for Precision and Recall are consistent with what @Jenny provided (also see p. 144 of "The StatQuest Illustrated Guide to Machine Learning!!!" by Josh Starmer, Ph.D, © 2022). Formulas for Accuracy and F1-Score can be found here among other places (Wikipedia): https://neptune.ai/blog/f1-score-accuracy-roc-auc-pr-auc. This example is from the VSSTeamData.jmp sample data file available as part of JMP's free online applied statistics course, STIPS. The calculations are consistent with what's reported by JMP in the Confusion Matrix output associated with the Partition model generated and saved herein as a saved data table script.
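For reference, the standard textbook definitions of these four measures in terms of confusion matrix counts (TP, TN, FP, FN) are written out below. They are not a transcription of the attached column formulas, so the attachment remains the authority on what was actually computed there.

```latex
\begin{align*}
\text{Accuracy}  &= \frac{TP + TN}{TP + TN + FP + FN} \\
\text{Precision} &= \frac{TP}{TP + FP} \\
\text{Recall}    &= \frac{TP}{TP + FN} \\
F_1 &= \frac{2 \cdot \text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}}
     = \frac{2\,TP}{2\,TP + FP + FN}
\end{align*}
```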

Click the green play button next to the script name to regenerate the model after opening the .jmp data table.

I hope this information is helpful! - Patrick Giuliano (@JMP Technical Support)


Re: Can I check F 1 score in JMP

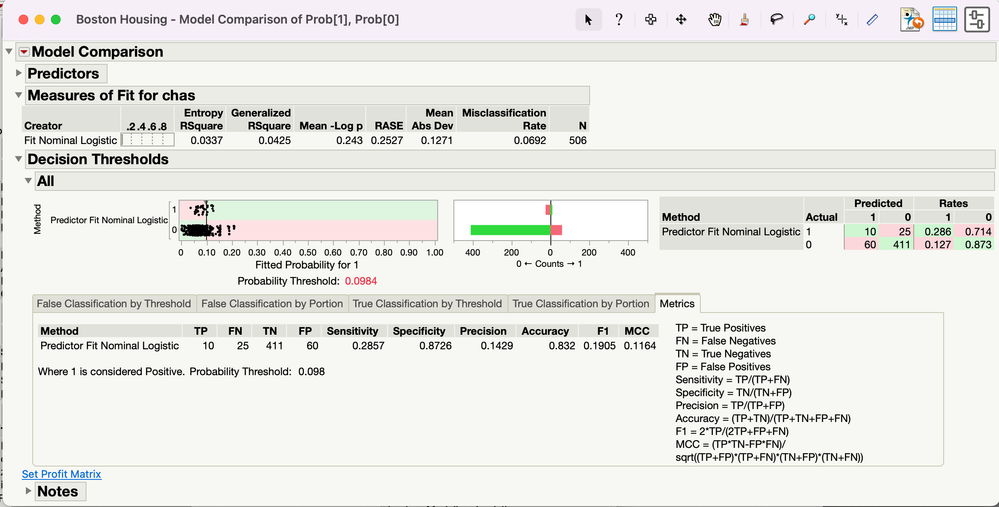

F1 is reported in the Decision Threshold section of Model Comparison. To use it, save your prediction formula to the data table, then run Analyze > Predictive Modeling > Model Comparison and choose Decision Threshold from the red triangle menu.
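The idea of reporting F1 per decision threshold can be sketched outside JMP. The Python below is an illustrative assumption, not JMP's implementation: the function name `f1_at_threshold`, the probabilities, and the labels are all hypothetical. A row is classified as positive when its predicted probability is at or above the cutoff, and F1 is recomputed for each cutoff.

```python
def f1_at_threshold(probs, actual, threshold):
    """F1 when rows with probability >= threshold are called positive."""
    tp = fp = fn = 0
    for p, y in zip(probs, actual):
        predicted = 1 if p >= threshold else 0
        if predicted == 1 and y == 1:
            tp += 1
        elif predicted == 1 and y == 0:
            fp += 1
        elif predicted == 0 and y == 1:
            fn += 1
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


# Hypothetical saved prediction probabilities and true classes (1 = positive).
probs = [0.95, 0.80, 0.65, 0.40, 0.30, 0.10]
actual = [1, 1, 0, 1, 0, 0]

scores = {t: f1_at_threshold(probs, actual, t) for t in (0.25, 0.5, 0.75)}
for t, score in scores.items():
    print(f"threshold={t:.2f}  F1={score:.3f}")
```

Because F1 changes with the cutoff, comparing models at a single default threshold can give a different ranking than comparing them at each model's best threshold.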


Re: Can I check F 1 score in JMP

I assume you have looked at the Model Comparison platform in JMP Pro. Unfortunately, I'm not familiar with the 'F1 score', so can you give further details or a link, please? Regarding your second question, are you thinking about predicted values, and the uncertainties in these?


Re: Can I check F 1 score in JMP

Hi! Thank you so much for your reply. F1 score is a measure of test accuracy. Here's a link to the Wikipedia article on F1 score: https://en.wikipedia.org/wiki/F1_score

And for the second question, yes, I'm thinking about the predicted values.


Re: Can I check F 1 score in JMP

Hi Ian,

I am a bit surprised by your reply. It is not too much effort (especially for a JMP expert) to web-search "F1 score", self-educate, and reply to the user with a meaningful answer. Moreover, the inquirer provided additional info but never received meaningful support. That is surprising too.

Would you or anyone else with the appropriate expertise help the JMP community with this question? F1 score is a key measure in logistic regression (or any model with a nominal response) with imbalanced response levels (significantly more true negatives than true positives). Greatly appreciated. As noted, Wikipedia describes these concepts in detail.
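To illustrate the point about imbalanced response levels, here is a small Python sketch with made-up counts (hypothetical, not taken from any JMP output). A model that finds only a few of the rare positives can still show high accuracy because true negatives dominate, while F1 ignores true negatives and stays low.

```python
# Hypothetical confusion-matrix counts for 1000 rows with only 20 positives.
tp, fp, fn, tn = 5, 5, 15, 975

accuracy = (tp + tn) / (tp + fp + fn + tn)
f1 = 2 * tp / (2 * tp + fp + fn)  # true negatives do not enter F1

print(f"accuracy={accuracy:.3f}  F1={f1:.3f}")
```

With these counts the accuracy is 0.98 while F1 is about 0.33, even though the model misses 15 of the 20 positives.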

The Model Comparison link you provided is elaborate, but seems to be missing the F1 score computation. I'm sure this can be done by hand, but, if missing in JMP, should be strongly considered as a feature. Thanks in advance.


Re: Can I check F 1 score in JMP

A wise man once said that 'the only scarce commodity is time', and I think it's understood that the Community relies on crowdsourcing knowledge (from users and JMP staff alike), and could not operate effectively if it were reliant on a small group.

In addition to the Community, there is also the official support channel, which anyone who licenses a SAS product may use. One key difference is that there are service level agreements for response times, and internal processes for escalation to achieve satisfactory resolution. This is definitely the best mechanism for surfacing suspected bugs, but also for making new feature requests. In addition, 'How do I?' questions, or 'I'm used to software X but can't do the same thing in JMP' questions will also be answered, but within this more rigorous and predictable framework.

So, for bugs and features, or if the Community appears unresponsive, I would certainly consider this channel too. And, stating the obvious perhaps, it doesn't have to be 'one or the other'. Many Community threads have led to support tracks.


Re: Can I check F 1 score in JMP

Hi Ian, you asked for a formula. Jenny provided one. Still no solution, yet the discussion was viewed 500+ times. So, the question has weight.


Re: Can I check F 1 score in JMP

Can anyone provide a clear and succinct answer to Jenny's question, instead of dumping an opinion in this discussion? This is not a blog. Question asked, question answered. Thanks in advance.

