Auto-correlation in multi-linear regression model with time-dependent variable

Hi all,

I'm trying to fit a multi-linear regression model to data from a study that spanned many weeks. One of the factors is cell age, and it does seem to have a significant effect on the response. Looking at the Residuals vs. Row plot and the Durbin-Watson test, it appears that the data are not independent (i.e., auto-correlation exists). However, since cell age is a time-dependent factor, wouldn't auto-correlation be expected? In this case, would I still need to correct for auto-correlation somehow, and are there options in JMP for doing so?

Thank you!

Re: Auto-correlation in multi-linear regression model with time-dependent variable

Just something to double-check: the Durbin-Watson option displays the test statistic. The option to display the p-value is then on the associated red triangle (not a direct answer to your question, just an observation that it's easy to misinterpret the test).
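To make the distinction between the statistic and its p-value concrete, here is a minimal Python sketch of what the Durbin-Watson statistic measures. It is not JMP's implementation; the function names and the simulated residual series are assumptions made for illustration only.

```python
import numpy as np

def durbin_watson(residuals):
    """Sum of squared successive differences divided by the sum of squared residuals."""
    e = np.asarray(residuals, dtype=float)
    return np.sum(np.diff(e) ** 2) / np.sum(e ** 2)

def lag1_autocorrelation(residuals):
    """Simple lag-1 autocorrelation estimate of the residuals."""
    e = np.asarray(residuals, dtype=float)
    return np.sum(e[1:] * e[:-1]) / np.sum(e ** 2)

# Hypothetical residuals: one independent series, one AR(1) series with rho = 0.8.
rng = np.random.default_rng(0)
n = 500
independent = rng.normal(size=n)
correlated = np.empty(n)
correlated[0] = independent[0]
for t in range(1, n):
    correlated[t] = 0.8 * correlated[t - 1] + independent[t]

dw_independent = durbin_watson(independent)
dw_correlated = durbin_watson(correlated)
r_correlated = lag1_autocorrelation(correlated)

print(f"independent residuals: DW = {dw_independent:.2f}")
print(f"AR(1) residuals:       DW = {dw_correlated:.2f}, lag-1 r = {r_correlated:.2f}")
```

The statistic ranges from 0 to 4 and is roughly 2(1 - r), where r is the lag-1 autocorrelation of the residuals. A value near 2 suggests little lag-1 autocorrelation, and a value well below 2 suggests positive autocorrelation. The p-value is a separate quantity that says how surprising the statistic would be if the errors were independent.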

Re: Auto-correlation in multi-linear regression model with time-dependent variable

Yes! The test statistic for correlation is about 0.4, and the p-value is < 0.001.

Re: Auto-correlation in multi-linear regression model with time-dependent variable

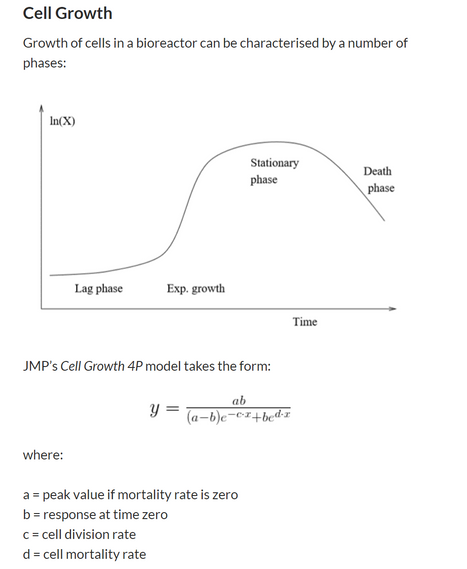

Sorry to give another response that doesn't answer your question - just another observation - you can use the 'Fit Curve' platform to fit a nonlinear cell growth model.

Re: Auto-correlation in multi-linear regression model with time-dependent variable

Hi @David_Burnham, thank you for your responses! I actually used the Cell Growth 4P model in the past (for a different purpose) and it did work very well! However, what I'm trying to achieve is quite different. I'm providing more information below.

For our current study, we're looking at different factors that might impact the productivity of our cell line. There are several factors, both categorical and continuous. Categorical factors include media types, batches, etc., while continuous factors include the number of times the cells have been subcultured (what I referred to as "cell age" above) and culture conditions. The response is how much protein of interest the cells produce per, say, 1-week process run. So let's say that at the beginning of week 1 we transfer the cells into a flask to start a process, and at the end of week 1 we measure how much protein the cells produced. That would be one data point. We would then repeat this for many weeks to gather more data points. Now, if it turns out that the cells produce less and less protein with subsequent runs, then cell age would be a statistically significant factor in my final model. My question is, wouldn't this cause auto-correlation? And if so, should I attempt to correct for it or just accept that it's inherent to the study?
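One way to probe this question is a small simulation. The Python sketch below is purely illustrative: the numbers, the variable names (`passage`, `titer`), and the assumption of independent errors are all made up and are not taken from the study. It generates a response that declines with cell age, then compares the Durbin-Watson statistic of the residuals when cell age is in the model and when it is left out.

```python
import numpy as np

def durbin_watson(residuals):
    """Sum of squared successive differences divided by the sum of squared residuals."""
    e = np.asarray(residuals, dtype=float)
    return np.sum(np.diff(e) ** 2) / np.sum(e ** 2)

def ols_residuals(X, y):
    """Residuals from an ordinary least-squares fit of y on the columns of X."""
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    return y - X @ beta

# Hypothetical data: productivity falls with each passage, errors are independent.
rng = np.random.default_rng(1)
n_runs = 200
passage = np.arange(1, n_runs + 1, dtype=float)   # stand-in for "cell age"
titer = 100.0 - 0.2 * passage + rng.normal(scale=3.0, size=n_runs)

ones = np.ones(n_runs)
resid_with_age = ols_residuals(np.column_stack([ones, passage]), titer)
resid_without_age = ols_residuals(ones[:, None], titer)

dw_with_age = durbin_watson(resid_with_age)
dw_without_age = durbin_watson(resid_without_age)

print(f"cell age in the model:  DW = {dw_with_age:.2f}")
print(f"cell age left out:      DW = {dw_without_age:.2f}")
```

Under these assumptions, a trend in the response that is captured by a factor in the model does not by itself leave auto-correlation in the residuals. The Durbin-Watson test looks at the residuals, so a low value with cell age already in the model would point to time structure that the model has not accounted for.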

Re: Auto-correlation in multi-linear regression model with time-dependent variable

I was hoping that we might have had some other contributions to this discussion, but I guess the forum is quiet due to July 4.

The general implication of autocorrelation is that standard errors tend to be underestimated, which has consequences for statistical inference.

One approach is to use a regression model that uses an autoregressive representation of the errors (i.e. a time-series model for the errors that accounts for the autocorrelation).

Here is a useful discussion:

https://online.stat.psu.edu/stat462/node/189/

The good news is that at the bottom of the article they provide an actual example, including data, the calculations and the results. The worked example is based on the Cochrane-Orcutt procedure.

I was able to work through the procedure using JMP and at each step the results agreed.

You might want to try for yourself with their example. One thing you will need to do is construct a column formula for a simple autoregressive model of the residuals - something you can do if you are familiar with the lag function in JMP.
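For anyone who wants to see the sequence of steps outside JMP, here is a minimal Python sketch of a single pass of the Cochrane-Orcutt procedure for one predictor. It assumes AR(1) errors and estimates the autoregressive coefficient by regressing the residuals on their lag-1 values without an intercept. The data are simulated and the function names are hypothetical; this is not the linked article's code or a JMP script.

```python
import numpy as np

def ols(X, y):
    """Least-squares coefficients for y on the columns of X."""
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    return beta

def cochrane_orcutt(x, y):
    """One Cochrane-Orcutt pass for y = b0 + b1*x + e, with e_t = rho*e_(t-1) + u_t."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)

    # Step 1: ordinary least squares on the original data, keep the residuals.
    X = np.column_stack([np.ones_like(x), x])
    e = y - X @ ols(X, y)

    # Step 2: estimate rho from the residuals and their lag-1 values (no intercept).
    rho = np.sum(e[1:] * e[:-1]) / np.sum(e[:-1] ** 2)

    # Step 3: transform response and predictor, then refit (first row is lost).
    y_star = y[1:] - rho * y[:-1]
    x_star = x[1:] - rho * x[:-1]
    b_star = ols(np.column_stack([np.ones_like(x_star), x_star]), y_star)

    # Step 4: back-transform the intercept; the slope carries over unchanged.
    return b_star[0] / (1.0 - rho), b_star[1], rho

# Simulated example: true intercept 10, slope -0.05, AR(1) errors with rho = 0.7.
rng = np.random.default_rng(2)
n = 300
x = np.arange(n, dtype=float)
errors = np.empty(n)
errors[0] = rng.normal()
for t in range(1, n):
    errors[t] = 0.7 * errors[t - 1] + rng.normal()
y = 10.0 - 0.05 * x + errors

intercept, slope, rho = cochrane_orcutt(x, y)
print(f"intercept = {intercept:.2f}, slope = {slope:.4f}, rho = {rho:.2f}")
```

Step 2 is the part that corresponds to the lag-based column formula: the lagged residual is the predictor in a no-intercept regression. In practice the standard errors should be read from the fit on the transformed data in step 3, and the procedure can be repeated if the transformed residuals still show autocorrelation.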

If you think this procedure might be beneficial, but get stuck with it, let me know and I can make a video recording of the sequence.

Re: Auto-correlation in multi-linear regression model with time-dependent variable

Hi David,

Thank you so much for your answer and explanation. That link you sent looks to be an excellent resource for MLR in general. I'll definitely give it a try with my data.

Thanks again!
