
Least Popular Day of the Week

Caturday is not the least popular day of the week.

Almost a year ago I started collecting trending-word data from Twitter, with no real goal in mind other than making an artistic video. Except for gaps from a few power failures, the data is pretty complete: ten places sampled every five minutes.

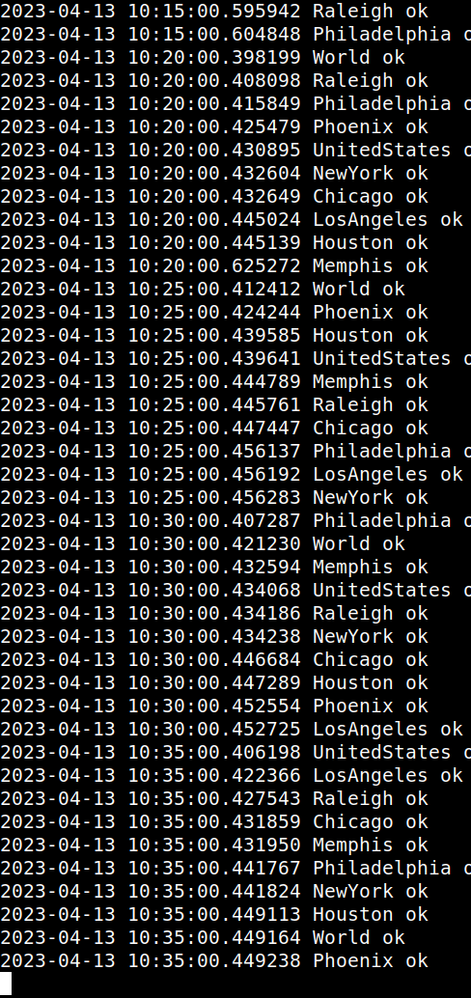

A typical result from the Twitter API looks like this:

["2022-10-06 21:20:00.366761", "#SheHulk", "Matt Ryan", "Colts", "Colts", "Mario", "Hines", "#TNFonPrime", "Bowser", "#ThursdayNightFootball", "Russell Wilson", "#FanDuelisTheGREATEST", "#INDvsDEN", "Judy Tenuta", "Quavo", "Graphic B", "Hunter Biden", "Saweetie", "Matty Ice", "Luigi", "Phillip Lindsay", "Caden Sterns", "Jovic", "Charles Martinet", "Marijuana", "Offset", "Kathy", "Dank Brandon", "Carina", "Pittman", "Sasse", "Brooklyn", "Commanders vs Bears", "Frank Reich", "Primetime", "Elvis", "Mushroom Kingdom", "Jamal Cain", "Toad", "Sara Lee", "Sam Ehlinger", "Darren Bailey", "Norville", "Deon Jackson", "Rodney Thomas", "Ryan Kelly", "Mumbles McTouchdown", "Melvin Gordon", "Ranboo", "Illumination", ""]
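To make that layout concrete, here is a small Python sketch (Python rather than JSL, purely for illustration) that splits one such record into its timestamp and its terms. The helper name `parse_record` is made up for this example, and it assumes every record is a JSON array with the timestamp first and possibly an empty string at the end, as in the sample above.

```python
import json
from datetime import datetime

def parse_record(line):
    """Split one JSON array into (timestamp, list of trending terms)."""
    fields = json.loads(line)
    # Assumption: the first field is always a timestamp in this format.
    stamp = datetime.strptime(fields[0], "%Y-%m-%d %H:%M:%S.%f")
    # Drop empty strings such as the trailing "" in the sample.
    terms = [t for t in fields[1:] if t]
    return stamp, terms

# A shortened copy of the sample record, not a full 50-term result.
sample = '["2022-10-06 21:20:00.366761", "#SheHulk", "Matt Ryan", "Colts", "Colts", ""]'
stamp, terms = parse_record(sample)
print(stamp.isoformat(), terms)
```

Duplicates such as the repeated "Colts" are kept on purpose; whether to collapse them is a decision for the counting step.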

Usually there are 50 trending terms for each location. The nine US locations have a lot of words in common, and a trend lasts for a number of five-minute periods, so even a tiny trend gets a lot of counts. Here's the JSL I'm using to load the files and make a table of words and frequencies.

// for this quick test I copied the json files to my desktop.

// probably should put them in their own directory...

dir = "$desktop/";

names = Filter Each( {j}, Files In Directory( dir ), Ends With( j, ".json" ) );

// I'll need a list of open data tables

dtfiles = {};

For Each( {file}, names, Insert Into( dtfiles, Open( dir || file, JSON Settings( Col( "/root", Type( "JSON Lines" ) ) ) ) ) );

// I'll use an associative array to gather the frequency info

WordToFrequency = [=> 0];

For Each( {dt}, dtfiles,

For Each Row( dt,

// there are a lot of ways to do this; here it makes a list of col2..col51 from the current row

row = dt[Row(), 2 :: N Cols( dt )];

// then process the list

For Each( {word}, row, WordToFrequency[word] += 1 );

)

);

// then make a data table from the associative array

dtWF = New Table( "wordfreq",

addrows( N Items( WordToFrequency ) ),

New Column( "word", character, values( WordToFrequency << getkeys ) ),

New Column( "freq", numeric, values( WordToFrequency << getvalues ) )

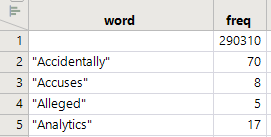

);The table is sorted by word:

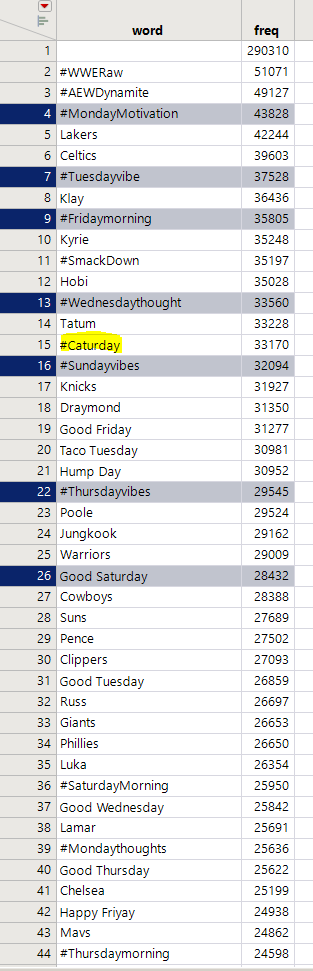

The first interesting question is "what is the trendiest word?" Sort by freq:

There it is. Saturday is the least popular day of the week.

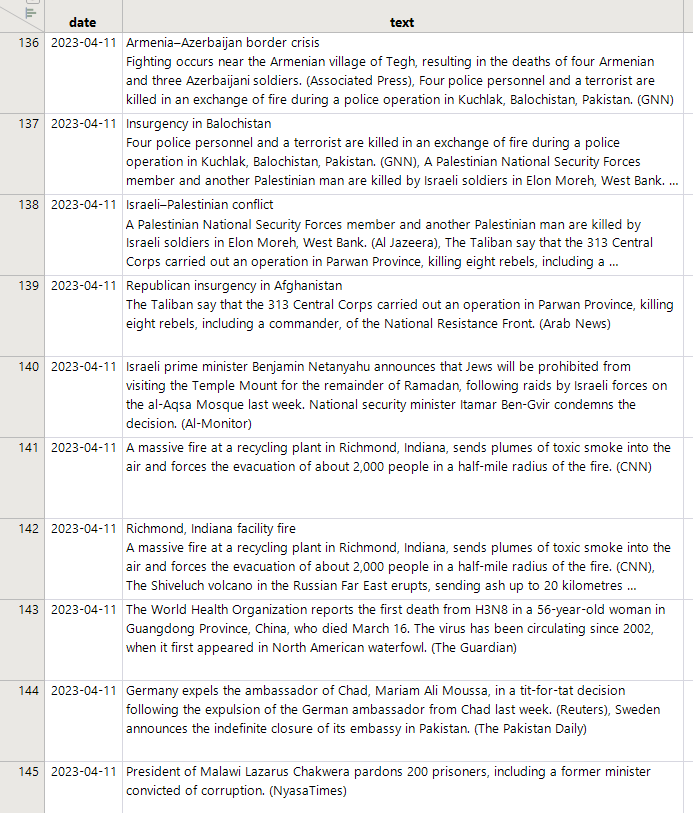
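For anyone following along outside JMP, the tally-and-sort step can be sketched in Python with `collections.Counter`. This is an illustration, not a port of the JSL above: the function name `count_terms` and the tiny `records` list are made up, and unlike the JSL it skips empty strings.

```python
from collections import Counter

def count_terms(records):
    """Count every occurrence of each term across all samples."""
    freq = Counter()
    for terms in records:
        # Skip empty strings (an assumption; the JSL counts them too).
        freq.update(t for t in terms if t)
    return freq

# Hypothetical stand-in data: each inner list is one five-minute sample.
records = [
    ["Colts", "Mario", "Luigi"],
    ["Colts", "Mario"],
    ["Colts"],
]
freq = count_terms(records)
most_first = freq.most_common()                            # trendiest first
least_first = sorted(freq.items(), key=lambda kv: kv[1])   # rarest first
print(most_first)
print(least_first)
```

Sorting the same counts in the other direction is what surfaces the rarely seen words.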

As mentioned in WebSocket, I made a boring visualization of this data earlier. The data is by city (or US or World) and has a timestamp. I've got a separate program that scrapes news items from Wikipedia, which might help. Maybe not; Twitter trending words seem loosely (at best) connected to actual news.

(Caution when using this script: it abuses the similarity between HTML and XML to turn HTML into something that JMP's XML reader can process. You'll need to figure out why any particular HTML does not look enough like XML and repair it before using this idea. See the regex around line 10: it deletes the HTML bits that mess up the XML reader. Using Python and Beautiful Soup might be easier, but I've not done that.)

    dtBig = New Table( "news",
        New Column( "date", Numeric, "Continuous", Format( "yyyy-mm-dd", 12 ), Input Format( "yyyy-mm-dd" ) ),
        New Column( "text", Character, "Unstructured Text" )
    );
    For( ddd = 1apr2023, ddd < Today(), ddd += In Days( 1 ),
        url = "https://en.wikipedia.org/wiki/Portal:Current_events/" || Format( ddd, "Format Pattern", "<YYYY>_<Month>_<D>" );
        txt = Load Text File( url );
        Length( txt );
        xml = Regex(
            txt,
            "<!DOCTYPE html>|<!doctype html>|&nbsp;|(<script.*?</script>)|(<form.*?</form>)|(<option.*?</option>)|crossorigin|data-mw-ve-target-container|<link.*?>|<meta.*?>|<input.*?>|<img.*?>",
            "",
            GLOBALREPLACE
        );
        dt = Open(
            Char To Blob( xml ),
            XML Settings(
                Stack( 0 ),
                Row( "/html/body/div/div/div/main/div/div/div/div/div/div/ul" ),
                Row( "/html/body/div/div/div/main/div/div/div/div/div/div/ul/li/ul" ),
                Row( "/html/body/div/div/div/main/div/div/div/div/div/div/ul/li/ul/li/ul" ),
                Row( "/html/body/div/div/div/main/div/div/div/div/div/div/ul/li/ul/li/ul/li/ul" ),
                Row( "/html/body/div/div/div/main/div/div/div/div/div/div/ul/li/ul/li/ul/li/ul/li/ul" ),
                Col(
                    "/html/body/div/div/div/main/div/div/div/div/div/div/ul/li",
                    Column Name( "text" ),
                    Fill( "Use Once" ),
                    Type( "Original Text" ),
                    Format( {"Best"} ),
                    Modeling Type( "Continuous" )
                ),
                Col(
                    "/html/body/div/div/div/main/div/div/div/div/div/div/ul/li/ul/li",
                    Column Name( "text" ),
                    Fill( "Use Once" ),
                    Type( "Original Text" ),
                    Format( {"Best"} ),
                    Modeling Type( "Continuous" )
                ),
                Col(
                    "/html/body/div/div/div/main/div/div/div/div/div/div/ul/li/ul/li/ul/li",
                    Column Name( "text" ),
                    Fill( "Use Once" ),
                    Type( "Original Text" ),
                    Format( {"Best"} ),
                    Modeling Type( "Continuous" )
                ),
                Col(
                    "/html/body/div/div/div/main/div/div/div/div/div/div/ul/li/ul/li/ul/li/ul/li",
                    Column Name( "text" ),
                    Fill( "Use Once" ),
                    Type( "Original Text" ),
                    Format( {"Best"} ),
                    Modeling Type( "Continuous" )
                ),
                Col(
                    "/html/body/div/div/div/main/div/div/div/div/div/div/ul/li/ul/li/ul/li/ul/li/ul/li",
                    Column Name( "text" ),
                    Fill( "Use Once" ),
                    Type( "Original Text" ),
                    Format( {"Best"} ),
                    Modeling Type( "Continuous" )
                ),
            ),
            XML Wizard( 0 )
        );
        For Each Row( dt, dt:text = Regex( dt:text, "<[^>]+>", "", GLOBALREPLACE ) );
        dt << New Column( "date", Numeric, "Continuous", Format( "yyyy-mm-dd", 12 ), Input Format( "yyyy-mm-dd" ), <<seteachvalue( ddd ) );
        dtBig << Concatenate( dt, Append to first table );
        Close( dt, nosave );
        Wait( 1 );
    );

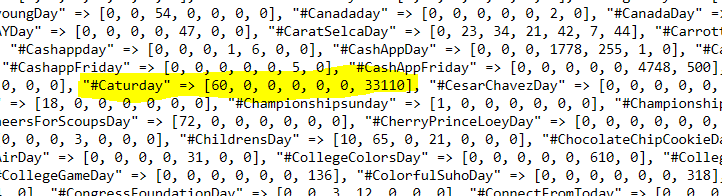
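Since Python came up as a possibly easier route, here is a rough sketch of the same idea using only the standard library's `html.parser` instead of Beautiful Soup. Everything named here (`current_events_url`, `ListItemText`, `extract_items`, the test HTML) is hypothetical, it does no downloading, and it assumes every `<li>` on the page is explicitly closed. It has not been run against the real Wikipedia pages.

```python
from datetime import date
from html.parser import HTMLParser

MONTHS = ["January", "February", "March", "April", "May", "June", "July",
          "August", "September", "October", "November", "December"]

def current_events_url(day):
    """Build the Portal:Current_events URL for one date, e.g. 2023_April_1."""
    return ("https://en.wikipedia.org/wiki/Portal:Current_events/"
            f"{day.year}_{MONTHS[day.month - 1]}_{day.day}")

class ListItemText(HTMLParser):
    """Collect the text of each <li>, crediting text to the innermost open item."""

    def __init__(self):
        super().__init__()
        self.items = []   # one slot per <li>, in document order
        self.stack = []   # (slot index, text pieces) for each open <li>

    def handle_starttag(self, tag, attrs):
        if tag == "li":
            self.items.append("")
            self.stack.append((len(self.items) - 1, []))

    def handle_endtag(self, tag):
        if tag == "li" and self.stack:
            index, pieces = self.stack.pop()
            # Join the pieces and collapse runs of whitespace.
            self.items[index] = " ".join("".join(pieces).split())

    def handle_data(self, data):
        if self.stack:
            self.stack[-1][1].append(data)

def extract_items(html):
    """Return the non-empty list-item texts found in an HTML string."""
    parser = ListItemText()
    parser.feed(html)
    parser.close()
    return [text for text in parser.items if text]

# Made-up HTML with the same nested-list shape as a news page.
html = ("<ul><li>Armed conflicts<ul><li>Event <a href='#'>A</a> (Source)</li></ul></li>"
        "<li>Sports</li></ul>")
print(current_events_url(date(2023, 4, 1)))
print(extract_items(html))
```

Because the parser is tolerant of HTML, there is no need to delete `<script>`, `<meta>`, and similar tags first, which is the part of the JSL version that needs hand-tuning.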

OK, I researched Caturday a bit more. It may be linked to Saturday. Let's do an analysis: collect all the trend words that end in "day" and record the day of the week each one shows up on.

    days = [=> ];
    For Each( {dt}, dtfiles,
        (dt:col) << datatype( "numeric" );
        For Each Row( dt,
            row = Filter Each( {trend}, dt[Row(), 2 :: N Cols( dt )], (Ends With( Lowercase( trend ), "day" )) );
            dow = Day Of Week( dt:col );
            For Each( {trend}, row,
                If( !Contains( days, trend ),
                    days[trend] = [0, 0, 0, 0, 0, 0, 0]
                );
                days[trend][dow] += 1;
            );
        );
    );

Wow. There's a bunch (1568). It looks like Caturday and Saturday are strongly linked. Maybe Thursday is actually the least popular.

The spill-over into Sunday is likely a time zone thing.
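The per-weekday tally is easy to try outside JMP too. Here is a Python sketch under a few assumptions: `tally_day_words` and the three `samples` are invented for illustration, timestamps are used exactly as recorded with no time zone adjustment, and the counts are ordered Sunday first to match JSL's `Day Of Week`.

```python
from collections import defaultdict
from datetime import datetime

DAY_NAMES = ["Sun", "Mon", "Tue", "Wed", "Thu", "Fri", "Sat"]

def tally_day_words(samples):
    """Map each term ending in 'day' to seven counts, Sunday first."""
    days = defaultdict(lambda: [0] * 7)
    for stamp, terms in samples:
        # Python's weekday() has Monday = 0; shift so that Sunday = 0.
        dow = (stamp.weekday() + 1) % 7
        for term in terms:
            if term.lower().endswith("day"):
                days[term][dow] += 1
    return dict(days)

# Hypothetical samples: (timestamp, trending terms) pairs.
samples = [
    (datetime(2022, 10, 6, 21, 20), ["#ThursdayNightFootball", "Colts", "Thursday"]),
    (datetime(2022, 10, 8, 9, 0), ["Caturday", "Saturday"]),
    (datetime(2022, 10, 9, 1, 0), ["Caturday"]),
]
tally = tally_day_words(samples)
for term, counts in tally.items():
    print(term, dict(zip(DAY_NAMES, counts)))
```

In the made-up data, the early Sunday sample stands in for the spill-over: a Caturday trend that is still running after midnight gets counted under Sunday.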

Welcoming any ideas...thanks for reading.

