Screening designs are popular for investigating the behavior of new systems or processes because they make efficient use of the limited resources available. Factors in screening experiments are often limited to two levels to economize on the number of runs that are necessary to fit a model that estimates the linear effect of each factor.

Before designing a screening experiment, it is customary for the team of stakeholders to hold a brainstorming session to identify all the factors that might affect the responses of interest. It is not unusual for such sessions to produce two dozen or more potential factors. Here, in my view, a strange thing happens. The experiment that is designed and performed employs only half a dozen to a dozen of the potential factors. The remaining factors are somehow eliminated without the benefit of data!

Why is this, I hear you ask…

Standard screening designs must have more runs than factors, because the intercept has to be estimated along with the factor effects. If the budget for the experiment allows for 16 runs, many experimenters would feel uncomfortable varying as many as a dozen factors. The maximum number of factors in a standard screening design with 16 runs is 15. However, with 15 factors and 16 runs, there are no degrees of freedom left to estimate the error variance. Such a design is called saturated because all the runs are used to estimate the intercept and the factor effects. Many practicing experimentalists feel that saturated experiments are risky.
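The run-count arithmetic above can be written out as a tiny Python sketch. The function name is hypothetical, and the model is assumed to contain an intercept plus one linear effect per two-level factor, as described in the opening paragraph.

```python
def error_degrees_of_freedom(runs, factors):
    """Degrees of freedom left for error after fitting an intercept
    plus one linear (main) effect per two-level factor."""
    return runs - (factors + 1)


# A dozen factors leaves a few degrees of freedom for error;
# 15 factors in 16 runs is saturated and leaves none.
for factors in (12, 15):
    print(factors, "factors in 16 runs:",
          error_degrees_of_freedom(16, factors), "error df")
```

A negative result would mean there are more model terms than runs, which is the supersaturated case discussed next.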

Must experiments have more runs than factors?

If saturated experiments are risky, then it seems that experiments with more factors than runs (that is, supersaturated experiments) would be even riskier. This risk would be real and crippling if all the factors had a substantial effect on some response. Experience with many actual experiments shows that it is rare for even half of the factors in a screening experiment to be active (meaning they have practically important effects). In fact, the Pareto Principle (or 80/20 rule) posits that 80 percent of important effects are due to 20 percent of the potential causes. The problem is that before running an experiment, you don’t know which vital few factors are going to turn out to be important.

The key insight here is that you don’t need to be able to estimate the effects of all the factors to identify the few that matter.

Based on this insight, several years ago I added a feature to the Custom Design tool in JMP to create supersaturated designs (SSDs). By selecting all the factor effects in the model and changing their Estimability from the default setting of “Necessary” to “If Possible,” you can reduce the number of required runs in a Custom Design to fewer than the number of factors. Judging by the very few technical support calls received about this feature, it is seldom used.

Why are they seldom used?

The analysis and interpretation of the results of these designs require substantial expertise. There is no Easy Button. The lack of a way to reliably separate the signal from the noise is problematic. Therefore, the perceived risk of using supersaturated designs is understandably still high. I have been looking for a way to lower that risk for many years.

You must have found something good or you would not be writing this blog post. What is it?

A group of co-authors and I recently developed a procedure that allows an SSD to estimate the error variance independently of the effect of any factor. In other words, we found a way to separate the signal from the noise.

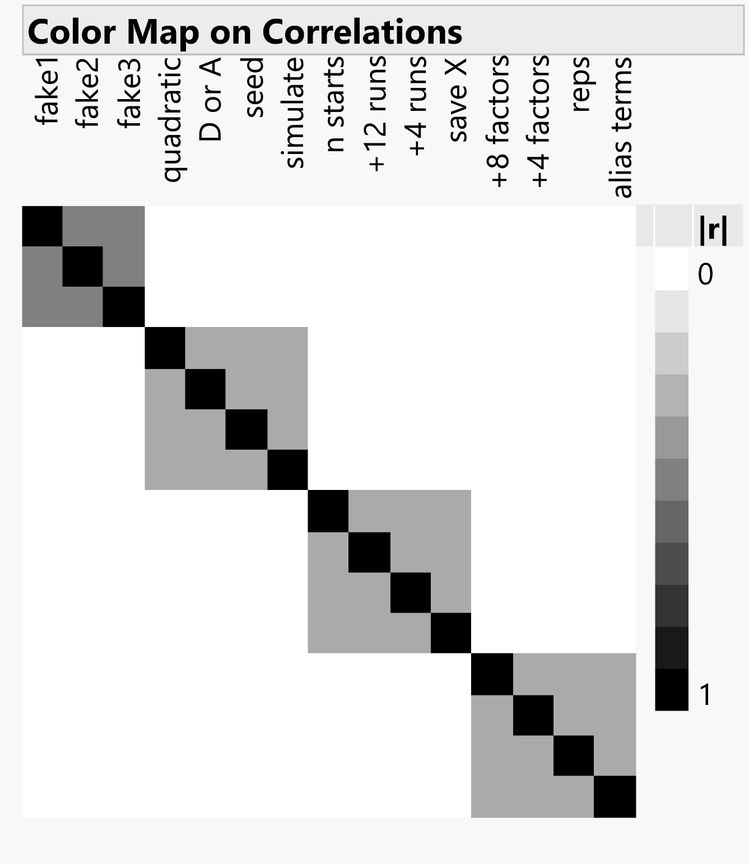

Our procedure for constructing an SSD separates the design factors into groups. The factors in the same group are correlated but factors in separate groups are orthogonal to each other. The correlation cell plot in the figure provides a quick visualization of this fact.

Note that the first group has three factors labeled fake1 to fake3. There is actually a fourth “factor” in this group: the intercept column. Instead of assigning real factors to the columns in the first group, we can use these “fake factor” columns to estimate the error variance. Since these columns are orthogonal to all the rest of the columns, this error variance estimate is independent of any factor effect.
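To make the independence claim concrete, here is a minimal Python sketch. It is not JMP's implementation. It assumes a small 12-run design built as a Kronecker product of a 4 by 4 Hadamard matrix with a second 4 by 4 Hadamard matrix that has one row removed, which is the construction this post describes. The function names, the effect sizes, and the specific estimator (projecting the response onto the span of the first group's columns and removing the grand mean) are illustrative assumptions.

```python
import random


def sylvester_hadamard(n):
    """n x n Hadamard matrix for n a power of 2 (Sylvester doubling)."""
    h = [[1]]
    while len(h) < n:
        h = [r + r for r in h] + [r + [-x for x in r] for r in h]
    return h


def kronecker(a, b):
    """Kronecker product of two matrices stored as lists of rows."""
    return [[x * y for x in ra for y in rb] for ra in a for rb in b]


def fake_factor_variance(y, m, w):
    """Error-variance estimate from the first group, intercept removed.

    Runs are ordered as m blocks of w runs. The first group's columns repeat
    the same pattern in every block, so projecting y onto their span amounts
    to averaging y across blocks at each within-block position.
    """
    means = [sum(y[i * w + k] for i in range(m)) / m for k in range(w)]
    grand = sum(means) / w
    return m * sum((v - grand) ** 2 for v in means) / (w - 1)


m, w = 4, 3
design = kronecker(sylvester_hadamard(m), sylvester_hadamard(4)[:w])


def response(beta, noise):
    """Linear response: design row times beta, plus noise."""
    return [sum(b * x for b, x in zip(beta, row)) + e
            for row, e in zip(design, noise)]


rng = random.Random(1)
noise = [rng.gauss(0.0, 1.0) for _ in design]

# Column 0 is the intercept, columns 1-3 are the fake factors (no effect),
# columns 4-15 hold real factors. The effect sizes below are made up.
beta = [0.0] * 16
beta[0], beta[5], beta[9] = 10.0, 3.0, -2.0

with_effects = fake_factor_variance(response(beta, noise), m, w)
noise_only = fake_factor_variance(noise, m, w)
print("same estimate with and without factor effects:",
      abs(with_effects - noise_only) < 1e-9)
```

Whatever effects are assigned to the real factors, the estimate depends only on the noise, because every real-factor column is orthogonal to the first group.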

The design construction is embarrassingly simple.

Step 1: Start with two Hadamard matrices. JMP 15 provides a JSL function that can supply all but three Hadamard matrices up to the 1,000 by 1,000 matrix.

Example: The scripting command Hadamard(4) yields the matrix below.

[ 1 1 1 1,
1 -1 1 -1,
1 1 -1 -1,
1 -1 -1 1 ]

Step 2: Remove some rows (but not as many as half the rows) from the second matrix.

Step 3: Take the Kronecker product of the first matrix with the reduced second matrix, and you have a group-orthogonal supersaturated design (GO SSD).

Direct Product is the JSL function for generating the Kronecker product of two matrices.
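The three steps can also be sketched outside JMP. The Python below is an illustration, not the JSL the platform runs: it builds Hadamard matrices with the Sylvester doubling construction (which only covers sizes that are powers of 2), removes the last row of the second matrix as an arbitrary choice, and then checks the group structure of the result.

```python
def sylvester_hadamard(n):
    """n x n Hadamard matrix for n a power of 2 (Sylvester doubling)."""
    if n < 1 or n & (n - 1):
        raise ValueError("n must be a power of 2")
    h = [[1]]
    while len(h) < n:
        h = [r + r for r in h] + [r + [-x for x in r] for r in h]
    return h


def kronecker(a, b):
    """Kronecker product of two matrices stored as lists of rows."""
    return [[x * y for x in ra for y in rb] for ra in a for rb in b]


def dot(u, v):
    return sum(x * y for x, y in zip(u, v))


h = sylvester_hadamard(4)        # Step 1: first Hadamard matrix
t = sylvester_hadamard(4)[:3]    # Steps 1-2: second matrix, last row removed
design = kronecker(h, t)         # Step 3: 12 runs by 16 columns

# Each consecutive block of len(t[0]) columns forms one group.
columns = list(zip(*design))
size = len(t[0])
groups = [columns[g:g + size] for g in range(0, len(columns), size)]

cross = [dot(u, v)
         for a in range(len(groups)) for b in range(a + 1, len(groups))
         for u in groups[a] for v in groups[b]]
within = [dot(u, v)
          for grp in groups for i, u in enumerate(grp) for v in grp[i + 1:]]

print(len(design), "runs,", len(columns), "columns")
print("columns in different groups orthogonal:", all(c == 0 for c in cross))
print("columns within a group correlated:", any(x != 0 for x in within))
```

In this small case the first column is all ones (the intercept), the next three columns are the fake factors, and the remaining 12 columns can carry real factors, so 12 factors are screened in 12 runs.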

Of course, you don’t have to do this. JMP 15 provides this functionality in the menu:

DOE > Special Purpose > Group Orthogonal Supersaturated > Group Orthogonal Supersaturated Design

You either supply the number of factors you have or the number of runs in your budget, and the designer will show you all your choices.

JMP 15 also provides a built-in automated analysis in the menu right below the designer or as a script in a data table created from the GO SSD platform.

DOE > Special Purpose > Group Orthogonal Supersaturated > Fit Group Orthogonal Supersaturated

The analysis proceeds by first finding the active groups. The degrees of freedom from inactive groups can be pooled to give a more precise estimate of the error variance.
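As a rough illustration of the group-level step, the Python sketch below computes one sum of squares per group and pools the inactive groups into the error estimate. It is not the algorithm in the Fit Group Orthogonal Supersaturated platform. The function names, the effect sizes, and the decision that groups 2 and 3 are inactive are all hypothetical, and the design is the small 12-run Kronecker example (4 by 4 Hadamard matrix times a 4 by 4 Hadamard matrix with one row removed).

```python
import random


def sylvester_hadamard(n):
    """n x n Hadamard matrix for n a power of 2 (Sylvester doubling)."""
    h = [[1]]
    while len(h) < n:
        h = [r + r for r in h] + [r + [-x for x in r] for r in h]
    return h


def kronecker(a, b):
    """Kronecker product of two matrices stored as lists of rows."""
    return [[x * y for x in ra for y in rb] for ra in a for rb in b]


def group_sums_of_squares(y, h, w):
    """Sum of squares of y projected onto each group's column space.

    Group j's columns are (column j of h) Kronecker T, so the projection
    weights block i of the runs by h[i][j] and averages over blocks.
    """
    m = len(h)
    result = []
    for j in range(m):
        coef = [sum(h[i][j] * y[i * w + k] for i in range(m)) / m
                for k in range(w)]
        result.append(m * sum(c * c for c in coef))
    return result


def pooled_error_variance(y, h, w, inactive_groups):
    """Fake-factor sum of squares plus those of groups judged inactive."""
    m = len(h)
    means = [sum(y[i * w + k] for i in range(m)) / m for k in range(w)]
    grand = sum(means) / w
    fake_ss = m * sum((v - grand) ** 2 for v in means)   # w - 1 df
    group_ss = group_sums_of_squares(y, h, w)
    total_ss = fake_ss + sum(group_ss[j] for j in inactive_groups)
    total_df = (w - 1) + w * len(inactive_groups)
    return total_ss / total_df, total_df


h, w = sylvester_hadamard(4), 3
design = kronecker(h, sylvester_hadamard(4)[:w])

# Made-up truth: intercept 10, two active factors, both in group 1.
beta = [0.0] * 16
beta[0], beta[4], beta[6] = 10.0, 3.0, -2.0
signal = [sum(b * x for b, x in zip(beta, row)) for row in design]

rng = random.Random(7)
noise = [rng.gauss(0.0, 1.0) for _ in design]
y = [s + e for s, e in zip(signal, noise)]

print("group sums of squares:", group_sums_of_squares(y, h, w))
print("pooled variance and df:", pooled_error_variance(y, h, w, [2, 3]))
```

Because the groups are mutually orthogonal, the signal in group 1 contributes nothing to the sums of squares of groups 2 and 3, so pooling them adds degrees of freedom to the error estimate without contaminating it.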

For active groups, the active factors can then be identified. If there are too many active factors in a group, JMP will tell you which factors need to be augmented in a small follow-up experiment.

If you need to screen many factors and runs are expensive in either money or time, GO SSDs are the tool for you. Finally, there is an Easy Button for supersaturated design.

Reference

Jones, Bradley; Lekivetz, Ryan; Majumdar, Dibyen; Nachtsheim, Christopher J. and Stallrich, Jonathan W. “Construction, Properties, and Analysis of Group-Orthogonal Supersaturated Designs.” Technometrics, August 2019.
