- This add-in is now available on the JMP Marketplace. Updated versions can be found on its app page.

Calibration Curves

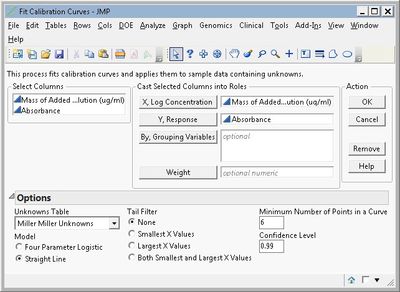

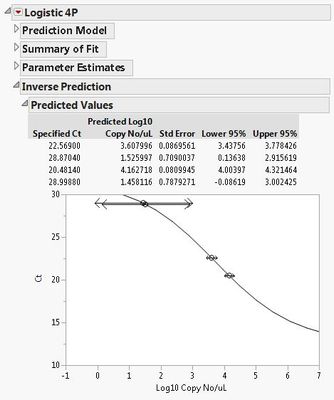

This add-in fits one or more calibration curves, using either a four-parameter logistic or a straight-line model, and then uses the curves to compute inverse predictions for a set of unknowns. The standards data and the unknowns data must be stored in separate JMP tables. The inverse predictions and their confidence limits are copied back into the unknowns table as new columns.
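To make "inverse prediction" concrete, here is a minimal Python sketch of a four-parameter logistic (4PL) curve on the log-concentration scale, its algebraic inverse, and the straight-line inverse. It assumes one common 4PL parameterization, y = d + (a - d) / (1 + exp(b (x - c))); the add-in's own parameterization, fitting routine, and confidence limits may differ, and the function names are hypothetical.

```python
import math


def four_pl(x: float, a: float, b: float, c: float, d: float) -> float:
    """Four-parameter logistic response at log concentration x.

    Assumed parameterization (one of several in common use):
    a and d are the two asymptotes, c is the inflection point on the
    log-concentration scale, and b is the slope factor.
    """
    return d + (a - d) / (1.0 + math.exp(b * (x - c)))


def inverse_four_pl(y: float, a: float, b: float, c: float, d: float) -> float:
    """Solve four_pl(x) = y for x (the inverse prediction on the log scale)."""
    lo, hi = min(a, d), max(a, d)
    # Responses at or beyond an asymptote have no finite inverse.
    if not lo < y < hi:
        raise ValueError("response lies outside the asymptotes; no inverse prediction")
    return c + math.log((a - d) / (y - d) - 1.0) / b


def inverse_line(y: float, intercept: float, slope: float) -> float:
    """Inverse prediction for the straight-line model y = intercept + slope * x."""
    return (y - intercept) / slope


if __name__ == "__main__":
    a, b, c, d = 2.0, 1.5, 0.0, 0.1  # made-up parameter values for illustration
    y0 = four_pl(0.7, a, b, c, d)
    print(round(inverse_four_pl(y0, a, b, c, d), 6))  # 0.7
    print(inverse_line(5.0, 1.0, 2.0))  # 2.0
```

Because X is already a log concentration, the inverse returns a log concentration; converting back to the original scale depends on which log base the data use.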

The input data sets must be in stacked format, that is, with one row per individual response. One column must contain the log concentrations (the X values) and another must contain the responses (the Y values). If you are fitting multiple curves, one or more columns must identify the “By” groups of data, and the same By columns must be present in both the standards and unknowns tables. You can optionally specify a weighting variable.
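As an illustration of the stacked layout, the Python sketch below turns a hypothetical "wide" table (one row per standard, with replicate responses in separate columns) into one row per response, splits the rows into By groups (one curve per group), and checks that an unknowns table carries the same By columns. The column names (`Plate`, `LogConc`, `Rep1`, `Rep2`, `Response`) are made up for the example; inside JMP itself, Tables > Stack performs the reshaping step.

```python
from collections import defaultdict


def stack(rows, by_cols, x_col, rep_cols, y_col="Response"):
    """Turn one-row-per-standard data into one row per individual response."""
    stacked = []
    for row in rows:
        for rep in rep_cols:
            rec = {col: row[col] for col in by_cols}
            rec[x_col] = row[x_col]
            rec[y_col] = row[rep]
            stacked.append(rec)
    return stacked


def group_by(rows, by_cols):
    """Split stacked rows into one list per By group (one calibration curve each)."""
    groups = defaultdict(list)
    for row in rows:
        groups[tuple(row[col] for col in by_cols)].append(row)
    return dict(groups)


def missing_by_columns(unknown_rows, by_cols):
    """Return the By columns that the unknowns rows lack."""
    present = set(unknown_rows[0]) if unknown_rows else set()
    return [col for col in by_cols if col not in present]


if __name__ == "__main__":
    wide = [  # hypothetical standards in wide layout
        {"Plate": "P1", "LogConc": -1.0, "Rep1": 0.21, "Rep2": 0.19},
        {"Plate": "P1", "LogConc": 0.0, "Rep1": 1.02, "Rep2": 1.08},
        {"Plate": "P2", "LogConc": -1.0, "Rep1": 0.25, "Rep2": 0.23},
    ]
    stacked = stack(wide, ["Plate"], "LogConc", ["Rep1", "Rep2"])
    print(len(stacked))  # 6
    print(sorted(group_by(stacked, ["Plate"])))  # [('P1',), ('P2',)]
    print(missing_by_columns([{"Plate": "P1", "Response": 0.6}], ["Plate"]))  # []
```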

You can optionally perform tail filtering on the data. This approach successively removes points from the tails of the data and refits the model, tracking the R-Squared statistic for each fit. The model with the largest R-Squared is retained for final analysis and inverse predictions.
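The tail-filtering idea can be sketched as follows for the straight-line model. The exact trimming scheme the add-in uses is not stated here, so this Python sketch assumes one plausible version: drop up to `max_trim` whole concentration levels from the low end and from the high end independently, refit each time, and keep the fit with the largest R-Squared. The `min_levels` guard (so trimming cannot shrink the data to trivially few points) and all function names are likewise assumptions.

```python
def line_fit(xs, ys):
    """Least-squares straight line; returns (intercept, slope, r_squared)."""
    n = len(xs)
    xbar, ybar = sum(xs) / n, sum(ys) / n
    sxx = sum((x - xbar) ** 2 for x in xs)
    syy = sum((y - ybar) ** 2 for y in ys)
    sxy = sum((x - xbar) * (y - ybar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    r2 = sxy * sxy / (sxx * syy) if syy > 0 else 1.0
    return ybar - slope * xbar, slope, r2


def tail_filter(xs, ys, max_trim=2, min_levels=3):
    """Drop up to max_trim concentration levels from each tail; keep the best R^2 fit."""
    levels = sorted(set(xs))
    best = None
    for lo in range(max_trim + 1):
        for hi in range(max_trim + 1):
            kept = levels[lo:len(levels) - hi]
            if len(kept) < min_levels:
                continue
            lo_x, hi_x = kept[0], kept[-1]
            # Keep every replicate whose X lies within the retained levels.
            pts = [(x, y) for x, y in zip(xs, ys) if lo_x <= x <= hi_x]
            intercept, slope, r2 = line_fit([p[0] for p in pts], [p[1] for p in pts])
            # Strict ">" keeps the first fit found when R^2 ties
            # (the loops start from the untrimmed data).
            if best is None or r2 > best["r2"]:
                best = {"trimmed": (lo, hi), "r2": r2,
                        "intercept": intercept, "slope": slope}
    return best


if __name__ == "__main__":
    xs = [0, 1, 2, 3, 4]
    ys = [0, 1, 2, 3, 10]  # made-up data with a saturated top standard
    print(tail_filter(xs, ys, max_trim=1)["trimmed"])  # (0, 1)
```

Trimming whole levels rather than single rows keeps replicates of a concentration together, which seemed the more natural reading for calibration data; a row-by-row variant would be an equally reasonable assumption.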

To install the add-in, download Calibration Curves.jmpaddin, drag it onto an open JMP window, and click "Install". Two examples (each with two data sets) are also available for download. Some screenshots are below. For more details, click Add-Ins > Calibration Curves > Help after installing the add-in.

I got this error under JMP 10.0.2: `Send Expects Scriptable Object in access or evaluation of 'Send' , nw << SetWindowIcon( "Bivariate" )`. Any ideas why? Thanks.

Hello,

Where can I find the Calibration Curves.jmpaddin file? Thank you.

Have you perhaps uploaded the wrong file?

That looks like binding curves, not calibration curves.

Apologies! Correct file is now uploaded.

Thanks, this is very helpful. I miss the standard error of the inverse prediction (sx0) for a single observation of y in the table. I can back-calculate it from the CI_radius for each row, but it would be nice if it were included in the table.

For repeated observations, sx0 depends on the number of independent replicate measurements, and it would be nice if the inverse prediction could account for the stated Y value being an average of, say, 3 independent values.

/Flemming

I just noticed that Fit Model has a built-in Inverse Prediction, which works for a single value and gives a more suitable CI, but again, a feature for repeated measurements of Y would be nice.

/Flemming
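For the straight-line model, the standard error requested above has a well-known closed form from classical calibration: sx0 = (s_yx / |b|) * sqrt(1/m + 1/n + (y0 - ybar)^2 / (b^2 * Sxx)), where b is the fitted slope, s_yx the residual standard deviation (n - 2 degrees of freedom), n the number of standards, Sxx the sum of squared X deviations, and y0 the mean of m independent replicate responses for the unknown. The Python sketch below implements that formula; it does not cover the four-parameter logistic model, and the helper that backs sx0 out of a confidence-interval radius assumes a symmetric interval x0 ± t * sx0, which may not match how the add-in computes its limits. Function names are hypothetical.

```python
import math


def inverse_prediction_sx0(xs, ys, y0_mean, m=1):
    """Straight-line inverse prediction x0 and its standard error sx0.

    y0_mean is the mean of m independent replicate responses for the unknown.
    """
    n = len(xs)
    xbar, ybar = sum(xs) / n, sum(ys) / n
    sxx = sum((x - xbar) ** 2 for x in xs)
    slope = sum((x - xbar) * (y - ybar) for x, y in zip(xs, ys)) / sxx
    intercept = ybar - slope * xbar
    sse = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))
    s_yx = math.sqrt(sse / (n - 2))  # residual standard deviation, n - 2 df
    x0 = (y0_mean - intercept) / slope
    sx0 = (s_yx / abs(slope)) * math.sqrt(
        1.0 / m + 1.0 / n + (y0_mean - ybar) ** 2 / (slope ** 2 * sxx)
    )
    return x0, sx0


def sx0_from_ci_radius(ci_radius, t_crit):
    """Back out sx0, assuming a symmetric interval x0 +/- t_crit * sx0."""
    return ci_radius / t_crit


if __name__ == "__main__":
    xs = [0, 1, 2, 3, 4]
    ys = [0.1, 0.9, 2.1, 2.9, 4.2]  # made-up standards
    _, s1 = inverse_prediction_sx0(xs, ys, 2.04, m=1)
    _, s3 = inverse_prediction_sx0(xs, ys, 2.04, m=3)
    print(round(s1 / s3, 6))  # 1.5
```

Only the 1/m term depends on the number of replicates, so averaging replicates shrinks sx0 but never below the contribution from the calibration curve itself (the 1/n and slope-uncertainty terms).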

Hi @russ_wolfinger. This add-in is just what I need for my data. The only problem is that it calculates only the first 8 unknowns and leaves the rest blank. How can I fix that?

Hi @ASTA, the add-in somewhat clumsily can handle only 8 unknown rows. To predict more, split the unknowns into multiple tables of no more than 8 rows each and run the add-in separately on each. You can then optionally concatenate the resulting tables into one final table.
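The workaround above amounts to splitting the unknowns into batches of at most 8 rows, predicting each batch, and concatenating the results in their original order. This Python sketch illustrates only that bookkeeping: `predict_batch` is a hypothetical stand-in for running the add-in on one unknowns table, and in JMP itself the split tables would be rejoined with Tables > Concatenate.

```python
from typing import Callable, List, Sequence, TypeVar

T = TypeVar("T")
R = TypeVar("R")


def chunk_rows(rows: Sequence[T], size: int = 8) -> List[Sequence[T]]:
    """Split rows into consecutive batches of at most `size` rows."""
    if size < 1:
        raise ValueError("size must be positive")
    return [rows[i:i + size] for i in range(0, len(rows), size)]


def predict_in_batches(
    rows: Sequence[T],
    predict_batch: Callable[[Sequence[T]], List[R]],
    size: int = 8,
) -> List[R]:
    """Run predict_batch on each batch and concatenate the results in order."""
    results: List[R] = []
    for batch in chunk_rows(rows, size):
        results.extend(predict_batch(batch))
    return results


if __name__ == "__main__":
    unknowns = list(range(20))  # 20 hypothetical unknown rows
    print([len(b) for b in chunk_rows(unknowns)])  # [8, 8, 4]
    doubled = predict_in_batches(unknowns, lambda batch: [r * 2 for r in batch])
    print(doubled[:3])  # [0, 2, 4]
```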

- © 2026 JMP Statistical Discovery LLC. All Rights Reserved.
