- JMP User Community

- :

- Discussions

- :

- Re: Understanding Parallel Assign

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Get Direct Link

- Report Inappropriate Content

Understanding Parallel Assign

I'm trying to understand parallel assign to try and speed up some small operations. I run the following script (warning it ran out of memory for me and locked me up) and I'm seeing it get exponentially slower with the number of rows in a vector.

for(i=1, i<=5, i++,

t = [59760, 71220, 79380, 83820, 3300, 11400, 27900, 39060, 43500, 51300,56220, 60];

t = repeat(t, 10^i);

n = nitems(t);

diff = t[2::n]-t[1::(n-1)];

day_v = diff < 0;

st = HPTime();

day_cum = cumulative Sum([0]|/day_v);

tot_cum = HPTIme() - st;

st= HPTime();

day_break = loc(day_v); // this will give you last row of the day

// make a matrix n x number of day breaks

m = J(n, nitems(day_break), -1);

parallel assign({db = day_break},

// make the item a 1 if it's row is higher than the corresponding row

m[a, b] = a > db[b];

);

m;

// now just sum the matrix

day = VSum(m`); // +1; // if you want the day to start at 1

tot_pa = HPTime() - st;

show(n, tot_pa, tot_cum);

);

I don't expect it to be faster to do the parallel assign than the cumulativesum() but I'm curious on how the parallel assign is working.

I've read this and I'm guessing it's because it's creating copies of very large matrices for each thread?

Does anyone have any other insights into how this might operate and maybe some basic dos and don'ts for parallel assign?

Accepted Solutions

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Get Direct Link

- Report Inappropriate Content

Re: Understanding Parallel Assign

Parallel Assign is doing an amazing job! I think the size of the array m is the actual issue: it is growing by leaps and bounds, or 100X on each iteration.

| i | nitems(m) | Seconds_in_Parallel_Assign | Seconds_in_Nested_Loops |

| 1 | 2,400 | too small for tickseconds() | .18 |

| 2 | 240,000 | .033 | 19 |

| 3 | 24,000,000 | 3.3 | 1951 |

| 4 | 2,400,000,000 | 338 | gave up waiting |

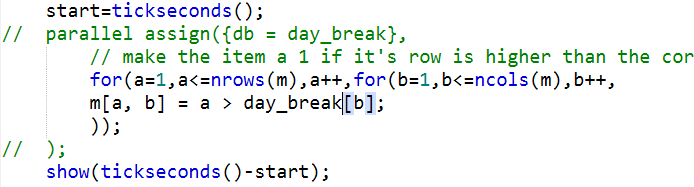

That script,

m[a, b] = a > db[b];

is executed about 7 million times per second (4-CPU 17.5G VirtualBox). I also ran out of memory, and that means paging. Paging will cause a dramatic slow-down, perhaps at i==5.

Column 4, the nested-loop comparison, was made like this (screen capture...computer busy trying to get to 1900 seconds on row 3):

This is the kind of problem parallel assign was designed for. Part of the speed comes from C++ loops rather than JSL loops, and part from threading.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Get Direct Link

- Report Inappropriate Content

Re: Understanding Parallel Assign

I'm not fully sure how Parallel Assign split the tasks and how it copies the matrices. but in general multithreading and parallel computing usually only benefits if the operation to do is more effort than the overhead to split tasks and join the subcalculations results. You asked for dos and don't's and I believe the same thing as for parallel compunting and multithreading applies here:

Multithreading is a bad idea when the problem you are trying to solve is not parallelizable, or when the problem you are trying to compute is so small, that spawning threads will cost more time than computing in a single thread.

If your problem can be broken into parts that can run in parallel, it might be a good idea to do so, but it depends on how much can run in parallel and how easy it is to join the sub problem results. If your algorithm would require multiple joins (to one thread) to break up into new sub problems on new threads, it have to be very computation intensive to be worth the effort to parallelise, if your result, on the other hand, just is a list of the sub problem results joined once, then there is potential.

Of course if you have iterative operations or tasks that depend on the previous task usually you do not gain benefit, more over you block threads with fast tasks sitting there and waiting for the other threads to end to be able to join back the results and use them further.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Get Direct Link

- Report Inappropriate Content

Re: Understanding Parallel Assign

Parallel Assign is doing an amazing job! I think the size of the array m is the actual issue: it is growing by leaps and bounds, or 100X on each iteration.

| i | nitems(m) | Seconds_in_Parallel_Assign | Seconds_in_Nested_Loops |

| 1 | 2,400 | too small for tickseconds() | .18 |

| 2 | 240,000 | .033 | 19 |

| 3 | 24,000,000 | 3.3 | 1951 |

| 4 | 2,400,000,000 | 338 | gave up waiting |

That script,

m[a, b] = a > db[b];

is executed about 7 million times per second (4-CPU 17.5G VirtualBox). I also ran out of memory, and that means paging. Paging will cause a dramatic slow-down, perhaps at i==5.

Column 4, the nested-loop comparison, was made like this (screen capture...computer busy trying to get to 1900 seconds on row 3):

This is the kind of problem parallel assign was designed for. Part of the speed comes from C++ loops rather than JSL loops, and part from threading.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Get Direct Link

- Report Inappropriate Content

Re: Understanding Parallel Assign

Good point, I didn't think about my example not being an apples to apples comparison. Thanks @Craige_Hales , this is really useful.

- © 2024 JMP Statistical Discovery LLC. All Rights Reserved.

- Terms of Use

- Privacy Statement

- About JMP

- JMP Software

- JMP User Community

- Contact