
Tips for making a large data table smaller/lighter/faster

Does anyone have any tips for making a table faster? I'm working with about 300M rows and I'm just wondering if there are any tips for making it not have to work as hard.

For instance, I tried turning a few condition columns (valued 1, 2, 3, 4,...) from character to numeric thinking that ints would be smaller than chars and it actually made the table slower and bigger. Are some data/modeling types intrinsically smaller than others? Or really anything anyone has found that might make working with this thing easier.

I'm currently trying to bin my data columns with int values of 0-255 and just have a frequency column. But if I need the full resolution data, is there anything I can do?
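
The binning idea in the question can be sketched outside JMP as follows. This is only an illustration of collapsing repeated rows into distinct rows plus a frequency count; the function name `to_frequency_table` and the sample rows are hypothetical, and this is plain Python rather than anything JMP itself runs.

```python
from collections import Counter

def to_frequency_table(rows):
    """Collapse repeated rows into sorted (distinct_row, frequency) pairs."""
    counts = Counter(rows)
    return sorted(counts.items())

# Hypothetical sample: two binned columns with values in 0-255.
rows = [(0, 255), (0, 255), (3, 17), (0, 255), (3, 17), (9, 9)]

table = to_frequency_table(rows)
for values, freq in table:
    print(values, freq)

# The frequencies still account for every original row.
print(sum(freq for _, freq in table) == len(rows))
```

The saving depends on how many rows share the same binned values: the table shrinks from one row per observation to one row per distinct combination, at the cost of the full-resolution data.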

Accepted Solutions


Re: Tips for making a large data table smaller/lighter/faster

Try Cols->Utilities->Compress Selected Columns. It will look at the data stored in the selected columns and see if there's a more compact format to store it in.

From JMP Table & Column Compression:

Here's what Compress Selected Columns does:

- It adds a List Check (default order) to a character column if the column has fewer than 255 distinct values.

- It changes numeric columns to the smallest 1-byte, 2-byte, or 4-byte integer type that can store every value in the column. Only columns containing integer values are checked.

- For 1-byte integer, the range of numbers that you can store is from -126 to 127.

- For 2-byte integer, the range of numbers that you can store is from -32,766 to 32,767.

- For 4-byte integer, the range of numbers that you can store is from -2,147,483,646 to 2,147,483,647.

- It does not change columns that already have a List Check.
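
The width selection described in the list above can be sketched roughly like this. It is an illustration only, not JMP's implementation: the ranges are the ones quoted in this post, the 8-byte fallback assumes that an uncompressed numeric column stores 8-byte doubles, and missing values are not handled. The names `INT_RANGES` and `smallest_width` are hypothetical.

```python
# Integer ranges as quoted in the post (bytes, lowest, highest).
INT_RANGES = [
    (1, -126, 127),
    (2, -32_766, 32_767),
    (4, -2_147_483_646, 2_147_483_647),
]
# Assumption: an uncompressed numeric column uses 8 bytes per value.
DEFAULT_NUMERIC_BYTES = 8

def smallest_width(values):
    """Return the bytes per value needed for a column under the quoted ranges."""
    values = list(values)
    # Empty columns and columns with any non-integer value are left at 8 bytes.
    if not values or not all(float(v).is_integer() for v in values):
        return DEFAULT_NUMERIC_BYTES
    lo, hi = min(values), max(values)
    for width, low, high in INT_RANGES:
        if low <= lo and hi <= high:
            return width
    return DEFAULT_NUMERIC_BYTES

rows = 300_000_000  # row count mentioned in the question

for name, column in [("condition codes 1-4", [1, 2, 3, 4]),
                     ("binned values 0-255", range(256)),
                     ("non-integer values", [0.5, 1.25])]:
    width = smallest_width(column)
    print(f"{name}: {width} byte(s) per value, about {rows * width / 1e9:.1f} GB per column")
```

Under these assumptions, a column of condition codes 1 through 4 drops from 8 bytes to 1 byte per value, while values binned to 0-255 exceed the quoted 1-byte range and would need 2 bytes.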


Re: Tips for making a large data table smaller/lighter/faster

Well that makes everything easy. Thanks


Re: Tips for making a large data table smaller/lighter/faster

jeff.perkinson's strategy is the right one to use. The key is to get the byte size right, which Compress Selected Columns does for you. You can also reduce the file size on disk with the "Compress File When Saved" option, found under the upper-leftmost red triangle in the scripts area of the data table. A third option that I like for working with text-based categories is this add-in: https://community.jmp.com/docs/DOC-6111

best,

M


Re: Tips for making a large data table smaller/lighter/faster

Vince,

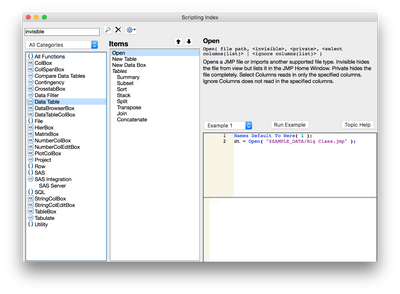

I'm going to leverage the fact that I've seen some posts where you are scripting. If you're already familiar with the types of data present and have some idea of the reports and analyses you want to run on the larger dataset, why not try the Invisible option on open? If you open a data table invisible (and keep it invisible), JMP never has to draw the table, which saves the I/O to the display.

Community Discussion:

https://community.jmp.com/thread/59784

Support Document:

http://www.jmp.com/support/help/Basic_Data_Table_Scripting.shtml#Invisible

Scripting Guide:

Regards,

Nate


Re: Tips for making a large data table smaller/lighter/faster

Nate & Vince,

I have tried that out on a large data table previously. Apart from making a data table invisible, you can also try making the data table private for further optimization (again, there can be trade-offs).

1. Invisible

2. Private

A small part of the following webcast by Brady shows the same thing.

JMP Scripting Language for Experienced JSL Users – Part 1 | JMP

Uday
