Simulating data to generate larger data set for building models

Dear JMP community,

I'm trying to work out a way to generate simulated data to better train a model I'm building.

I have a decent-sized data set -- a few thousand data points -- but would like to generate simulated data that maintains a structure similar to that of the original data in order to improve the model. I'd like to have somewhere around 20K-50K data points with a similar structure in order to have a larger set to train, validate, and test the prediction model.

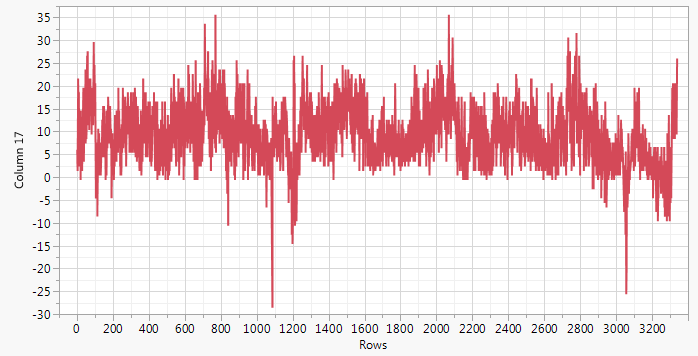

Just for visual purposes, the data looks something like this:

I've tried to work with the "Simulator" feature from the Profiler platform, but the data generated there doesn't keep a structure (variation) similar to the original data -- it gets too "washed out" by the standard deviations of the inputs and response. When I build a model from this set and compare it to the original model from the source data, the original model outscores it because that model maintains the structure of the data in its prediction. The model generated from the simulated data set doesn't, since all structure is essentially lost.

Also, the "Simulate" feature (right clicking a column in a report window) doesn't really get me where I want either.

I've done a bit of research into what people have done with JMP and found a few potential leads, however so far none of them really go after what I'm looking for. If anyone out there has a solution to this, or can point me in the right direction on how to code for it, I would be grateful.

Thanks

Re: Simulating data to generate larger data set for building models

If you have JMP Pro version 14.2, your problem sounds like a perfect application for the Functional Data Explorer. Another option might be a time series modeling approach using some kind of ARIMA construct. There is no need for JMP Pro for the time series approach.

Re: Simulating data to generate larger data set for building models

Hi Peter,

Thanks for the comments. I forgot to add in the original post that I am running JMP Pro 14.1.0.

I might have to wait until the next update roll-out, but I can at least do some reading and follow-up.

Thanks!

Re: Simulating data to generate larger data set for building models

First of all, how would you use the simulated data to improve the model? Is it to have sufficient data to demonstrate the feasibility of a particular modeling approach? Is it to assess the sample size that might be sufficient for a particular model? Is it just a matter of increasing the sample size?

Second of all, it seems like you have a single time series. Is that interpretation correct?

Third of all, the simulation feature of the Profiler is meant to work with a fitted or saved model. You can describe the variation in the predictors and in the responses in terms of parametric distribution models. I don't know if that mechanism works with time series models. (Other experts can chime in here.)

The platform simulation is intended to resample data based on a saved model with a stochastic component to generate the empirical sampling distribution of the chosen statistic in a platform.

I think that I can get a SAS program to simulate time series data that could be adapted to a JMP column formula or script. (No promises.)

Am I on the right track?
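As a rough idea of what a scripted time series simulation can look like, here is a minimal Python sketch of a first-order autoregressive, AR(1), process. This is an illustrative assumption, not the SAS program mentioned above; the function names `simulate_ar1` and `lag1_autocorr` and all parameter values are hypothetical. Each value depends on the previous one, which is the kind of recursion that a column formula referring to the previous row could also express.

```python
import random
import statistics


def simulate_ar1(n, phi, sigma, mean=0.0, seed=None):
    """Simulate n values of x[t] = mean + phi * (x[t-1] - mean) + noise.

    Requires |phi| < 1 so that the process is stationary.
    """
    if not -1.0 < phi < 1.0:
        raise ValueError("phi must satisfy |phi| < 1")
    rng = random.Random(seed)
    # Start the deviation from its stationary distribution to avoid a burn-in period
    dev = rng.gauss(0.0, sigma / (1.0 - phi ** 2) ** 0.5)
    values = []
    for _ in range(n):
        dev = phi * dev + rng.gauss(0.0, sigma)
        values.append(mean + dev)
    return values


def lag1_autocorr(xs):
    """Sample autocorrelation between each value and the one before it."""
    m = statistics.fmean(xs)
    num = sum((a - m) * (b - m) for a, b in zip(xs, xs[1:]))
    den = sum((x - m) ** 2 for x in xs)
    return num / den


# Hypothetical settings: strong dependence on the previous value (phi = 0.9)
series = simulate_ar1(n=20000, phi=0.9, sigma=1.0, mean=50.0, seed=42)
print(round(statistics.fmean(series), 2), round(lag1_autocorr(series), 2))
```

In practice `phi`, `sigma`, and `mean` would have to be estimated from the original data, and an AR(1) model is only appropriate if the data really do depend on the preceding observation.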

Re: Simulating data to generate larger data set for building models

Hi Mark,

Thanks for your comments.

Data generation is very slow: it takes days to weeks to collect the original data, so building a data set of 50K points is not feasible. I am using some of the JMP Pro modeling functions (running Pro 14.1.0) to try to generate a model that not only predicts the data well, but also captures the structure within the data. Having a larger data set that mimics the original structure would be very helpful in improving the model. Increasing the sample size (including larger training, validation, and test sets) and capturing the structure of the data would help with training a better model -- at least I think so.

It's not strictly a time series, although one could view it that way. The data events are not tied to each other in time, necessarily. Another poster suggested a time series analysis, which I will look into.

Unfortunately, when I try to use the simulation feature of the Profiler and generate the larger data sets, the new output data doesn't show any of the related structure of the original data. I can build a new model on it that fits very well, but when I compare the new model to the original model (built on the original data), using the original data, the new model is not as good of a fit.

Sorry I can't be more specific, but I can't discuss the details of the data. I hope my comments make sense to the points you brought up.

Thanks!

For what I want to do, the simulation feature in the Profiler seems the right way to go, but it's not giving me what I'm trying to get. The Platform Simulation is definitely not the right way to go, or at least I can't see how to adjust it to give me what I'm after.

Re: Simulating data to generate larger data set for building models

So the response is a time-dependent signal? Are there predictors or is it the auto-correlation that is important?

What do you mean by "Unfortunately, when I try to use the simulation feature of the Profiler and generate the larger data sets, the new output data doesn't show any of the related structure of the original data"? What is the nature of the desired structure?

How is the data different from a time series?

I don't think that the simulator in the Profiler or the JMP Pro Simulate platform feature will satisfy your needs. A column formula or a script will likely be the most satisfying approach.

You can't disclose some details (understood) so we have to play "twenty questions!"

Re: Simulating data to generate larger data set for building models

Hi Mark,

The data are not time-dependent. Each measurement is independent of the previous one, but in general the data are collected one after another. They could be considered a time series, but not strictly speaking, as they might not technically be sequential. More importantly, though, each measurement is an independent measurement of a response.

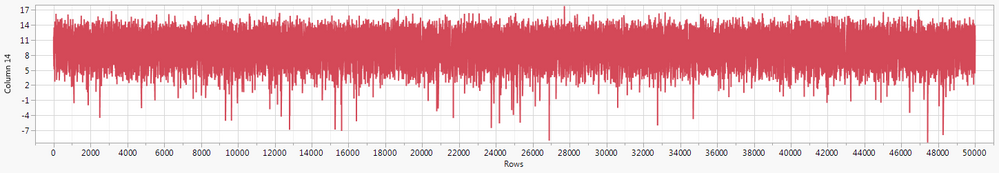

Hopfully, the visual below will explain what I mean about the simulated data losing any structure:

I generated that by opening the simulator in the Profiler, giving each predictor (I have several) a normal distribution with a realistic SD (derived from the actual data), and generating a table with 50K responses. Although the average, maximum, and minimum might be close to those of the original data, the other structure is lost. This new data set doesn't contain the same kind of "long range" structure the original one does, and capturing this structure in a simulated data set is just as important as capturing the actual values of the data. It doesn't have to match exactly, but it should at least have a similar structure (see original post for comparison).
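To illustrate the effect described above, here is a minimal Python sketch (not JMP code, and not the Profiler's actual algorithm). It assumes a hypothetical stand-in series, a slow sinusoid plus noise, because the real data cannot be shared. Drawing every row independently from a normal distribution with the same mean and SD reproduces the summary statistics but removes the row-to-row structure, which shows up as a lag-1 autocorrelation near zero.

```python
import math
import random
import statistics


def lag_autocorr(xs, lag=1):
    """Sample autocorrelation of xs at the given lag."""
    m = statistics.fmean(xs)
    num = sum((xs[i] - m) * (xs[i + lag] - m) for i in range(len(xs) - lag))
    den = sum((x - m) ** 2 for x in xs)
    return num / den


rng = random.Random(0)

# Hypothetical stand-in for the original data: slow cycle plus measurement noise
original = [math.sin(2 * math.pi * i / 500) + rng.gauss(0, 0.3) for i in range(3000)]

# Match only the mean and SD, as when each value is drawn from a normal distribution
mu = statistics.fmean(original)
sd = statistics.stdev(original)
simulated = [rng.gauss(mu, sd) for _ in range(50000)]

print("mean:", round(mu, 3), round(statistics.fmean(simulated), 3))
print("SD:", round(sd, 3), round(statistics.stdev(simulated), 3))
print("lag-1 autocorrelation:", round(lag_autocorr(original), 3),
      round(lag_autocorr(simulated), 3))
```

In this sketch the mean and SD match closely while the autocorrelation drops from a large positive value to roughly zero. A simulation meant to keep the structure would therefore need to model the dependence on row order, or on whatever variable drives it, explicitly.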

I agree that the two simulator options built into JMP are not appropriate. Any suggestions on a specific topic to read up on for the column formula or writing a script? I am not familiar with any of the built-in formulas that could take care of this for me. I am fine with scripting such a thing, but would need to know where to start. I still need to look into the other suggestion from Peter Bartell about the functional data explorer and ARIMA modeling to see if those would handle it.

Thanks!

Re: Simulating data to generate larger data set for building models

Given the observations are not a time series I withdraw my earlier recommendations regarding Functional Data Explorer or time series analysis. Both techniques assume some structure ACROSS time between the observations...and since these observations are independent of each other...using FDE or time series is not recommended.

Re: Simulating data to generate larger data set for building models

Hi Peter,

Does either of those platforms allow for maintaining the long range structure? I'll still read up on them; it might give me some ideas on how to code my own solution.

Thanks!

Re: Simulating data to generate larger data set for building models

@SDF1 : Your phrase 'long range structure' can have many flavors, nuances, and issues attached. For example, seasonality can be one form of structure. ARIMA time series methods are VERY well suited for modeling seasonality effects and long-term trend or cyclical movement in a process. See the classic seriesg.jmp data table in the JMP Sample Data Directory.
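As a small illustration of the seasonality point, the Python sketch below applies seasonal differencing, one ingredient of seasonal ARIMA models, to a made-up series. The series (linear trend plus a period-12 cycle plus noise), the helper name `seasonal_difference`, and all parameter values are assumptions for illustration; they are not taken from seriesg.jmp.

```python
import math
import random
import statistics


def seasonal_difference(xs, period):
    """Return xs[t] - xs[t - period] for every t >= period."""
    return [xs[t] - xs[t - period] for t in range(period, len(xs))]


rng = random.Random(1)
period = 12  # hypothetical monthly seasonality

# Hypothetical series: linear trend + repeating seasonal cycle + noise
series = [
    0.05 * t + 2.0 * math.sin(2 * math.pi * t / period) + rng.gauss(0, 0.2)
    for t in range(240)
]

diffed = seasonal_difference(series, period)

print("SD before differencing:", round(statistics.stdev(series), 3))
print("SD after differencing:", round(statistics.stdev(diffed), 3))
```

After differencing at the seasonal lag, the repeating cycle cancels and the linear trend collapses to a constant offset, leaving a much less variable series for the remaining model terms to describe.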

My suggestion for starters is to read up on JMP/JMP Pro's capabilities within the JMP documentation. Here's a link for FDE:

https://www.jmp.com/support/help/14/functional-data-explorer.shtml

And here's a link for time series analysis:

https://www.jmp.com/support/help/14/time-series-analysis.shtml
