
Question about Stability Analysis (ANCOVA) and the pooled MSE

Good day: I have a question about the Stability Analysis platform in JMP.

For those of you who are not familiar with it, Stability Analysis (in the Analyze/Reliability and Survival/Degradation menu) is widely used in the pharmaceutical industry. In its basic form it is an ANCOVA with one categorical factor (batch) and one covariate (time). From a model selection perspective, starting with the full model, the idea is to test the interaction term (batch*time): if its p-value is < 0.25, the final model used to estimate shelf life is "Model 1" (terms batch, time, and batch*time), as described below. Alpha is set at 0.25 (a regulatory requirement) for batch-related terms to increase the power to detect differences between batches.
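The interaction test described above can be sketched in Python. This is a minimal illustration, not JMP's implementation: it assumes NumPy and SciPy are available, and the function names (`design`, `sse_and_df`, `equal_slopes_test`) are hypothetical. It compares the full model (separate intercepts and slopes) with the reduced model (separate intercepts, common slope) using an extra-sum-of-squares F test and flags Model 1 when p < 0.25.

```python
import numpy as np
from scipy import stats


def design(batch, time, levels, separate_slopes):
    """ANCOVA design matrix: one intercept column per batch, plus either one
    slope column per batch (separate_slopes=True) or one common slope."""
    batch = np.asarray(batch)
    time = np.asarray(time, dtype=float)
    cols = [(batch == b).astype(float) for b in levels]  # batch intercepts
    if separate_slopes:
        cols += [(batch == b) * time for b in levels]  # batch-specific slopes
    else:
        cols.append(time)  # one slope shared by all batches
    return np.column_stack(cols)


def sse_and_df(X, y):
    """Residual sum of squares and residual degrees of freedom of an OLS fit."""
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    return float(resid @ resid), len(y) - int(np.linalg.matrix_rank(X))


def equal_slopes_test(batch, time, y, alpha=0.25):
    """F test of the batch*time term (H0: all batch slopes are equal).
    Returns (F, p, use_model_1); use_model_1 is True when p < alpha."""
    y = np.asarray(y, dtype=float)
    levels = np.unique(batch)
    sse_full, df_full = sse_and_df(design(batch, time, levels, True), y)
    sse_red, df_red = sse_and_df(design(batch, time, levels, False), y)
    f_stat = ((sse_red - sse_full) / (df_red - df_full)) / (sse_full / df_full)
    p = float(stats.f.sf(f_stat, df_red - df_full, df_full))
    return f_stat, p, bool(p < alpha)
```

A full ICH Q1E-style selection would go on to test equality of intercepts when the slopes test is not significant; that step is omitted here.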

In the JMP Help, I see the following:

“When Model 1 (different slopes and different intercepts) is used for estimating the expiration date, the MSE (mean squared error) is not pooled across batches. Prediction intervals are computed for each batch using individual mean squared errors, and the interval that crosses the specification limit first is used to estimate the expiration date.”

My question (which I also posed to JMP) is this: for Model 1, as defined above, why not use the pooled MSE?
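To make the distinction concrete, here is a hedged sketch of a Model 1 shelf-life calculation using either per-batch or pooled MSE. It assumes NumPy and SciPy, a lower specification limit, a grid of candidate times, and a one-sided 95% lower confidence bound on the mean response (the ICH Q1E convention). The help text quoted above speaks of prediction intervals, so treat this as an illustration of the idea rather than a reproduction of JMP's numbers; `shelf_life` and its parameters are hypothetical names.

```python
import numpy as np
from scipy import stats


def shelf_life(batch_data, lower_spec, t_grid, pooled=False, alpha=0.05):
    """Model 1 (separate slopes and intercepts) shelf-life sketch.

    batch_data maps batch -> (time, y). For each batch, a one-sided lower
    (1 - alpha) confidence bound on the mean is computed on t_grid; the earliest
    grid time at which any batch's bound falls below lower_spec is returned.
    pooled=False: each batch's own MSE, df = n_i - 2.
    pooled=True:  MSE pooled over all batches, df = N - 2k.
    """
    t_grid = np.asarray(t_grid, dtype=float)
    fits = []
    for time, y in batch_data.values():
        time, y = np.asarray(time, dtype=float), np.asarray(y, dtype=float)
        slope, intercept = np.polyfit(time, y, 1)  # separate line per batch
        resid = y - (intercept + slope * time)
        fits.append((time, slope, intercept, float(resid @ resid)))

    # Pooled MSE: total residual SS divided by total residual df.
    pooled_df = sum(len(f[0]) - 2 for f in fits)
    pooled_mse = sum(f[3] for f in fits) / pooled_df

    crossings = []
    for time, slope, intercept, sse in fits:
        n = len(time)
        mse, df = (pooled_mse, pooled_df) if pooled else (sse / (n - 2), n - 2)
        sxx = float(((time - time.mean()) ** 2).sum())
        se = np.sqrt(mse * (1.0 / n + (t_grid - time.mean()) ** 2 / sxx))
        bound = intercept + slope * t_grid - stats.t.ppf(1 - alpha, df) * se
        below = np.flatnonzero(bound < lower_spec)
        # If the bound never crosses inside the grid, cap at the last grid time.
        crossings.append(t_grid[below[0]] if below.size else t_grid[-1])
    return float(min(crossings))
```

With `pooled=False`, each batch's bound rests on only n_i - 2 degrees of freedom, so with few time points the t quantile is large; `pooled=True` borrows residual information from all batches, which is justified only under the homogeneity-of-variance assumption raised in the question.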

JMP's response was: "Our development team chose to match Chow’s codes. I also believe this method was implemented to maintain consistency with results from a SAS macro called STAB that was written by FDA researchers."

The JMP Help cites Chow, Shein-Chung (2007), Statistical Design and Analysis of Stability Studies.

I do not have a copy of the referenced text, and the FDA macros mentioned are decades old now.

Assuming homogeneity of variance, can anyone explain why the pooled MSE is not appropriate in this case, when it is used in the other two models considered (Common Intercept/Common Slope and Different Intercepts/Common Slope)?
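For reference, the two variance estimates can be written as follows, with $k$ batches, $n_i$ time points $x_{ij}$ in batch $i$, $N = \sum_i n_i$, and fitted values $\hat y_{ij}$ from a separate straight line $\hat a_i + \hat b_i x$ for each batch (notation introduced here for illustration, not taken from JMP or Chow):

$$
\mathrm{MSE}_i = \frac{1}{n_i - 2}\sum_{j=1}^{n_i}\left(y_{ij} - \hat y_{ij}\right)^2,
\qquad
\mathrm{MSE}_{\text{pooled}} = \frac{\sum_{i=1}^{k}\sum_{j=1}^{n_i}\left(y_{ij} - \hat y_{ij}\right)^2}{\sum_{i=1}^{k}(n_i - 2)} = \frac{\sum_{i=1}^{k}(n_i - 2)\,\mathrm{MSE}_i}{N - 2k}
$$

A one-sided 95% lower bound on the mean for batch $i$ at time $x$ is then

$$
L_i(x) = \hat a_i + \hat b_i x - t_{0.95,\,\nu}\sqrt{\mathrm{MSE}\left(\frac{1}{n_i} + \frac{(x - \bar x_i)^2}{\sum_{j=1}^{n_i}(x_{ij} - \bar x_i)^2}\right)}
$$

with $\mathrm{MSE} = \mathrm{MSE}_i$, $\nu = n_i - 2$ for the individual approach, or $\mathrm{MSE} = \mathrm{MSE}_{\text{pooled}}$, $\nu = N - 2k$ for the pooled approach. Under homogeneity of variance both estimate the same $\sigma^2$, but the pooled estimate carries more degrees of freedom, so $t_{0.95,\,\nu}$ is smaller.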

I'm using JMP v13 (and it was this way in previous versions as well).

Kind Regards

Accepted Solutions


Re: Question about Stability Analysis (ANCOVA) and the pooled MSE

Using the pooled MSE is not necessarily inappropriate for the different-slopes, different-intercepts model; it is simply not how the Stability platform in JMP was implemented. As was previously stated, "Our development team chose to match Chow’s codes. I also believe this method was implemented to maintain consistency with results from a SAS macro called STAB that was written by FDA researchers."

However, if you prefer to analyze the data with a pooled MSE, that is the approach used by the Fit Model platform with the Standard Least Squares personality. For an example of an ANCOVA in the Fit Model platform, see "Analysis of Covariance with Unequal Slopes Example" in the JMP Fitting Linear Models book.
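One way to see why Fit Model gives a pooled MSE: a single least-squares fit with batch, time, and batch*time spans the same column space as fitting a separate line to each batch, so its residual MSE equals the pooled per-batch MSE. The sketch below checks this numerically. It assumes NumPy, uses cell-means coding rather than JMP's own parameterization (the residuals are the same), and the function names are hypothetical.

```python
import numpy as np


def full_ancova_mse(batch, time, y):
    """Residual MSE of ONE least-squares fit of batch, time, and batch*time."""
    batch = np.asarray(batch)
    time = np.asarray(time, dtype=float)
    y = np.asarray(y, dtype=float)
    levels = np.unique(batch)
    # Cell-means coding: an intercept and a slope column per batch, which spans
    # the same space as the batch + time + batch*time effects.
    X = np.column_stack([(batch == b).astype(float) for b in levels]
                        + [(batch == b) * time for b in levels])
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    return float(resid @ resid) / (len(y) - 2 * len(levels))


def pooled_mse(batch, time, y):
    """Sum of per-batch residual SS divided by the sum of per-batch df (n_i - 2)."""
    batch = np.asarray(batch)
    time = np.asarray(time, dtype=float)
    y = np.asarray(y, dtype=float)
    sse, df = 0.0, 0
    for b in np.unique(batch):
        t_b, y_b = time[batch == b], y[batch == b]
        slope, intercept = np.polyfit(t_b, y_b, 1)  # separate line per batch
        r = y_b - (intercept + slope * t_b)
        sse += float(r @ r)
        df += len(t_b) - 2
    return sse / df
```

For any data set with at least three time points per batch, the two functions should agree up to floating-point error.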


Re: Question about Stability Analysis (ANCOVA) and the pooled MSE

I am happy to share that we plan to allow the use of the pooled MSE in the Stability platform in the next version of JMP.


Re: Question about Stability Analysis (ANCOVA) and the pooled MSE

This is excellent news indeed! Thanks, Sam, for the heads-up, and thanks for bringing JMP’s Stability platform in line with best statistical practice and agency/ICH guidance (ICH Q1E).

Kind regards,

Mark


Re: Question about Stability Analysis (ANCOVA) and the pooled MSE

Hi Sam,

That is good news.

Was the pooled MSE implementation included in JMP Version 17, or will it be included in a 17 sub-version (e.g., 17.2, 17.3), or in Version 18?

Thanks

Charlie


Re: Question about Stability Analysis (ANCOVA) and the pooled MSE

Hi Charlie: It is included in version 17 (17.0.0). Good news indeed.


Re: Question about Stability Analysis (ANCOVA) and the pooled MSE

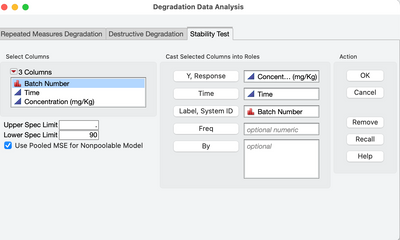

It is in version 17.0. See the screenshot: a new checkbox in the launch dialog turns this feature on.


- © 2026 JMP Statistical Discovery LLC. All Rights Reserved.
