Oneway ANOVA - Means for Oneway Anova

Hi everyone,

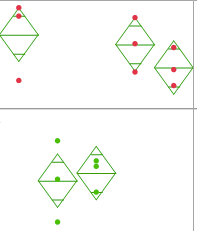

I'm starting to use JMP and I'm doing an ANOVA, using four groups. I created the diamond-shaped means and noticed that the diamonds have the same size for all 4 groups. However, the groups are quite different regarding the distribution of their samples. I also noticed that the CI has the same range for all groups (Upper 95% - Lower 95%).

Is this how it is supposed to be, or do I have to change any settings?

Attached you can find a screenshot of my data.

Thank you!

Re: Oneway ANOVA - Means for Oneway Anova

Assuming that you used the Fit Y by X platform, would it be possible for you to display the means and standard deviations table?

Re: Oneway ANOVA - Means for Oneway Anova

Yes, I did use the Fit Y by X.

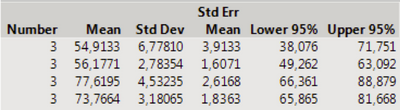

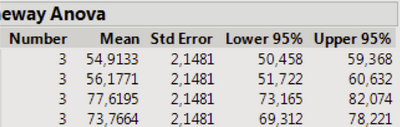

Attached are both the means and standard deviations table and the Means for Oneway Anova table.

Re: Oneway ANOVA - Means for Oneway Anova

Hi @MRT,

The analysis of variance relies on a pooled within-cell error term (the mean square error of the model) as the estimate of the population variance (which is one reason why the assumption of homogeneity of variance is important for an ANOVA). The implication of this is that confidence intervals formed around individual means use the same estimate of the population variance to calculate the standard error, so if you have the same number of individuals in each group you will then have confidence intervals of the same width. I hope this helps!
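
As a rough illustration of the point above, here is a minimal Python sketch (not JSL, and not JMP's own code). The data values, the function name `pooled_ci_half_widths`, and the hard-coded t quantile are all assumptions made up for the example. It shows that when every interval is built from the same pooled MSE and the groups have equal sizes, the half-widths come out identical even though the per-group standard deviations differ.

```python
import math
from statistics import variance

def pooled_ci_half_widths(groups, t_crit):
    """Half-width of each group's CI using the pooled within-group variance (MSE)."""
    n_total = sum(len(g) for g in groups)
    k = len(groups)
    # Pooled variance: within-group sum of squares over N - k degrees of freedom.
    ss_within = sum((len(g) - 1) * variance(g) for g in groups)
    mse = ss_within / (n_total - k)
    # Every group shares the same MSE; only the group size n_i differs.
    return [t_crit * math.sqrt(mse / len(g)) for g in groups]

# Hypothetical data: four groups of five with clearly different spreads.
groups = [
    [10.0, 10.1, 9.9, 10.2, 9.8],
    [12.0, 15.0, 9.0, 14.0, 10.0],
    [11.0, 11.5, 10.5, 11.2, 10.8],
    [8.0, 13.0, 10.0, 7.0, 12.0],
]

# Assumption: two-sided 95% t quantile for N - k = 16 df, read from a t table.
T_CRIT = 2.120

half_widths = pooled_ci_half_widths(groups, T_CRIT)
group_sds = [math.sqrt(variance(g)) for g in groups]

print("per-group SDs:      ", group_sds)
print("pooled CI half-widths:", half_widths)
```

With unequal group sizes the half-widths would differ, but only through `len(g)`, never through each group's own standard deviation.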

Re: Oneway ANOVA - Means for Oneway Anova

You might have gotten the impression that "the groups are quite different regarding their distribution," but you can test that hypothesis with the ANOVA command (test for means not all equal) and with the Unequal Variance command (test for standard deviations not all equal).

Each group of data is a small sample from one of the four populations, so the evidence for any difference (mean or standard deviation) might be relatively weak.

Re: Oneway ANOVA - Means for Oneway Anova

The ANOVA tests the null hypothesis that all the means are equal versus the alternative hypothesis that they are not all equal. The method assumes that the within-group variance is the same in every group and pools the estimate of this variance. This assumption in turn makes the width of the confidence intervals and the height of the mean diamonds (the graphic for the CI) the same when the group sizes are equal.

The Unequal Variance command will test this assumption. If the variances are significantly different, then you should not use the ANOVA result or the mean diamonds to decide about the hypotheses. The same command also performs the Welch version of the procedure, which adjusts for the difference in the variances.
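
For readers who want to see what the Welch adjustment does, here is a small Python sketch of the textbook Welch one-way statistic. It is an illustration only: the function name `welch_anova` and the data are made up, and JMP's internal computation may differ in detail. Each group mean is weighted by n_i / s_i^2, so noisier groups count for less, and the denominator degrees of freedom are estimated from the data rather than fixed at N - k.

```python
from statistics import mean, variance

def welch_anova(groups):
    """Return (F, df_num, df_den) for Welch's unequal-variance one-way test."""
    k = len(groups)
    sizes = [len(g) for g in groups]
    means = [mean(g) for g in groups]
    # Weight each group by n_i / s_i^2 instead of pooling the variances.
    weights = [n / variance(g) for n, g in zip(sizes, groups)]
    total_w = sum(weights)
    weighted_grand_mean = sum(w * m for w, m in zip(weights, means)) / total_w

    numerator = sum(
        w * (m - weighted_grand_mean) ** 2 for w, m in zip(weights, means)
    ) / (k - 1)
    lam = sum((1 - w / total_w) ** 2 / (n - 1) for w, n in zip(weights, sizes))
    denominator = 1 + 2 * (k - 2) / (k ** 2 - 1) * lam

    f_stat = numerator / denominator
    df_den = (k ** 2 - 1) / (3 * lam)  # approximate, usually not an integer
    return f_stat, k - 1, df_den

# Hypothetical data: four groups of five with unequal spreads.
groups = [
    [10.0, 10.1, 9.9, 10.2, 9.8],
    [12.0, 15.0, 9.0, 14.0, 10.0],
    [11.0, 11.5, 10.5, 11.2, 10.8],
    [8.0, 13.0, 10.0, 7.0, 12.0],
]

f_stat, df_num, df_den = welch_anova(groups)
print(f"Welch F = {f_stat:.3f} on {df_num} and {df_den:.2f} df")
```

The p-value would come from an F distribution with these degrees of freedom, which is what JMP reports as Prob > F for the Welch test.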

Re: Oneway ANOVA - Means for Oneway Anova

Many thanks. My confusion stems from the multitude of different tests in the "Tests that the Variances are Equal" report. I have O'Brien, Brown-Forsythe, etc. I am not sure which of those tests is relevant or how I should interpret them (all are greater than 0.05). I also have Welch's test at the bottom, which has a Prob > F of 0.0187.

Re: Oneway ANOVA - Means for Oneway Anova

You can get much of what you want by looking at the Help screen for the "Test that the Variances are Equal" output. Go to the Pull Down Menus for the output page (it will be hidden behind the bar that goes all the way across the top of the window) and select the "?" tool. Then move to the report you want to see help on, and click on it. JMP will open up the help page for that output.

Re: Oneway ANOVA - Means for Oneway Anova

(Amended 2/22/19)

We recommend that you use the Levene test if the errors are symmetrically distributed, the Brown-Forsythe test otherwise.

That recommendation is based on simulation studies aimed at maximizing the power of the test (i.e., achieving the lowest type II error rate, or false negative rate).
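
To show how the two recommended tests differ, here is a hedged Python sketch using the usual textbook definitions. The helper names `oneway_f` and `spread_test_f` and the data are hypothetical, and JMP's implementation may differ in detail. Both tests run an ordinary one-way ANOVA on absolute deviations; Levene centers each group at its mean, while Brown-Forsythe centers at its median, which is more robust to skewed data.

```python
from statistics import mean, median

def oneway_f(groups):
    """Classic one-way ANOVA F statistic (between MS over within MS)."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    grand_mean = sum(sum(g) for g in groups) / n_total
    group_means = [mean(g) for g in groups]
    ss_between = sum(
        len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, group_means)
    )
    ss_within = sum(
        (x - m) ** 2 for g, m in zip(groups, group_means) for x in g
    )
    return (ss_between / (k - 1)) / (ss_within / (n_total - k))

def spread_test_f(groups, center):
    """ANOVA F on absolute deviations from each group's center."""
    deviations = []
    for g in groups:
        c = center(g)
        deviations.append([abs(x - c) for x in g])
    return oneway_f(deviations)

# Hypothetical data: four groups of five with unequal spreads.
groups = [
    [10.0, 10.1, 9.9, 10.2, 9.8],
    [12.0, 15.0, 9.0, 14.0, 10.0],
    [11.0, 11.5, 10.5, 11.2, 10.8],
    [8.0, 13.0, 10.0, 7.0, 12.0],
]

levene_f = spread_test_f(groups, mean)            # center = group mean
brown_forsythe_f = spread_test_f(groups, median)  # center = group median
print(f"Levene F = {levene_f:.3f}, Brown-Forsythe F = {brown_forsythe_f:.3f}")
```

Each statistic is compared against an F distribution with k - 1 and N - k degrees of freedom; a large Prob > F means there is no strong evidence that the variances differ.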
