Model-building via Best-Subsets Regression in JMP 12

hi everyone.

i'm interested in knowing what the workflow is in arriving at a Reduced Model in JMP 12.

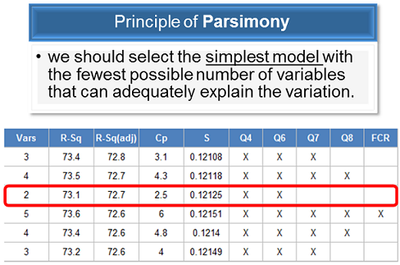

this might be of help in explaining where i'm coming from - i was taught data analysis using Minitab. in six sigma black belt class (and also reinforced as a method at work), it was taught (and practiced) that to get the Reduced Model (for continuous variables) using the principle of Parsimony we have to:

1.) fit a regression model with all factors

2.) remove factors with VIF >= 5

3.) perform best-subsets approach

4.) select subset/s with Mallows' Cp statistic < (k+1)

5.) select subset/s with high R-Sq(adj)

6.) select the simplest model with the fewest possible number of variables that can adequately explain the variation (figure below).
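For reference, the arithmetic behind steps 3 to 5 can be sketched outside any particular package. The snippet below is a minimal illustration in Python with NumPy, not JMP's or Minitab's implementation. It assumes an intercept in every model, reads the `k` in the Cp rule as the number of predictors in the candidate subset (so `k+1` is its parameter count `p`), and uses made-up data; the names `sse` and `best_subsets` are hypothetical helpers.

```python
from itertools import combinations
import numpy as np

def sse(X, y):
    """Residual sum of squares for an OLS fit of y on X plus an intercept."""
    A = np.column_stack([np.ones(len(y)), X])
    beta, *_ = np.linalg.lstsq(A, y, rcond=None)
    resid = y - A @ beta
    return float(resid @ resid)

def best_subsets(X, y, names):
    """Score every non-empty subset of predictors by Mallows' Cp and adjusted R^2."""
    n, k = X.shape
    sst = float(((y - y.mean()) ** 2).sum())
    mse_full = sse(X, y) / (n - k - 1)  # error variance estimated from the full model
    results = []
    for size in range(1, k + 1):
        for cols in combinations(range(k), size):
            p = size + 1  # parameters in this subset, including the intercept
            s = sse(X[:, list(cols)], y)
            cp = s / mse_full - (n - 2 * p)
            adj_r2 = 1 - (s / (n - p)) / (sst / (n - 1))
            results.append({"terms": [names[c] for c in cols],
                            "p": p, "cp": cp, "adj_r2": adj_r2})
    return results

# Made-up data: y depends on x1 and x2 only; x3 is pure noise.
rng = np.random.default_rng(0)
X = rng.normal(size=(40, 3))
y = 3 + 2 * X[:, 0] - 1.5 * X[:, 1] + rng.normal(scale=0.5, size=40)

# List the smallest models first; "*" marks subsets whose Cp does not exceed p.
for row in sorted(best_subsets(X, y, ["x1", "x2", "x3"]),
                  key=lambda r: (r["p"], -r["adj_r2"])):
    flag = "*" if row["cp"] <= row["p"] else " "
    print(f"{flag} {', '.join(row['terms']):<12} Cp={row['cp']:7.2f} adjR2={row['adj_r2']:.3f}")
```

Note that the full model always has Cp equal to its own `p` by construction, so the Cp screen is only informative for the smaller subsets.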

in our manual, the procedure above is titled "Steps Involved in Model Building".

is this workflow doable in JMP 12? how?

i know that JMP has its own procedures on Model Building. i'm currently reading and doing walk-throughs via the Visual Six Sigma book (currently @ Chapter 6) and by watching Building Better Models and other resources.

appreciate all the guidance as i transition to JMP.

- Randy, JMP newbie

Accepted Solutions

Re: Model-building via Best-Subsets Regression in JMP 12

To build on Ian's comments: as a way of picking best subsets, you would be best off using cross-validation. Cross-validation will help ensure you end up with the best possible model with the fewest terms. Two-way K-fold validation is a good place to start, and if you have JMP Pro, three-way validation is even better. A reasonable rule of thumb: with fewer than 50 runs use K-fold, and with more than 50 use three-way cross-validation.

The link below gives some discussion on cross-validation in JMP, and if you select the "Next" tab it will take you to Generalized Regression, which Ian also mentions.
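To make the K-fold idea concrete, here is a minimal sketch in Python with NumPy of how candidate subsets can be compared by held-out prediction error. It is an illustration of the general technique only, not how JMP implements its validation options; the function name `kfold_rmse`, the choice of 5 folds, and the data are all assumptions made up for the example.

```python
import numpy as np

def kfold_rmse(X, y, k=5, seed=0):
    """Root-mean-square prediction error of an OLS model, estimated by K-fold CV."""
    n = len(y)
    idx = np.random.default_rng(seed).permutation(n)
    sq_err = 0.0
    for hold in np.array_split(idx, k):
        train = np.setdiff1d(idx, hold)  # every row not in the held-out fold
        A_tr = np.column_stack([np.ones(len(train)), X[train]])
        beta, *_ = np.linalg.lstsq(A_tr, y[train], rcond=None)
        A_te = np.column_stack([np.ones(len(hold)), X[hold]])
        sq_err += float(((y[hold] - A_te @ beta) ** 2).sum())
    return (sq_err / n) ** 0.5

# Made-up data: y depends on the first two columns only.
rng = np.random.default_rng(0)
X = rng.normal(size=(40, 3))
y = 3 + 2 * X[:, 0] - 1.5 * X[:, 1] + rng.normal(scale=0.5, size=40)

# Compare nested candidate subsets on held-out error rather than in-sample fit.
for cols in ([0], [0, 1], [0, 1, 2]):
    print(cols, round(kfold_rmse(X[:, cols], y), 3))
```

The subset to prefer is the smallest one whose held-out error is not meaningfully worse than the best, which is the parsimony argument expressed in terms of prediction rather than Cp.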

Re: Model-building via Best-Subsets Regression in JMP 12

I should say that there are nearly as many ways to build a useful model as there are people building them. In the end, it's the utility of the model that you end up with that is important, not so much how you got there.

JMP supports many approaches to model building, and with a little JSL, more or less any protocol that you want to follow can be supported and streamlined. The one you describe above is perfectly feasible in JMP. Note that, for step 2, to get the VIFs you need to find the 'Parameter Estimates' table in the Fit Model report, right-click on it, and select 'Columns > VIF'.
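For anyone curious what a VIF column reports, the standard definition is VIF_j = 1 / (1 - R²_j), where R²_j comes from regressing predictor j on all the other predictors. The sketch below shows that calculation in Python with NumPy; it is an illustration of the textbook formula rather than JMP's code, and the function name `vif` and the data are made up for the example.

```python
import numpy as np

def vif(X):
    """Variance inflation factor for each column of X (predictors only, no intercept column)."""
    n, k = X.shape
    out = []
    for j in range(k):
        target = X[:, j]
        # Regress predictor j on an intercept plus all the other predictors.
        others = np.column_stack([np.ones(n), np.delete(X, j, axis=1)])
        beta, *_ = np.linalg.lstsq(others, target, rcond=None)
        resid = target - others @ beta
        r2 = 1 - (resid @ resid) / ((target - target.mean()) ** 2).sum()
        out.append(float(1 / (1 - r2)))
    return out

# Made-up data: x3 is nearly a copy of x1, so both should show inflated VIFs.
rng = np.random.default_rng(0)
x1 = rng.normal(size=50)
x2 = rng.normal(size=50)
x3 = x1 + 0.1 * rng.normal(size=50)
for name, value in zip(["x1", "x2", "x3"], vif(np.column_stack([x1, x2, x3]))):
    print(name, round(value, 2))
```

One design caveat: when two predictors are collinear, both exceed the threshold together, so removing them one at a time and recomputing is usually safer than dropping every factor with VIF >= 5 in a single pass.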

Finally, regarding the modeling capabilities of JMP, the VSS book is not really definitive, just a reasonable starting point for the experience of the likely readership. If you want to have an overall view of what's possible, you should certainly check out the 'Generalized Regression' personality of Fit Model (plus supporting material). I'm not sure exactly what's covered in 'Building Better Models', but the forthcoming 2nd Edition of the VSS book has a little of this.

Re: Model-building via Best-Subsets Regression in JMP 12

Let me build a bit more on my colleagues Bill and Ian's comments by encouraging you to examine residual plots along the way to your 'useful' model. Lastly, use your process knowledge to judge the utility of your model, not just the regression statistics you cite. If your model suggests that water runs uphill, well, that suggests the model is somehow flawed, and blindly following VIFs, R-squared values, etc. is nigh on useless.

Re: Model-building via Best-Subsets Regression in JMP 12

thanks for all the input and for helping me in my transition to JMP, everyone!

i'm unlearning methods from the previous software and learning new ones in this community.

much appreciated.
