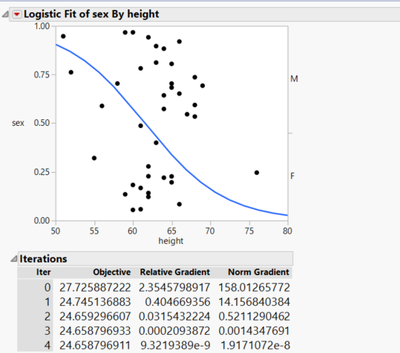

I have some questions about the logistic regression (LR) model in JMP.

- How does JMP initialize the values of w and b for the logistic regression loss function?

- How is the Relative Gradient derived? What is the formula for this value?

- What is the difference between Relative Gradient and NormGradient?

- Which parameter does this gradient value represent: w or b?

- Why is there no mention of the gradient update for the b (intercept) parameter?

I just want to reproduce the iterations the way JMP performs them, and the questions above are what is keeping me from doing so. I look forward to your reply!
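For context, here is roughly the kind of iteration I am trying to reproduce. It is only a minimal sketch under my own assumptions: a single predictor, Newton-Raphson updates, starting values of zero for both b and w, and the plain Euclidean norm of the gradient as the convergence measure. I do not know whether any of these match what JMP does, and the data and the name `newton_logistic` are made up for illustration.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def newton_logistic(x, y, max_iter=25, tol=1e-8):
    """Fit p = sigmoid(b + w*x) by Newton-Raphson on the negative log-likelihood."""
    b, w = 0.0, 0.0  # assumed starting values, not necessarily JMP's
    history = []
    for _ in range(max_iter):
        p = [sigmoid(b + w * xi) for xi in x]
        # Gradient of the negative log-likelihood: one component per parameter.
        g_b = sum(pi - yi for pi, yi in zip(p, y))
        g_w = sum((pi - yi) * xi for pi, yi, xi in zip(p, y, x))
        grad_norm = math.sqrt(g_b ** 2 + g_w ** 2)
        history.append((b, w, grad_norm))
        if grad_norm < tol:
            break
        # 2x2 Hessian entries.
        s = [pi * (1.0 - pi) for pi in p]
        h_bb = sum(s)
        h_bw = sum(si * xi for si, xi in zip(s, x))
        h_ww = sum(si * xi * xi for si, xi in zip(s, x))
        det = h_bb * h_ww - h_bw * h_bw
        # Newton step: solve H * step = g using the closed-form 2x2 inverse.
        step_b = (h_ww * g_b - h_bw * g_w) / det
        step_w = (h_bb * g_w - h_bw * g_b) / det
        b -= step_b
        w -= step_w
    return b, w, history

# Made-up, non-separable toy data.
x = [0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 3.5, 4.0]
y = [0, 0, 1, 0, 1, 0, 1, 1]

b, w, history = newton_logistic(x, y)
for i, (b_i, w_i, gn) in enumerate(history):
    print(f"iter {i}: b={b_i:.6f} w={w_i:.6f} |gradient|={gn:.3e}")
```

In this sketch the gradient is a vector with one entry for b and one for w, and both are updated in the same step, which is why I am confused that the JMP report shows only a single gradient value per iteration.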