
JMP User Community: Discussions

Solve problems, and share tips and tricks with other JMP users.


Gauge variation, linearity and bias

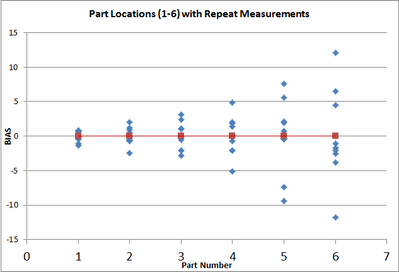

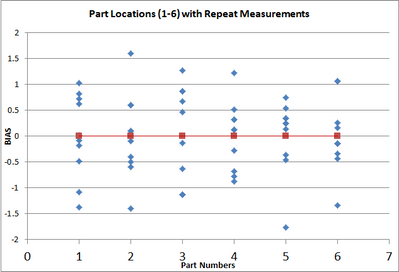

When you perform a gauge linearity study and the mean bias increases as you move up the measurement range, the gauge is said to have a linearity problem, indicated by the positive slope in the bias-versus-scale graph. If the slope of the bias graph is zero, so that the bias is only random, the gauge is said not to have a linearity problem. But what do you call it when the average bias shows no linearity problem over the range of the gauge, yet the variation of the repeated measurements increases along the scale? In other words, if you take 10 different measurements at each point along the scale, the plotted measurements fall inside a cone shape. Do you just call it a gauge repeatability problem with a special cause of variation, or is there a name for it?
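To make the distinction concrete, here is a minimal Python sketch, not taken from JMP or from any MSA standard. It separates the two patterns by fitting one slope to the mean bias and another to the standard deviation of the repeated readings at each reference value. The function names and the tiny data set are made up for illustration.

```python
from statistics import mean, stdev


def least_squares_slope(xs, ys):
    """Slope of the ordinary least-squares line through (xs, ys)."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    return sxy / sxx


def bias_profile(readings_by_reference):
    """Return (references, mean bias, SD of readings) per reference value.

    readings_by_reference maps each reference value to its repeated
    readings (hypothetical layout; at least two readings per reference).
    """
    refs = sorted(readings_by_reference)
    mean_bias = [mean([r - ref for r in readings_by_reference[ref]]) for ref in refs]
    spread = [stdev(readings_by_reference[ref]) for ref in refs]
    return refs, mean_bias, spread


# Illustrative data: readings are centered on the reference (no bias trend),
# but the scatter widens as the reference value grows (the "cone" shape).
readings = {
    10: [9.9, 10.0, 10.1],
    20: [19.8, 20.0, 20.2],
    30: [29.7, 30.0, 30.3],
}

refs, mean_bias, spread = bias_profile(readings)
bias_slope = least_squares_slope(refs, mean_bias)    # close to zero here
spread_slope = least_squares_slope(refs, spread)     # clearly positive here
print(f"bias slope: {bias_slope:.4f}, spread slope: {spread_slope:.4f}")
```

A bias slope near zero together with a positive spread slope is the cone-shaped case described above: the average bias is flat, but repeatability degrades along the scale.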


Re: Gauge variation, linearity and bias

It's a good question! You are correct that technically, in the measurement standard documents, linearity is defined as the absence of variability due to bias over the measurement range. The JMP course notes for the MSA course say, "Some authors include the absence of variability due to reproducibility over the measurement range." In my experience, companies that notice this problem call it linearity, and other companies don't notice the problem, so they never even ask the question. I have not heard of another term for it, particularly in MSA.


Re: Gauge variation, linearity and bias

Here is my problem. A gauge R&R is based on ANOVA, either two-way or three-way. One of ANOVA's assumptions is equal variances. So if the variance changes over the range of the measurement device, will this cause a problem with the gauge R&R results?


Re: Gauge variation, linearity and bias

Short answer: no.

Long answer: The ANOVA you are fitting is probably a random effects ANOVA, with the Part effect being random. Maybe you have other random terms as well, such as time effects, operator effects, materials, gauges, setups, etc. Maybe one of those effects should be the nominal value of the part. Then you can model the variance as a function of the nominal value (and other terms) and you don't have to assume equal variances. If the nominal values are really far apart, consider doing a separate analysis for each range. For example, if you have parts at 10, 1000, and 10000, you might perform three separate analyses.
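As a rough illustration of the "separate analysis for each range" idea, the Python sketch below estimates variance components per nominal value with a balanced one-way random-effects ANOVA (method of moments), treating Part as the only random effect. This is a simplified stand-in, not what the JMP platforms compute internally. The function names, data layout, and numbers are assumptions made for the example.

```python
from statistics import mean


def variance_components(readings_by_part):
    """Method-of-moments estimates for a balanced one-way random-effects ANOVA.

    readings_by_part maps a part ID to its repeated readings
    (hypothetical layout; every part needs the same number of readings).
    """
    groups = list(readings_by_part.values())
    k, n = len(groups), len(groups[0])
    if k < 2 or n < 2 or any(len(g) != n for g in groups):
        raise ValueError("need at least 2 parts with equal replicates (>= 2 each)")

    part_means = [mean(g) for g in groups]
    grand_mean = mean([x for g in groups for x in g])

    ms_between = n * sum((m - grand_mean) ** 2 for m in part_means) / (k - 1)
    ms_within = sum(
        (x - m) ** 2 for g, m in zip(groups, part_means) for x in g
    ) / (k * (n - 1))

    return {
        "repeatability": ms_within,  # within-part variance
        "part": max(0.0, (ms_between - ms_within) / n),  # truncated at zero
    }


def analyze_by_nominal(data):
    """Run one separate analysis per nominal value."""
    return {nominal: variance_components(parts) for nominal, parts in data.items()}


# Illustrative data only: two parts near each nominal value, two readings each.
data = {
    10: {"A": [9.9, 10.1], "B": [10.4, 10.6]},
    1000: {"C": [990.0, 1010.0], "D": [1040.0, 1060.0]},
}

results = analyze_by_nominal(data)
for nominal, comps in results.items():
    print(nominal, comps)
```

Because each nominal value gets its own repeatability estimate, no equal-variance assumption is made across the range. A real study would also carry operator and interaction terms, which this sketch omits.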

In JMP, if all your effects are random, you can use the EMP or Variability platforms to get those variance components. If some effects are fixed and others are random, use Analyze > Fit Model to specify the mixed model and get the variance components.

I hope this helps!
