Entropy

Hello,

I want to compute some basic descriptive statistics on time series data, such as mean, median, and kurtosis, but also the degree of variability over time, which is described in the literature as "Entropy". I cannot find this in JMP Pro. Does anybody have a suggestion for how to calculate it?

Lu

Re: Entropy

Can you provide a literature citation or two for 'entropy'? Truth be told, I understand the definition of entropy from a physics point of view, but it's a term unfamiliar to me with respect to statistics, data visualization, or analysis. Maybe with a citation we could suggest a path forward in JMP?

Re: Entropy

Hi,

I attached a recent publication in which entropy statistics were used on time series data; see feature engineering (page 4).

As I understand it, entropy describes the change in variability over time.

Regards

Ludo

Re: Entropy

Hi Lu,

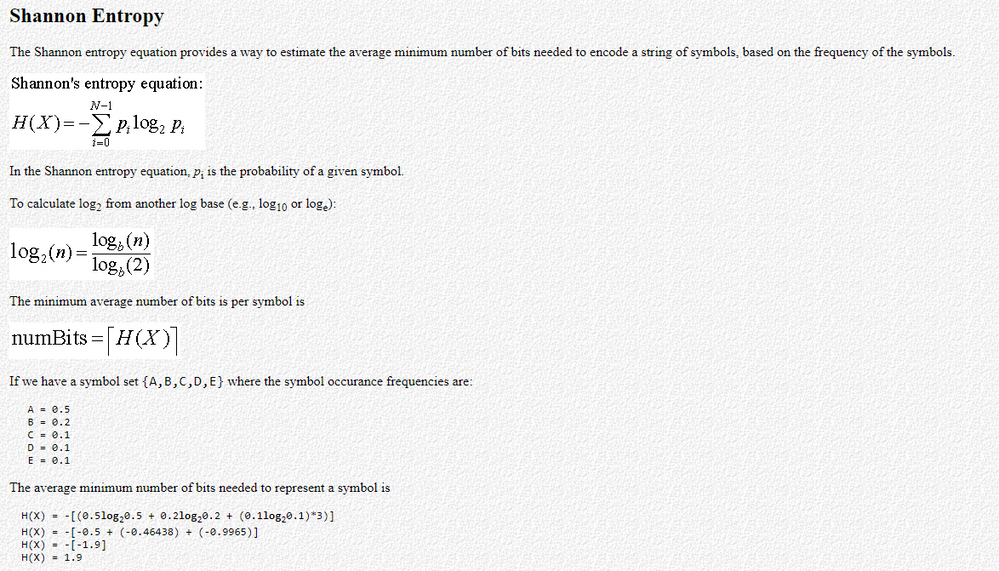

Here is the simplest description I could find of how to calculate Shannon Entropy (see file attached and source information):

- Calculate the relative frequency (probability) of occurrence of each symbol / case = px (Distribution > Frequencies > Make Into Table)

- In the resulting table ==> New column "B" = px * Log2 (px)

- In the resulting table ==> New column "H" = - Col Sum (:B) or, if you prefer a whole number of bits: -ROUND (Col Sum (:B),0)
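
For anyone who wants to check the table result outside JMP, the three steps above can be sketched in Python. This is only an illustration: the function name `shannon_entropy` and the sample strings are made up for the example and are not part of the attached file.

```python
from collections import Counter
from math import log2


def shannon_entropy(symbols):
    """Return the Shannon entropy, in bits, of a sequence of discrete symbols."""
    counts = Counter(symbols)  # step 1: count each symbol / case
    n = len(symbols)
    # step 2: column "B" = px * log2(px), with px the relative frequency
    b = [(c / n) * log2(c / n) for c in counts.values()]
    # step 3: "H" = -(sum of column B)
    return -sum(b)


# Two equally likely symbols carry exactly one bit per symbol.
print(shannon_entropy("AABB"))  # 1.0
```

A sequence with a single repeated symbol gives 0 bits, and four equally likely symbols give 2 bits, which is a quick sanity check on the column formulas.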

Best,

TS

Re: Entropy

I forgot to mention that this is only applicable to discrete symbols / cases, because the entropy of a sequence of continuous data (i.e. real numbers) is essentially infinite.
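
A rough way to see this is to bin a continuous series and apply the same formula to the bin labels: the result depends on the bin width and keeps growing as the bins shrink. The sketch below uses simulated normal data and a hypothetical helper `binned_entropy`; it is not the entropy measure from the attached publication.

```python
import math
import random
from collections import Counter


def binned_entropy(values, bin_width):
    """Shannon entropy (bits) of values after grouping them into bins of bin_width."""
    counts = Counter(math.floor(v / bin_width) for v in values)
    n = len(values)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())


random.seed(0)  # simulated data, purely for illustration
series = [random.gauss(0.0, 1.0) for _ in range(50_000)]

widths = (1.0, 0.1, 0.01)
entropies = [binned_entropy(series, w) for w in widths]
for w, h in zip(widths, entropies):
    print(f"bin width {w}: {h:.2f} bits")
```

Each tenfold reduction in bin width should add roughly log2(10), about 3.3 bits, until the bins are so narrow that most hold a single observation. So any entropy number for continuous data has to be reported together with the binning, or other estimator, that produced it.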

Best,

TS

Re: Entropy

Cool! .zip files do this. If you've ever compressed a .zip a second time, you might have noticed it does not help; the zipped data already has nearly maximum entropy (looks random) and can't be compressed much more and may get a little bigger to hold the header information.
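
A small sketch of the same effect, using Python's `zlib` module (DEFLATE, the compression method commonly used inside .zip archives). The word list and sizes are invented for the example.

```python
import random
import zlib

random.seed(0)
# Hypothetical low-entropy input: random draws from a small vocabulary.
words = [b"mean", b"median", b"kurtosis", b"entropy",
         b"series", b"signal", b"noise", b"time"]
data = b" ".join(random.choices(words, k=5000))

once = zlib.compress(data)   # first pass removes most of the redundancy
twice = zlib.compress(once)  # second pass has almost nothing left to remove

print(len(data), len(once), len(twice))
```

The first pass should shrink the text substantially, while the second pass should leave the size about the same or a few bytes larger.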

Re: Entropy

Hi,

Thanks for the support, but this is not what I needed. My data are indeed continuous.

Regards

Lu

Re: Entropy

Sorry to hear that you were thinking of something different. For my education, could you point me to a reference or resource that defines or uses Entropy for continuous variables?

Best

TS

Re: Entropy

Hi Thierry,

See my reply to P Bartell and the attached file above.

Regards

Lu

Re: Entropy

I'll continue searching for a possible reference on how entropy is calculated for continuous variables.

Best,

TS
