Comparison of slopes: cases more complex than 1:1 comparison

Hello,

I'm hoping to get some help with a problem that I would imagine JMP can handle, but that I am not experienced enough to do myself currently...

I am trying to compare slopes of regression lines and conduct a test for a significant difference with a resulting p-value in cases more complex than a simple 1:1 comparison of 2 slopes.

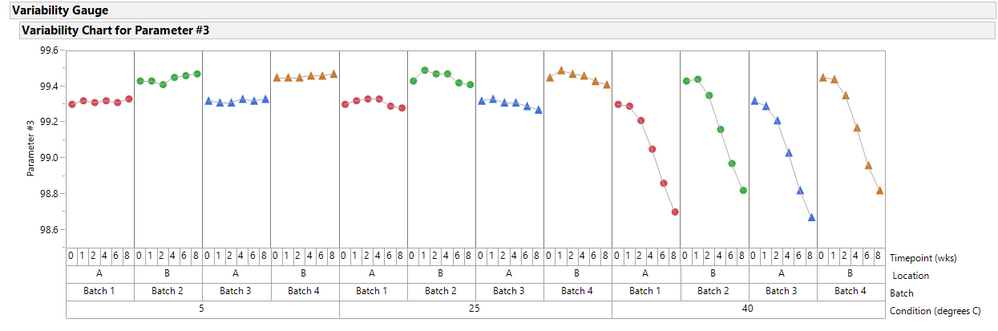

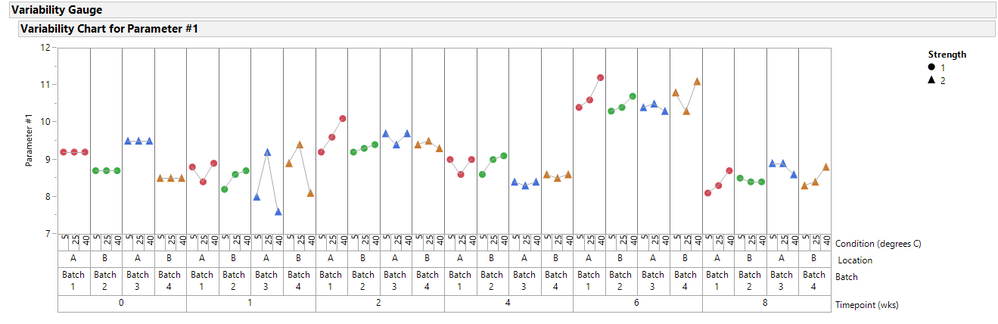

To make the problem easier to describe, and the suggestions easier for me to interpret, I have attached a JMP file containing a typical data layout. The problem is from the pharma industry and involves comparing degradation rates (slopes over time) for drug products stored under stressed conditions at 3 separate temperatures: 5, 25, and 40 °C. The slope comparison is between batches produced at each of two sites/locations. The question is whether a significant difference in the slope of a specific degradation parameter (data for parameters 1-3 are given here) at a specific temperature (5 °C for the first case, then 25 and 40 °C separately) exists between Batch 1 and Batch 2 (likewise for Batch 3 vs Batch 4).

I seem to get the expected results for simple cases (i.e., Batch 1 vs Batch 2 only) but not when >1 batch from a site is involved (i.e. multiple product strengths as given here), suggesting a pooling of data or errors is required, which I am not doing. In these cases, we can assume that batches that differ by strength are chemically comparable (and possibly pooled, so Batch 1 and 3, and also 2 and 4 or potentially all 4 batches).

I'm sure tests for the acceptability of pooling the data/errors across batches exist, after which a model could be analyzed in a similar fashion to the simpler 1:1 case. However, I don't know the approaches well enough to get started down that road...

Any thoughts on the general strategy (when pooling allowed, etc) as well as specific steps in JMP to conduct these types of more complex comparisons and watchouts or test assumptions would be greatly appreciated.

Accepted Solutions

Re: Comparison of slopes: cases more complex than 1:1 comparison

I recommend that you use Fit Least Squares (Analyze > Fit Model) with a linear predictor including the intercept, X, Batch, and X*Batch. The X*Batch term in this model tests whether the slope for X depends on Batch. If it does, then you can use post hoc tests to ask about particular batches.

See Help > Books > Fitting Linear Models for more details.
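For readers who want to see what the X*Batch test does outside of JMP, here is a minimal Python sketch. It is not the Fit Model platform and does not reproduce its report; it only illustrates the underlying idea by comparing a model with a common slope (intercept, X, Batch) against one with batch-specific slopes (adding X*Batch) using an extra-sum-of-squares F test. The function names `fit_rss` and `slope_homogeneity_test` are hypothetical, and the data are made up for illustration, not taken from the attached file.

```python
import numpy as np


def fit_rss(X, y):
    """Ordinary least squares; return residual sum of squares and column count."""
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    return float(resid @ resid), X.shape[1]


def slope_homogeneity_test(x, y, batch):
    """F test of the X*Batch interaction (hypothetical helper, >= 2 batches)."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    batch = np.asarray(batch)
    levels = np.unique(batch)

    # Indicator columns for every batch except the first (reference) level
    dummies = np.column_stack([(batch == lv).astype(float) for lv in levels[1:]])
    intercept = np.ones_like(x)

    # Reduced model: separate intercepts, one common slope
    reduced = np.column_stack([intercept, x, dummies])
    # Full model: separate intercepts and separate slopes (adds X*Batch)
    full = np.column_stack([reduced, dummies * x[:, None]])

    rss_r, p_r = fit_rss(reduced, y)
    rss_f, p_f = fit_rss(full, y)
    df_num = p_f - p_r          # number of interaction columns
    df_den = len(y) - p_f       # residual degrees of freedom of the full model
    F = ((rss_r - rss_f) / df_num) / (rss_f / df_den)

    # The p-value needs the F distribution; SciPy is optional here
    try:
        from scipy.stats import f as f_dist
        p_value = float(f_dist.sf(F, df_num, df_den))
    except ImportError:
        p_value = None
    return F, df_num, df_den, p_value


# Made-up example: two batches measured at the same timepoints,
# with different true degradation slopes (assumed values, illustration only)
rng = np.random.default_rng(0)
time = np.tile([0.0, 1.0, 3.0, 6.0, 9.0, 12.0], 2)
batch = np.repeat(["Batch 1", "Batch 2"], 6)
true_slope = np.where(batch == "Batch 1", -0.5, -1.5)
response = 100.0 + true_slope * time + rng.normal(0.0, 0.2, size=time.size)

F, df_num, df_den, p_value = slope_homogeneity_test(time, response, batch)
print(f"F({df_num}, {df_den}) = {F:.1f}, p = {p_value}")
```

With more than two batches, the same F test asks whether any slopes differ; comparing specific pairs afterwards is the post hoc step mentioned above. To analyze one temperature and one parameter at a time, the data would be subset before calling the function.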

Re: Comparison of slopes: cases more complex than 1:1 comparison

I suggest you look at your data globally using a Variability Graph. The first figure depicts Parameter #3. The degradation is not exactly linear. Figure 2 depicts Parameter #1, but here I dragged Timepoint to be the first grouping variable. The results for week 6 look strange. This does not look like random variation; could it be a metrology or calibration error?

I like to look at the data before starting any analysis, to check for anomalies. The script for the variability graphs is attached.
