
Check for multicollinearity in Ordinal logistic regression

Hello everyone! I’m running a nominal logistic regression model (JMP v13) with 8 independent variables. I am concerned about collinearity and confounding. How can I check for these two issues? Can you evaluate multicollinearity with the variance inflation factor (VIF) in JMP, and if so, how? Thanks.

Accepted Solutions


Re: Check for multicollinearity in Ordinal logistic regression

Under Help > Statistics Index, the entry for multicollinearity points to the Variance Inflation Factors (VIFs) in the Fit Model platform as the way to determine whether multicollinearity is present:

"In regression where the regressors are highly correlated, a measure of interest is how much the variance of the estimator is inflated compared with what its variance would be without the effect of the other regressors. In Fit Model the VIF is available by context-clicking in the Parameter Estimates report table."
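As a rough illustration of the quoted definition, here is a minimal sketch that computes VIFs outside JMP, assuming Python with NumPy and a purely numeric predictor matrix. It uses the textbook formula VIF_j = 1 / (1 - R_j^2), where R_j^2 comes from regressing predictor j on all the other predictors plus an intercept. The function name `vif` and the synthetic data are hypothetical, and the code is not taken from JMP's implementation.

```python
import numpy as np

def vif(X):
    """Hypothetical helper: variance inflation factor for each column of X.

    VIF_j = 1 / (1 - R_j^2), where R_j^2 is the R^2 from regressing
    column j on all the other columns plus an intercept.
    """
    X = np.asarray(X, dtype=float)
    n, k = X.shape
    out = np.empty(k)
    for j in range(k):
        y = X[:, j]
        # Design for the auxiliary regression: intercept + the other predictors
        others = np.column_stack([np.ones(n), np.delete(X, j, axis=1)])
        beta, *_ = np.linalg.lstsq(others, y, rcond=None)
        resid = y - others @ beta
        r2 = 1.0 - (resid @ resid) / np.sum((y - y.mean()) ** 2)
        out[j] = 1.0 / (1.0 - r2)
    return out

# Synthetic example (not the poster's data)
rng = np.random.default_rng(0)
x1 = rng.normal(size=200)
x2 = x1 + 0.1 * rng.normal(size=200)   # nearly a copy of x1
x3 = rng.normal(size=200)              # unrelated to x1 and x2
X = np.column_stack([x1, x2, x3])
print(vif(X))
```

Here x1 and x2 get large VIFs while x3 stays near 1. Cutoffs such as 5 or 10 are common rules of thumb for flagging a predictor, not fixed thresholds.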


Re: Check for multicollinearity in Ordinal logistic regression

A couple of suggestions for you:

1. Take full advantage of version 13's improved design diagnostic capabilities: treat your matrix of predictor variables as if it were a designed experiment (even though it was not) and use the DOE -> Design Diagnostics -> Evaluate Design platform. That platform's report gives a range of diagnostics, including confounding and correlations among your predictor variables, based on the predictor matrix. The platform does not require that the predictor matrix actually come from a designed experiment. One key feature from a confounding perspective is that you can specify the exact model form (main effects, two-way interactions, etc.) you'd like to estimate.

2. Use the Multivariate platform to explore correlations among the predictor variables, both pairwise and more complex ones using, say, principal components analysis.
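Suggestion 2 can be sketched numerically as follows, assuming Python with NumPy and a synthetic predictor matrix (not the poster's data). Pairwise correlations come from the correlation matrix, and the principal components of the standardized predictors come from its eigen-decomposition. In this made-up example x2 is nearly a linear combination of x1 and x3, so the pairwise correlations look only moderate, yet one eigenvalue is close to zero.

```python
import numpy as np

rng = np.random.default_rng(1)
n = 300
# Synthetic predictors: x2 is almost exactly 0.7*x1 + 0.7*x3
x1 = rng.normal(size=n)
x3 = rng.normal(size=n)
x2 = 0.7 * x1 + 0.7 * x3 + 0.05 * rng.normal(size=n)
x4 = rng.normal(size=n)
X = np.column_stack([x1, x2, x3, x4])

# Pairwise correlations among the predictors
R = np.corrcoef(X, rowvar=False)

# Principal components of the standardized predictors = eigen-decomposition of R
eigvals, eigvecs = np.linalg.eigh(R)          # eigenvalues in ascending order
condition_index = np.sqrt(eigvals.max() / eigvals)

np.set_printoptions(precision=3, suppress=True)
print("Correlation matrix:\n", R)
print("Component variances (eigenvalues):", eigvals)
print("Condition indices:", condition_index)
# The eigenvector of the smallest eigenvalue shows which predictors
# take part in the near-linear dependency (here x1, x2 and x3).
print("Loadings of smallest component:", eigvecs[:, 0])
```

A near-zero eigenvalue (large condition index) signals a multi-variable dependency that a pairwise correlation table can miss.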


Re: Check for multicollinearity in Ordinal logistic regression

--Erick


Re: Check for multicollinearity in Ordinal logistic regression

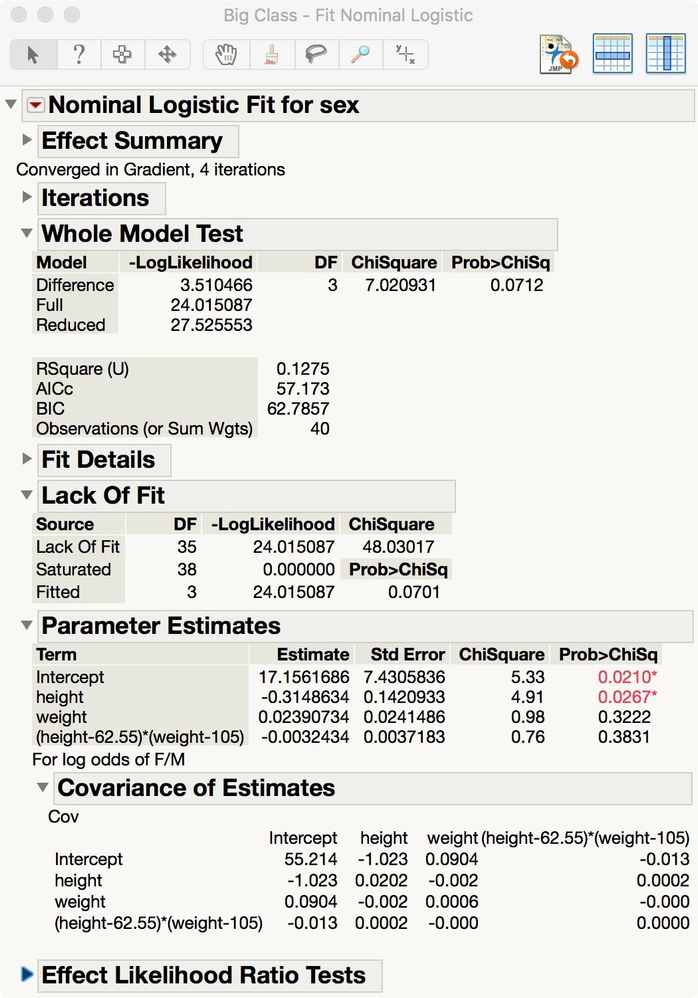

I assume that you are using the Analyze > Fit Model launch with the Nominal Logistic personality. My example from the Big Class sample data table fits sex = intercept + weight + height + weight * height. The result is:

Tthe Covariance of Estimates is available. The diagonal is the variance of the estimates and the off-diagonal are the covariances, which would be zero if there were no collinearity.
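Rescaling the Covariance of Estimates to correlations of the estimates can make the collinearity easier to read. The sketch below assumes Python with NumPy. It fits a logistic regression by plain Newton-Raphson (a hand-rolled stand-in, not JMP's fitting routine) on synthetic data where x1 and x2 are strongly correlated and x3 is independent, then converts the covariance matrix, the inverse of X'WX, into a correlation matrix. The helper names are hypothetical.

```python
import numpy as np

def logistic_fit(X, y, n_iter=25):
    """Hypothetical helper: maximum-likelihood logistic regression by Newton-Raphson.

    Returns the estimates and their covariance matrix, i.e. the inverse of
    the Fisher information X'WX with W = diag(p * (1 - p)).
    """
    beta = np.zeros(X.shape[1])
    for _ in range(n_iter):
        p = 1.0 / (1.0 + np.exp(-X @ beta))
        info = X.T @ (X * (p * (1.0 - p))[:, None])   # X'WX
        beta = beta + np.linalg.solve(info, X.T @ (y - p))
    p = 1.0 / (1.0 + np.exp(-X @ beta))
    info = X.T @ (X * (p * (1.0 - p))[:, None])
    return beta, np.linalg.inv(info)

def cov_to_corr(cov):
    """Rescale a covariance matrix: corr_ij = cov_ij / (sd_i * sd_j)."""
    sd = np.sqrt(np.diag(cov))
    return cov / np.outer(sd, sd)

# Synthetic data (not Big Class)
rng = np.random.default_rng(4)
n = 500
x1 = rng.normal(size=n)
x2 = x1 + 0.3 * rng.normal(size=n)   # strongly correlated with x1
x3 = rng.normal(size=n)              # independent of x1 and x2
X = np.column_stack([np.ones(n), x1, x2, x3])   # intercept + 3 predictors
eta = 0.5 + 0.8 * x1 - 0.4 * x2 + 0.6 * x3
y = (rng.random(n) < 1.0 / (1.0 + np.exp(-eta))).astype(float)

beta, cov = logistic_fit(X, y)
corr = cov_to_corr(cov)
np.set_printoptions(precision=2, suppress=True)
print(corr)
# corr[1, 2] (x1 vs x2 estimates) is strongly negative;
# corr[1, 3] and corr[2, 3] are close to zero.
```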

Notice that the predictors are automatically centered to minimize the correlation of the estimates.
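The effect of centering on the correlation between a main effect and an interaction term can be sketched as below, assuming Python with NumPy and synthetic, positively correlated predictors loosely shaped like height and weight (not the Big Class data). The thread does not spell out exactly how JMP centers; this sketch assumes each continuous factor is centered at its sample mean before the product term is formed.

```python
import numpy as np

rng = np.random.default_rng(2)
n = 1000
# Synthetic predictors with means far from zero (assumption, not Big Class)
h = rng.normal(62.0, 3.0, size=n)
w = 2.0 * h + rng.normal(0.0, 15.0, size=n)

# Interaction built from the raw values vs. from mean-centered values
raw_inter = w * h
centered_inter = (w - w.mean()) * (h - h.mean())

r_raw = np.corrcoef(w, raw_inter)[0, 1]
r_cent = np.corrcoef(w, centered_inter)[0, 1]
print(f"corr(weight, weight*height), raw:      {r_raw:.3f}")
print(f"corr(weight, weight*height), centered: {r_cent:.3f}")
```

Centering only reparameterizes the model: with an intercept and both main effects present, the fitted values are the same, but the product term no longer carries a near-copy of the main effects, so the estimates are much less correlated.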


Re: Check for multicollinearity in Ordinal logistic regression

Mark,

Thanks. All the independent variables in your example are continuous. Can we expect this result to hold when the model also (or only) uses categorical variables? Could you also explain a bit more what you mean by "Notice that the predictors are automatically centered to minimize the correlation of the estimates"? Which predictors, and centered in relation to what?

--Erick


Re: Check for multicollinearity in Ordinal logistic regression

I know this post is a few years old now, but I just encountered the same issue: a journal reviewer insisted on seeing VIFs to check for collinearity in a logistic regression. None of the Generalized Regression distributions (other than Normal) give VIFs, but because the VIF is entirely a function of the X matrix, it doesn't matter what kind of Y variable you have. So my solution was to fit exactly the same model using Fit Model with the Standard Least Squares personality and use the VIF values from the Parameter Estimates table.
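The point that the VIF depends only on the X matrix can be checked numerically. The sketch below, assuming Python with NumPy and synthetic data, computes VIFs as the diagonal of the inverse of the predictors' correlation matrix, a standard equivalent of 1 / (1 - R_j^2). No response variable appears anywhere, so the same numbers apply whether these predictors feed a least-squares or a logistic model. The function name is hypothetical.

```python
import numpy as np

def vif_from_corr(X):
    """Hypothetical helper: VIFs as the diagonal of the inverse correlation
    matrix of the predictors. Only X enters; the response is never used."""
    R = np.corrcoef(np.asarray(X, dtype=float), rowvar=False)
    return np.diag(np.linalg.inv(R))

# Synthetic predictors (not the poster's data)
rng = np.random.default_rng(3)
n = 250
x1 = rng.normal(size=n)
x2 = 0.9 * x1 + 0.4 * rng.normal(size=n)
x3 = rng.normal(size=n)
X = np.column_stack([x1, x2, x3])

v = vif_from_corr(X)
print("VIFs:", np.round(v, 3))

# Cross-check the VIF for x1 with the auxiliary regression of x1 on the others
A = np.column_stack([np.ones(n), x2, x3])
coef, *_ = np.linalg.lstsq(A, x1, rcond=None)
resid = x1 - A @ coef
r2 = 1.0 - (resid @ resid) / np.sum((x1 - x1.mean()) ** 2)
print("1/(1 - R^2) for x1:", round(1.0 / (1.0 - r2), 3))
```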


- © 2026 JMP Statistical Discovery LLC. All Rights Reserved.
