Splitting distribution in two, with selected median and sigma for each

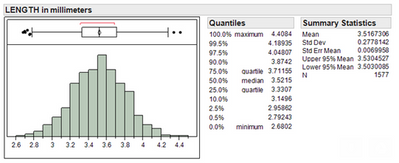

I have a distribution of part lengths in millimetres: roughly 5000 parts in total, with a median of 3.53 (see below).

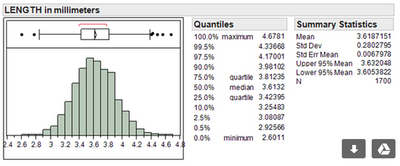

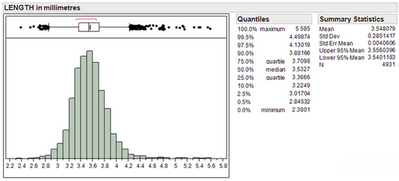

What I want to do is create two distributions from this one and run an evaluation on each. One distribution needs a median of 3.52 and the other a median of 3.62, both with a similar sigma (0.28). A part cannot go into both groups; it must be in one or the other. Each distribution should look like the ones below:

3.52 Eval distribution

3.62 Eval distribution

I currently have a method of doing this, but it's quite long and tedious. Is there a quick way of doing this in JMP?

Re: Splitting distribution in two, with selected median and sigma for each

What is your current method? I would guess that it can be scripted and automated.

Given the variety of possible subset combinations (there are multiple ways to generate subsets A and B that meet your criteria for median and sigma), I am not sure there is one generic answer.

What is your goal for these subsets?

Re: Splitting distribution in two, with selected median and sigma for each

Scott, I am wondering why you want to split the distribution. Does the time-ordered data show a system in or out of statistical control? Are the parts coming from different processes or machines? Why does one median have to be 3.52 and the other 3.62? What is the purpose of the study?

Best regards,

Steve

Re: Splitting distribution in two, with selected median and sigma for each

I need to split the distribution because both evals will go through the same testing scenarios (the next stage), and we want to see what effect length has on the next set of test results. To do this, I must provide a set of part numbers for the 3.52 distribution and a set of part numbers for the 3.62 distribution in order to keep track of the eval groups.

Is there a way of using the random() function to create a distribution of parts with a set mean and sigma, and from that select the two groups of parts so that no part ends up in both groups?

Re: Splitting distribution in two, with selected median and sigma for each

Scott, check my understanding of what you are trying to do: You have a population of almost 5000 parts. You want to create two sub-populations from the parent population having medians of 3.52 and 3.62, and having similar sigma values (0.28). Then you will evaluate the two sub-populations to determine if the difference in medians has an effect on a succeeding test. If this is what you are trying to do, then I would question the statistical validity of your approach. If this is the case, then I suggest you build a DOE with length being an independent variable. I have been using Definitive Screening Designs and have found them to be very efficient.

Another point regarding the validity of your approach: If your process for producing the 5000 parts shows a "reasonable degree of statistical control" for length, then your approach is certainly not valid. However, if the process is not stable (and the good-looking histogram does NOT confirm whether or not your process is stable, because the histogram loses time-orderliness), then you can select samples from periods of high and/or low length values and compare them for evaluation. However, I would still want to do a properly conducted DOE.

Re: Splitting distribution in two, with selected median and sigma for each

Yes, you pretty much understand what I'm trying to do. I agree with your methods, but there are a few underlying reasons why it must be done this way. Basically, we can't do a DOE at this stage, and we know length plays the main role in the next testing phase. Samples selected only from high parts and low parts would not have the same sigma for length that the next testing stage would see if the full production quantity were tested, so that's why we would like our sigmas to be similar.

Re: Splitting distribution in two, with selected median and sigma for each

I don't think you will get the answers you need with your current approach. HOWEVER, you might try Recursive Partitioning and/or Neural Networks to "mine" the data to understand the effects of length. I have found this to be a useful approach to understanding key variables from the profiler(s) developed.

Re: Splitting distribution in two, with selected median and sigma for each

I'm not sure I totally understand, but attached is a file that uses the random formula to generate the two different data sets that you described. In addition, I have added the stacked data table.

Re: Splitting distribution in two, with selected median and sigma for each

Thanks Lou, but I need to take a set of real data and split it into two. Your method creates two random datasets with a set mean and sigma. Nearly there.
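One possible way to combine the two ideas in this thread (random target values plus real parts) is sketched below in Python rather than JSL. It draws target lengths from a normal distribution for each group and assigns the closest real part that has not been used yet, so no part lands in both groups. Everything named here is an assumption for illustration: the function names, the part IDs, the group size of 200, and the synthetic parent data (the thread does not give the parent sigma, so 0.40 is made up). The groups also have to be small relative to the 5000 parts, otherwise the pool runs out of parts near the targets and the medians and sigmas drift.

```python
import bisect
import random
import statistics


def pick_nearest(pool, target):
    """Remove and return the (length, part_id) in the sorted pool closest to target."""
    i = bisect.bisect_left(pool, (target,))
    if i == len(pool):
        i -= 1  # target is above every remaining part
    elif i > 0 and target - pool[i - 1][0] <= pool[i][0] - target:
        i -= 1  # the left neighbour is at least as close
    return pool.pop(i)


def split_into_groups(parts, centers, sigma, n_per_group, seed=0):
    """Assign real parts to non-overlapping groups that mimic Normal(center, sigma).

    parts: dict of part_id -> measured length (hypothetical input format).
    """
    if n_per_group * len(centers) > len(parts):
        raise ValueError("not enough parts for the requested group sizes")
    rng = random.Random(seed)
    pool = sorted((length, pid) for pid, length in parts.items())
    groups = {c: [] for c in centers}
    # Alternate between groups so neither gets first pick of the whole pool.
    for _ in range(n_per_group):
        for c in centers:
            target = rng.gauss(c, sigma)  # random target length for this group
            groups[c].append(pick_nearest(pool, target))
    return groups


# Synthetic stand-in for the real measurements (assumed values, not from the thread).
demo_rng = random.Random(1)
parts = {f"P{i:04d}": demo_rng.gauss(3.53, 0.40) for i in range(5000)}

groups = split_into_groups(parts, centers=[3.52, 3.62], sigma=0.28, n_per_group=200)
for center, members in groups.items():
    lengths = [length for length, _ in members]
    print(center, len(members), statistics.median(lengths), statistics.stdev(lengths))
```

Each entry in `groups` is a list of `(length, part_id)` pairs, so the part numbers for each eval can be read straight off it. The achieved median and sigma will only approximate the targets, so it is worth checking them (and re-running with a different seed or group size) before committing parts to an eval.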
