how to know what rows are my validation dataset when I run model with random split (JMP PRO)

Accepted Solutions

Re: how to know what rows are my validation dataset when I run model with random split (JMP PRO)

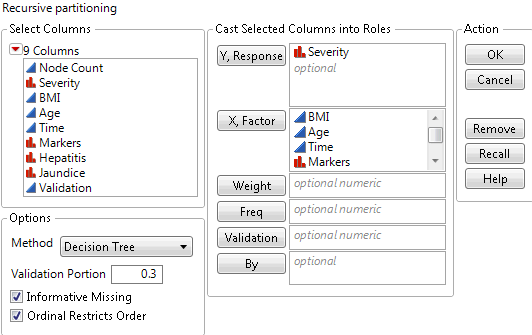

I think I see what you are doing, but I'm not 100% sure. It sounds like you might be setting the validation percentage in the modeling platform's launch dialog? What I am suggesting is that BEFORE modeling, you use JMP Pro's Make Validation Column capability to create a column in the data table whose row values are training, validation, and, optionally, test. Then, in the model launch window, place that column in the Validation role and run the platform as you normally would. See the attached example data table for what I'm suggesting. I didn't include predictor columns, but it shows what I was suggesting in my first reply.
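For anyone who wants to see the idea outside of JMP, here is a minimal sketch in Python. It is not JSL and not how Make Validation Column is implemented. The function name `make_validation_column`, the 60/20/20 proportions, and the seed are all assumptions chosen for illustration. The point is only that once the split is stored as a column, you can read off which rows are in the validation set.

```python
import random

def make_validation_column(n_rows, train=0.6, validation=0.2, seed=1234):
    """Return one label per row: 'Training', 'Validation', or 'Test'.

    Hypothetical helper for illustration; proportions and seed are assumptions.
    """
    n_train = round(n_rows * train)
    n_valid = round(n_rows * validation)
    # Build the exact number of labels for each set, then shuffle them.
    labels = (["Training"] * n_train
              + ["Validation"] * n_valid
              + ["Test"] * (n_rows - n_train - n_valid))
    random.Random(seed).shuffle(labels)  # fixed seed makes the split repeatable
    return labels

labels = make_validation_column(10)

# Because the split is stored per row, the validation rows can be listed directly
# (1-based, to match data table row numbers).
validation_rows = [i + 1 for i, lab in enumerate(labels) if lab == "Validation"]
print(validation_rows)
```

Because the labels live in a column rather than inside the modeling platform, the same split can be reused across different models.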

Re: how to know what rows are my validation dataset when I run model with random split (JMP PRO)

You're using the "Validation Portion" option in the Partition launch dialog.

Unfortunately, I don't see a way to identify which rows are used for validation with this method.

If you've got JMP Pro, I agree with @Peter_Bartell. You can create a Validation column to specify which rows are used for validation.

Re: how to know what rows are my validation dataset when I run model with random split (JMP PRO)

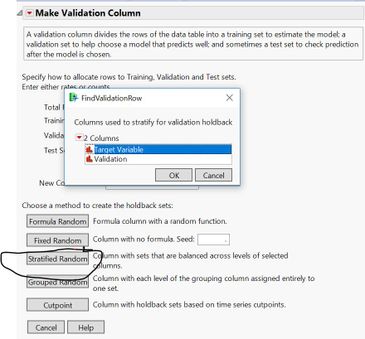

The other thing I'll suggest: if you have a categorical response, a general best practice when creating the Validation column with JMP Pro's Make Validation Column capability is to select the Stratified Random option circled in the screenshot, then select your target variable. This forces the split percentages you choose to apply within each level of the categorical variable, which helps guard against model bias.
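To make the stratified idea concrete, here is a rough Python sketch (again, not JSL and not JMP's actual algorithm). The function name `stratified_validation_column`, the 25% validation share, and the toy target values are assumptions for illustration. The split is done separately inside each level of the target, so every level contributes the same proportion to the validation set.

```python
import random
from collections import defaultdict

def stratified_validation_column(target, validation=0.25, seed=1234):
    """Label rows 'Training' or 'Validation', splitting within each target level.

    Hypothetical helper for illustration; share and seed are assumptions.
    """
    rng = random.Random(seed)
    rows_by_level = defaultdict(list)
    for row, level in enumerate(target):
        rows_by_level[level].append(row)

    labels = ["Training"] * len(target)
    for rows in rows_by_level.values():
        rng.shuffle(rows)                       # randomize within this level only
        n_valid = round(len(rows) * validation)
        for row in rows[:n_valid]:
            labels[row] = "Validation"
    return labels

# Toy categorical target with unbalanced levels (made-up data).
target = ["yes"] * 8 + ["no"] * 4
labels = stratified_validation_column(target)

for level in ("yes", "no"):
    n = sum(1 for t, lab in zip(target, labels) if t == level and lab == "Validation")
    print(level, n)
```

With a purely random split, a rare level could end up almost entirely in one set; splitting within each level avoids that.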

Re: how to know what rows are my validation dataset when I run model with random split (JMP PRO)

Off the top of my head, one visual and interactive way to see which rows fall into each of the three possible Validation column categories is to use the Distribution platform to create two distributions: one for your target variable, the other for the validation column. Then just click each bar in the distribution plot of the Validation column and watch which rows get selected in the data table and how the target variable's distribution changes. Other methods, such as row selection, would work too, but I always prefer visual methods over non-visual ones.
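The non-visual version of the same check can be sketched in a few lines of Python. This is only an illustration of the logic, not JSL; the helper names `rows_in` and `target_counts` and the six-row toy data are made up. Selecting one category of the validation column gives you its row numbers and the target distribution within it, which is what clicking a bar shows interactively.

```python
from collections import Counter

# Toy columns (made-up data): a stored validation column and a categorical target.
validation = ["Training", "Validation", "Training", "Test", "Validation", "Training"]
target = ["yes", "no", "no", "yes", "yes", "no"]

def rows_in(category, column):
    """1-based row numbers whose value in `column` equals `category`."""
    return [i + 1 for i, value in enumerate(column) if value == category]

def target_counts(category, column, target):
    """Counts of each target level among rows in the given category."""
    return Counter(t for value, t in zip(column, target) if value == category)

selected = rows_in("Validation", validation)
counts = target_counts("Validation", validation, target)
print(selected)       # rows that belong to the validation set
print(dict(counts))   # target distribution within those rows
```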

Re: how to know what rows are my validation dataset when I run model with random split (JMP PRO)

Peter,

Thanks for your reply. But the validation column you mention cannot be found in the data table or in the results when the random split is used with a categorical target variable. Row selection can only be used for numerical target variables, where the results show a plot of actual vs. predicted (by highlighting data points in the plot). For a categorical target variable, the results have ROC, matrix, etc., and I could not identify which rows are from the validation part and which rows are from the training part...

Re: how to know what rows are my validation dataset when I run model with random split (JMP PRO)

Peter and Jeff,

Thank you all for the answers and solutions.

I used and understand the features you mentioned.

Just wondering how JMP splits the data randomly (in models such as random forest, boosted tree, etc.), and whether, from JMP's random split, I could identify rows/observations as Training/Validation for a categorical target variable.

From Jeff's post, it looks like JMP doesn't have a way to identify them.
