Discussions

Solve problems, and share tips and tricks with other JMP users.- JMP User Community

What do you do with your models after you build them in JMP?

- Do you deploy models built with JMP or JMP Pro into production? And if so where do those models go? Which systems do you use?

- Do you use JMP models as-is or do you re-program them into other languages? If so what languages?

- If there was one thing that JMP could do to make it easier to take a model built in JMP and put it into a production system, what would that be?

Accepted Solutions

Re: What do you do with your models after you build them in JMP?

Thanks for all the input. This was useful in developing the Formula Depot in JMP Pro.

Please continue to respond here to suggest additional destinations for models exported from the Formula Depot.

Re: What do you do with your models after you build them in JMP?

Jeff, thank you for posting your timely question on the JMP Community site. It would help if the SAS DATA step code were also available as:

- a compiled executable that a package like Dakota (Design Analysis Kit for Optimization and Terascale Applications), DaVinci, or Galaxy could call.

- an Excel-format file.

I am not a developer, so options like these would let me create a model in JMP and easily use it in readily accessible environments.

Re: What do you do with your models after you build them in JMP?

I find it very useful to export the prediction profiler to Flash format. To do so, I extract the model formula and use it in the Profiler platform. Unfortunately, this is not available for nominal or ordinal logistic regression.

In addition, logistic regression takes too long to calculate odds ratios and confidence intervals when medium-sized files are involved.

For example, the script below takes quite a few minutes to run, and JMP shows "Not Responding" the whole time:

(Before running this script, save and close all other JMP windows, since it tends to get stuck.)

    // get data table
    dt = Open( "$SAMPLE_DATA/Hurricanes.jmp" );

    // run the model
    obj = Fit Model(
        Y( :Storm Category ),
        Effects( :Latitude, :Longitude, :Latitude * :Longitude ),
        Personality( Nominal Logistic ),
        Run( Likelihood Ratio Tests( 0 ), Wald Tests( 0 ) )
    );

    // get the confidence intervals for the estimates
    obj << Confidence Intervals( 0.05 );

Re: What do you do with your models after you build them in JMP?

Jeff,

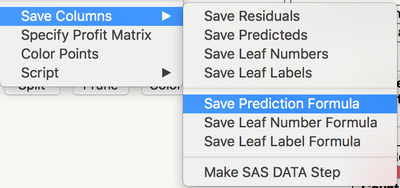

When in "working mode" I will end up with a number of models saved to a data table all with similar column names that I have to go and rename (or deal with predicted, predicted 2, predicted 3, etc.). It would be nice to have a "Save Prediction Formula with Name" option such that you could enter a model name in a dialog that would populate the resulting saved column name(s) in an appropriate manner.

Karen

Re: What do you do with your models after you build them in JMP?

Jeff:

I've got one customer that deploys the predictive models they build, validate and test in JMP Pro into their internal data warehouses for marketing intelligence purposes. These models are then in production within their warehouse business intelligence systems, predicting customer behaviors (retention probability, lifetime spend, market segmentation, and on and on). So basically they build, validate, and test the models in JMP Pro, then put them into production in the business intelligence architecture. Then when the existing BI models are no longer useful, well, back to JMP Pro for repairs, modification etc. Always testing out new BI initiatives with JMP Pro...but the heavy production lifting occurs in their BI systems external to JMP Pro.

Re: What do you do with your models after you build them in JMP?

Same here, Peter. You pretty much summarized my previous post.

Re: What do you do with your models after you build them in JMP?

The only difference between what I do and what this customer does comes after model development. The model is tested and validated in JMP: we set up Model Validation under Utilities before developing the model, and we make sure the essential stats in the validation platform make sense for the model itself. After going through those steps, we assess the model by using it to score other customers with similar historical context/data, grabbing their responses, and graphing predicted scores vs. actuals relative to where each of these customers stands within their respective decile, along with other business analytical units/stats.

Once we are pleased with the assessment, the model is launched into deployment, which goes as you described: MicroStrategy, Business Objects, IBM UNICA, Salesforce, etc. There the model is available to a wide range of stakeholders who use it for campaigns, prospecting, reactivation, segmentation for targeted campaigns, etc. So, apart from JMP's internal testing and validation, it is essential to assess the model before deploying it into production, since that lets you see whether anything needs to be added or removed, or whether the model needs additional improvement. No model is perfect!
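For readers who want to try this kind of decile check outside JMP, here is a minimal Python sketch. It is not the poster's actual process: the function name `decile_table`, the equal-count binning, and the sample data are all assumptions made for illustration. It ranks scored customers by predicted score, splits them into bins, and compares mean predicted vs. mean actual response in each bin.

```python
from statistics import mean


def decile_table(scores, actuals, n_bins=10):
    """Compare mean predicted score with mean actual response per score-ranked bin."""
    if len(scores) != len(actuals):
        raise ValueError("scores and actuals must have the same length")
    # Sort from highest to lowest predicted score so bin 1 holds the top-scored customers.
    ranked = sorted(zip(scores, actuals), key=lambda pair: pair[0], reverse=True)
    n = len(ranked)
    rows = []
    for b in range(n_bins):
        # Integer arithmetic spreads any remainder across the bins as evenly as possible.
        chunk = ranked[b * n // n_bins:(b + 1) * n // n_bins]
        if not chunk:
            continue  # fewer customers than bins
        rows.append({
            "decile": b + 1,
            "n": len(chunk),
            "mean_predicted": mean(p for p, _ in chunk),
            "mean_actual": mean(a for _, a in chunk),
        })
    return rows


# Made-up holdout data: predicted response probability and observed 0/1 response.
predicted = [0.91, 0.85, 0.77, 0.70, 0.64, 0.58, 0.51, 0.45, 0.40, 0.33,
             0.29, 0.24, 0.20, 0.17, 0.14, 0.11, 0.09, 0.06, 0.04, 0.02]
responded = [1, 1, 1, 0, 1, 1, 0, 1, 0, 0, 1, 0, 0, 0, 1, 0, 0, 0, 0, 0]

for row in decile_table(predicted, responded):
    print(row)
```

If the model ranks customers well, the mean actual response should generally fall from the first bin to the last, and large gaps between the predicted and actual columns point to calibration problems worth fixing before deployment.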

Re: What do you do with your models after you build them in JMP?

Jeff,

We develop models mainly for two reasons:

- To study the relationships and effects of model parameters on the predicted parameter. In this case the model development and analysis is done mainly in JMP, and prediction formulas are only used to evaluate the modelling accuracy and predictive power (in Graph Builder) or to compare different models, including nested models (in the Model Comparison tool). In some cases the model is then also exported to Excel.

- To develop a model for online implementation (monitoring or prediction). In this case the model equation is used either in our PIMS (production information management system) or in AspenIQ. AspenIQ is an Aspentech software tool that is commonly used as part of an advanced process control solution to automate a production process. Our current toolset is limited to linear models. We are, however, interested in deploying more advanced models (e.g. bootstrap forest, PLS, NN, ...). Currently, exporting these kinds of models from JMP is not so straightforward (reprogramming them in another tool or language requires much more work).
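To illustrate why linear models are the easy case for online implementation, here is a small Python sketch of a linear prediction equation re-coded as a standalone scoring function. Everything in it is hypothetical: the `LinearModel` class, the input names, and the coefficient values are placeholders, not output from JMP, PIMS, or AspenIQ.

```python
from dataclasses import dataclass
from typing import Mapping


@dataclass(frozen=True)
class LinearModel:
    """A linear prediction equation: intercept + sum(coefficient * input)."""
    intercept: float
    coefficients: Mapping[str, float]

    def predict(self, inputs: Mapping[str, float]) -> float:
        # Fail loudly if the process data is missing a term the equation needs.
        missing = [name for name in self.coefficients if name not in inputs]
        if missing:
            raise KeyError(f"missing inputs: {missing}")
        return self.intercept + sum(
            coef * inputs[name] for name, coef in self.coefficients.items()
        )


# Hypothetical coefficients, as if copied by hand from a saved prediction formula.
model = LinearModel(
    intercept=12.5,
    coefficients={"temperature": 0.8, "pressure": -1.5, "flow_rate": 0.25},
)

# One hypothetical online reading from the process.
reading = {"temperature": 180.0, "pressure": 2.0, "flow_rate": 40.0}
print(model.predict(reading))
```

A linear equation reduces to one intercept and one number per input, so it moves between tools with little effort. A bootstrap forest or neural network has no such compact form, which is why re-coding those by hand takes so much more work.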

Re: What do you do with your models after you build them in JMP?

Jeff,

If I run a model with a nominal factor that has many categories, I take note of the Least Squares Means table and rank the categories. Then I set the value ordering of that variable and change its modeling type to ordinal. When I rerun the model, I get a more meaningful interpretation of the coefficients and their significance, and the prediction profiler is visually more informative.

It would be nice if there were an option in the model platform or the output to rerun the model with categorical factors ordered in this post-hoc manner.

This also saves many of the custom tests otherwise needed to contrast the many categories.
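The reordering idea can be sketched outside JMP in a few lines of Python. This is a simplification made under a stated assumption: it ranks categories by their raw group means, which match least squares means only when the factor is the sole effect in the model. The function names and the data are made up for illustration.

```python
from collections import defaultdict
from statistics import mean


def rank_levels_by_mean(levels, responses):
    """Return the category levels ordered from lowest to highest mean response."""
    groups = defaultdict(list)
    for level, y in zip(levels, responses):
        groups[level].append(y)
    means = {level: mean(ys) for level, ys in groups.items()}
    return sorted(means, key=lambda level: means[level])


def to_ordinal_codes(levels, ordering):
    """Map each level to its 1-based position in the chosen ordering."""
    position = {level: i + 1 for i, level in enumerate(ordering)}
    return [position[level] for level in levels]


# Made-up data: a nominal factor and a continuous response.
region = ["north", "south", "east", "south", "north", "east"]
sales = [9.0, 3.0, 7.0, 5.0, 10.0, 8.0]

ordering = rank_levels_by_mean(region, sales)
print(ordering)                            # ['south', 'east', 'north']
print(to_ordinal_codes(region, ordering))  # [3, 1, 2, 1, 3, 2]
```

Because the ordering is chosen after looking at the response, significance tests on the reordered factor are likely to be optimistic, so the result is best treated as an aid to interpretation.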

Thanks!

Re: What do you do with your models after you build them in JMP?

Saving the prediction equation into a JMP column is a great feature. We use it all the time.

For common usage in the future, we also cut and paste it into Excel (and then edit). So a "Save in Excel format" option would be great. 99% of our internal clients who do not use JMP use Excel as their default data tool... (like it or not, ugh)
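Until such an option exists, the cut-paste-and-edit step can be scripted for simple linear equations. The Python sketch below builds an Excel formula string from an intercept and coefficients. It is illustrative only: the `excel_formula` helper, the coefficient values, and the cell mapping are assumptions, and it presumes an Excel locale that uses `.` as the decimal separator.

```python
def excel_formula(intercept, coefficients, cell_for):
    """Build an Excel formula string for intercept + sum(coefficient * cell)."""
    parts = [f"={float(intercept)!r}"]
    for name, coef in coefficients.items():
        # Write the sign explicitly so negative coefficients read as subtraction.
        sign = "+" if coef >= 0 else "-"
        parts.append(f"{sign}{abs(float(coef))!r}*{cell_for[name]}")
    return "".join(parts)


# Hypothetical coefficients from a saved prediction formula.
coefficients = {"temperature": 0.8, "pressure": -1.5, "flow_rate": 0.25}
# Hypothetical worksheet layout: which cell holds each input on row 2.
cells = {"temperature": "A2", "pressure": "B2", "flow_rate": "C2"}

print(excel_formula(12.5, coefficients, cells))
# =12.5+0.8*A2-1.5*B2+0.25*C2
```

Pasting the resulting string into the first data row and filling down scores the rest of the sheet, since the cell references are relative.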

- © 2026 JMP Statistical Discovery LLC. All Rights Reserved.