- JMP User Community

Even though it's not statistically significant, can I conduct multiple comparison test such as LSD?

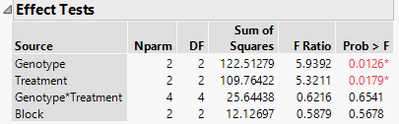

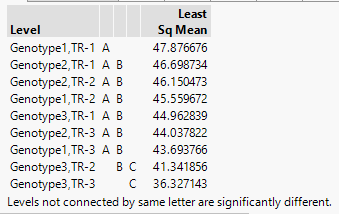

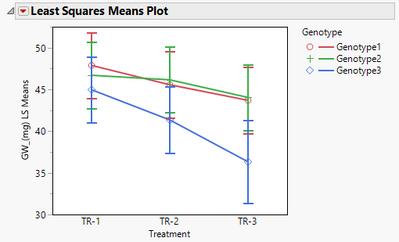

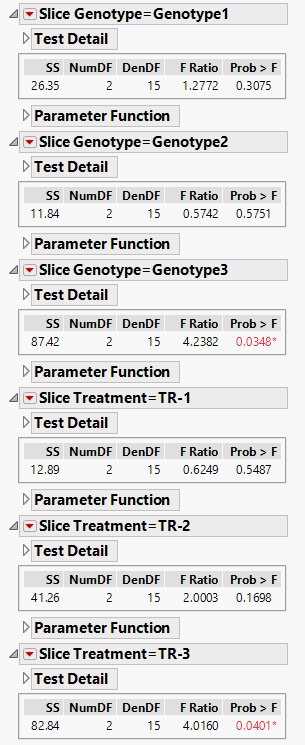

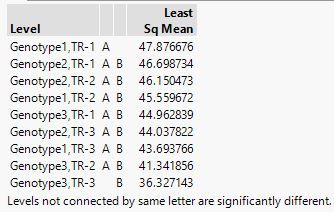

I ran a two-way ANOVA model with blocks. Each main effect was significant, but the interaction was not (A). However, when I checked the LSD, it showed differences among the interaction levels (B). The plot (C) also seems to show some differences visually. Therefore, I ran test slices on the interaction and found a difference among TRs within genotype 3, and also a difference among genotypes within TR-3 (D).

In this case, can I accept the LSD result and say that the "genotype 1 x TR-1" combination (47.876676) is greater than the "genotype 3 x TR-3" combination (36.327143), as the LSD result (B) indicates?

My question is: even though the interaction is not statistically significant, if the LSD result assigns different letters (a, b, c, ...), can I use the result?

Thanks,

A) ANOVA

B) LSD

C) Plot graph

D) Test slices

Accepted Solutions

Re: Even though it's not statistically significant, can I conduct multiple comparison test such as LSD?

Any statistical test can occasionally give a statistically significant result even when there is no real difference. Remember, it is 95% confidence, not 100%. So if you were to conduct 20 tests where no real differences exist, you would expect about one of them to be statistically significant by chance alone.
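The arithmetic behind the "20 tests" remark can be checked directly. This is a minimal Python sketch that assumes the tests are independent and each is run at a significance level of 0.05 (95% confidence); the function names are illustrative only.

```python
# Chance findings when many tests are run and no real differences exist.
ALPHA = 0.05  # significance level per test (95% confidence)
M = 20        # number of independent tests


def prob_at_least_one_false_positive(m: int, alpha: float = ALPHA) -> float:
    """P(at least one test is significant by chance) = 1 - (1 - alpha)^m.

    Assumes the m tests are independent.
    """
    return 1.0 - (1.0 - alpha) ** m


def expected_false_positives(m: int, alpha: float = ALPHA) -> float:
    """Expected number of chance 'significant' results = m * alpha."""
    return m * alpha


if __name__ == "__main__":
    p = prob_at_least_one_false_positive(M)
    e = expected_false_positives(M)
    print(f"P(at least one false positive in {M} tests) = {p:.3f}")
    print(f"Expected false positives in {M} tests = {e:.2f}")
```

With 20 independent tests you expect about one chance "significant" result, and the probability of getting at least one is roughly 64%.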

Because the ANOVA said the interaction is not significant, why would you perform a multiple comparison test to determine where the differences are? You have an answer there.

There is a famous quote (I forget the author) that many people use statistics more for support than illumination. Be careful that you do not fall into that trap. Statistics should be for illumination. I would encourage you to consider what the data is telling you. If you feel the differences should be significant and they are not, why not? Is the error in the test method too large? Perhaps the sample size was too small? Perhaps the factor changes were not of a large enough magnitude to overcome the inherent variability in the process? Perhaps the experimental protocol was not followed?
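To see how a large measurement error or a small sample size can hide a real difference, here is a sketch of the approximate power of a two-sided comparison of two means. It uses a normal approximation with a known within-group standard deviation, so it is optimistic compared with the t-based tests in a blocked ANOVA with few error degrees of freedom. The values used in the example (a true difference of 10 units, sigma = 8) are hypothetical and not taken from this thread.

```python
from statistics import NormalDist

STD_NORMAL = NormalDist()  # standard normal: mean 0, sd 1


def two_sample_power(delta: float, sigma: float, n_per_group: int,
                     alpha: float = 0.05) -> float:
    """Approximate power of a two-sided two-sample z-test.

    delta:       true difference between the two means (hypothetical)
    sigma:       within-group standard deviation, assumed known
    n_per_group: number of replicates in each group
    """
    z_crit = STD_NORMAL.inv_cdf(1 - alpha / 2)
    se_diff = sigma * (2 / n_per_group) ** 0.5  # standard error of the difference
    shift = delta / se_diff                     # true difference in SE units
    # Probability that the test statistic falls beyond either critical value.
    return STD_NORMAL.cdf(shift - z_crit) + STD_NORMAL.cdf(-shift - z_crit)


if __name__ == "__main__":
    # Same hypothetical difference and noise, increasing replication.
    for n in (3, 5, 10, 20):
        print(n, round(two_sample_power(delta=10, sigma=8, n_per_group=n), 3))
```

The same difference that is hard to detect with a few replicates becomes easy to detect with more replicates or a less noisy measurement, which is why those questions are worth asking before trying other tests.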

Experimentation is a wonderful thing as it almost always gives new avenues to explore and explain.

Re: Even though it's not statistically significant, can I conduct multiple comparison test such as LSD?

This situation is not uncommon when using LSD. The LSD approach only controls the pairwise comparison error rate (each comparison is made at your specified confidence level, usually 95%). LSD does NOT control the overall experimentwise error rate. Your interaction has 9 levels (3 genotypes x 3 TRs), which means 9 x 8 / 2 = 36 pairwise comparisons. If those comparisons were independent, your confidence that ALL of your conclusions are correct would be only 0.95^36 ≈ 0.16, or 16%. The comparisons share cell means, so they are not truly independent and this is only a rough figure, but your overall confidence is still far below 95%. In other words, LSD may find differences that are not real. For this reason most people use LSD only if the overall ANOVA is significant. Since your interaction was not significant, you should not use LSD on the interaction levels.

Another approach many people use is the Tukey-Kramer HSD method, which controls the experimentwise error rate and would typically avoid this problem.
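Because pairwise comparisons share cell means, a simple 0.95^k calculation is only a rough guide. A small Monte Carlo sketch can estimate how often unprotected LSD declares at least one false difference among 9 cell means when no real differences exist. It assumes equal replication and a known error variance, so a normal critical value stands in for the t critical value; those simplifications, the function name, and the seed are illustrative, not what JMP computes.

```python
import random
from itertools import combinations
from math import comb
from statistics import NormalDist

N_CELLS = 9      # 3 genotypes x 3 TRs
ALPHA = 0.05     # per-comparison significance level
N_SIM = 20_000   # number of simulated experiments


def lsd_familywise_error(n_cells: int = N_CELLS, alpha: float = ALPHA,
                         n_sim: int = N_SIM, seed: int = 1) -> float:
    """Estimate P(at least one false 'significant' pair) for unprotected LSD.

    Assumes all cells share the same true mean (no real differences), equal
    replication, and known error variance, so each cell mean can be drawn
    as a standard normal in standard-error-of-a-mean units.
    """
    rng = random.Random(seed)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    lsd = z_crit * 2 ** 0.5  # z * SE(difference), in SE(mean) units
    false_alarms = 0
    for _ in range(n_sim):
        means = [rng.gauss(0.0, 1.0) for _ in range(n_cells)]
        # LSD compares every pair separately at level alpha.
        if any(abs(a - b) > lsd for a, b in combinations(means, 2)):
            false_alarms += 1
    return false_alarms / n_sim


if __name__ == "__main__":
    pairs = comb(N_CELLS, 2)
    print("pairwise comparisons:", pairs)
    print("independence approximation:", round(1 - (1 - ALPHA) ** pairs, 3))
    print("simulated familywise error:", round(lsd_familywise_error(), 3))
```

Under these assumptions the simulated familywise error rate comes out many times larger than the nominal 5%. A t critical value with few error degrees of freedom would lower it somewhat without changing the conclusion.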
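To illustrate why Tukey-Kramer HSD controls the experimentwise error rate where LSD does not, the sketch below estimates the HSD critical value by simulation. It is the 95th percentile of the range (largest minus smallest) of all the cell means when no real differences exist, so the chance that any pair exceeds it is 5% by construction. It assumes equal replication and a known error variance (infinite error df); JMP and published tables use the exact studentized range distribution with your actual error df, so treat the simulated value as approximate. The function name and seed are illustrative.

```python
import random
from statistics import NormalDist


def tukey_critical_range(n_means: int = 9, alpha: float = 0.05,
                         n_sim: int = 20_000, seed: int = 2) -> float:
    """Monte Carlo estimate of the studentized range critical value q(alpha, k, inf).

    With known error variance and equal replication, the range of k
    independent standard normal cell means (in SE(mean) units) follows the
    studentized range distribution with infinite error df. Its upper-alpha
    quantile is the Tukey HSD critical value.
    """
    rng = random.Random(seed)
    ranges = []
    for _ in range(n_sim):
        means = [rng.gauss(0.0, 1.0) for _ in range(n_means)]
        ranges.append(max(means) - min(means))
    ranges.sort()
    return ranges[int((1 - alpha) * n_sim)]


if __name__ == "__main__":
    lsd = NormalDist().inv_cdf(0.975) * 2 ** 0.5  # LSD threshold, SE(mean) units
    print("LSD threshold:", round(lsd, 2))
    print("HSD threshold, 9 means:", round(tukey_critical_range(9), 2))
    print("HSD threshold, 2 means:", round(tukey_critical_range(2), 2))
```

For 9 means the estimate should land near the tabulated q(0.05, 9, ∞) of about 4.39, versus about 2.77 for LSD; with only 2 means the two thresholds coincide. The larger HSD threshold is the price of keeping the overall error rate at 5% across all 36 comparisons.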

Re: Even though it's not statistically significant, can I conduct multiple comparison test such as LSD?

Dear Dan Obermiller,

Thank you so much for your reply. It really helps me understand. I have a follow-up question.

You suggested using the Tukey-Kramer HSD method; the result is below.

If I use Tukey HSD, can I say the result for 'Genotype1 x TR-1' is greater than the result for 'Genotype3 x TR-3'?

TR-3 is an extreme treatment that I imposed, so common sense tells me it is impossible that all interaction combinations give the same result. Genotype1 x TR-1 is 47.876676 and Genotype3 x TR-3 is 36.327143. The difference is more than 10, which is a huge difference in the context of my experiment. So if I use Tukey HSD, can I accept the difference between 'Genotype1 x TR-1' and 'Genotype3 x TR-3', even though the interaction in the ANOVA is not significant?

Also, if that case is acceptable, could you explain why Tukey HSD is acceptable but LSD is not?

Many thanks,

Sincerely,

JK

Re: Even though it's not statistically significant, can I conduct multiple comparison test such as LSD?

Thank you so much for your comments. They make me think more carefully about the principles behind my experiments.

Many thanks,

Sincerely,

JK

- © 2024 JMP Statistical Discovery LLC. All Rights Reserved.